Python News Analysis: How to Build a RAG-Powered Q&A System

Introduction

In today’s hyper-connected world, we are inundated with a constant stream of news from countless sources. Keeping up with specific topics, tracking developments, or finding precise information within this deluge can feel like searching for a needle in a haystack. Traditional search engines offer a list of links, but they don’t synthesize information or answer complex questions directly. This is where the power of Python and modern AI techniques comes into play. By leveraging a sophisticated architecture known as Retrieval-Augmented Generation (RAG), developers can build intelligent systems capable of ingesting vast amounts of news content and providing accurate, context-aware answers to user queries.

This article provides a comprehensive technical guide to building a RAG-powered Q&A system specifically for news analysis. We will explore the core components, walk through a practical implementation using popular Python libraries, discuss advanced optimization strategies, and examine real-world applications. Whether you’re a data scientist, an AI enthusiast, or a Python developer looking to build next-generation information retrieval tools, this guide will provide the foundational knowledge and practical code examples to get you started on your journey to mastering Python news analysis.

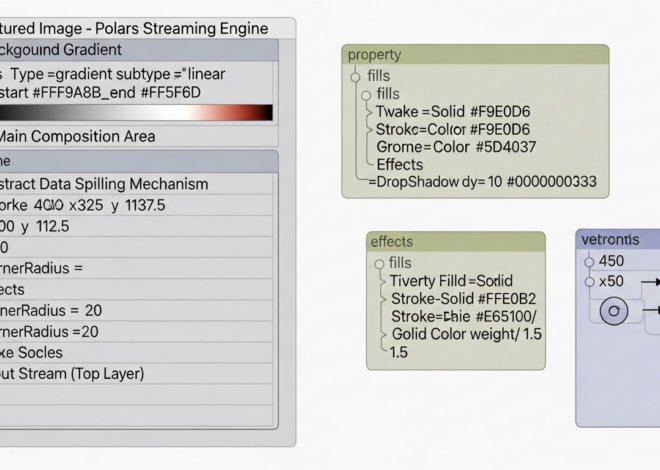

The Anatomy of a News-Focused RAG System

At its core, a RAG system combines two fundamental AI concepts: information retrieval and natural language generation. Instead of relying solely on the pre-trained knowledge of a Large Language Model (LLM), which can be outdated or lack specific domain knowledge, RAG first retrieves relevant information from a custom knowledge base, in our case a collection of news articles. This retrieved context is then provided to the LLM, which uses it to generate a factually grounded answer. This approach tends to reduce hallucinations and keeps responses tied to the provided source material, although the model can still misread or ignore the context.

What is Retrieval-Augmented Generation (RAG)?

Imagine you ask an expert a question. Instead of answering from memory alone, they first pull out a few relevant reference books, read the key passages, and then formulate an answer based on that specific information. This is how RAG works. The “retrieval” step finds the right “books” (or text chunks), and the “generation” step synthesizes the answer. This is particularly effective for news applications because news is time-sensitive and constantly evolving. A standard LLM trained a year ago wouldn’t know about last week’s market trends, but a RAG system connected to a database of recent articles would.

The Core Python-Powered Components

A typical news RAG pipeline is built on a stack of specialized Python libraries and services, each playing a critical role:

Document Loading and Chunking: The first step is to ingest the news articles. This can be done by scraping websites with libraries like BeautifulSoup or fetching data from news APIs with requests. Since LLMs have context window limits, these articles are broken down into smaller, manageable “chunks” of text.

Embedding Generation: Each text chunk is converted into a numerical representation called an embedding. These high-dimensional vectors capture the semantic meaning of the text. The sentence-transformers library is a popular and powerful choice for creating these embeddings locally and for free.

Vector Storage and Retrieval: These embeddings are stored in a specialized vector store. Unlike traditional databases that search for exact keyword matches, a vector database such as ChromaDB, or a similarity-search library such as FAISS, finds chunks based on semantic similarity. When a user asks a question, their query is also converted into an embedding, and the store finds the most relevant text chunks by comparing vector proximity (for example, cosine similarity or Euclidean distance).

The Large Language Model (LLM): This is the generative component. The user’s original query and the relevant text chunks retrieved from the vector database are combined into a detailed prompt. This prompt is then sent to an LLM (e.g., via the OpenAI API or a locally hosted model from Hugging Face), which is instructed to generate a coherent, human-readable answer based *only* on the provided context.
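To make “comparing vector proximity” concrete, here is a minimal sketch using only the standard library. The three-dimensional vectors and chunk labels are made up for illustration; a real embedding model produces vectors with hundreds of dimensions, and a vector store would use an optimized index rather than this brute-force loop.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Hypothetical toy "embeddings" (assumption: real ones come from an embedding model).
toy_index = {
    "solar investment chunk": [0.9, 0.1, 0.0],
    "battery research chunk": [0.1, 0.9, 0.2],
    "grid upgrade chunk": [0.2, 0.3, 0.9],
}

def top_k(query_vector, index, k=2):
    """Return the k chunk labels whose vectors are most similar to the query."""
    scored = [(cosine_similarity(query_vector, vec), label) for label, vec in index.items()]
    scored.sort(reverse=True)  # highest similarity first
    return [label for _, label in scored[:k]]

# Made-up vector standing in for an embedded question about batteries.
query = [0.2, 0.8, 0.1]
print(top_k(query, toy_index))
```

With these toy numbers the battery chunk ranks first because its vector points in nearly the same direction as the query vector.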

A Practical Walkthrough: Building a Python News RAG Pipeline

Let’s move from theory to practice. This section provides a step-by-step guide with Python code snippets to build a basic news RAG system. We’ll use sentence-transformers for embeddings and chromadb for our vector store.

First, ensure you have the necessary libraries installed:

pip install chromadb sentence-transformers

Step 1: Data Ingestion and Preprocessing

Our knowledge base will be a collection of news articles. For this example, we’ll use a few sample articles about recent developments in renewable energy. In a real-world scenario, you would fetch this data dynamically. The next crucial step is chunking the text. A simple strategy is to split the text into paragraphs or fixed-size chunks with some overlap to maintain context between them.

# Sample news articles (in a real app, you'd fetch these from an API or scrape them)
news_articles = [
    {
        "id": "news_001",
        "content": "Global investment in renewable energy reached a new high in the last quarter, totaling over $100 billion. Solar power projects led the charge, attracting nearly 60% of the total funds. Experts attribute this surge to falling production costs and increased government incentives aimed at combating climate change."
    },
    {
        "id": "news_002",
        "content": "A breakthrough in battery technology has been announced by researchers at a leading university. The new lithium-sulfur battery boasts twice the energy density of current lithium-ion models and is made from cheaper, more abundant materials. This could revolutionize the electric vehicle market and grid-scale energy storage solutions."
    },
    {
        "id": "news_003",
        "content": "Despite the growth in renewables, challenges remain. Grid infrastructure in many countries is not yet equipped to handle the intermittent nature of wind and solar power. Significant upgrades are needed to ensure a stable energy supply as the world transitions away from fossil fuels. The new battery technology could be a key part of the solution."
    }
]

# Simple word-based chunking (in a real app, consider a more robust splitter
# such as LangChain's text splitters)
def chunk_text(text, chunk_size=150, overlap=20):
    """Split text into chunks of up to chunk_size words that share overlap words."""
    words = text.split()
    step = chunk_size - overlap  # must be positive, so keep overlap < chunk_size
    chunks = []
    for i in range(0, len(words), step):
        chunks.append(" ".join(words[i:i + chunk_size]))
        if i + chunk_size >= len(words):
            break  # this chunk already reaches the end; avoid a redundant tail chunk
    return chunks

# Process and chunk all articles, recording which article each chunk came from
all_chunks = []
metadatas = []
for article in news_articles:
    for chunk in chunk_text(article["content"]):
        all_chunks.append(chunk)
        metadatas.append({"source_id": article["id"]})

# Create unique string IDs for each chunk
chunk_ids = [str(i) for i in range(len(all_chunks))]

print(f"Total chunks created: {len(all_chunks)}")
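The sample articles are each shorter than one chunk, so the overlap never comes into play in the walkthrough. The following sketch shows the same sliding-window idea on numbered placeholder words with a deliberately tiny window. The helper name `sliding_chunks` and its parameters are hypothetical and exist only for this illustration.

```python
def sliding_chunks(words, chunk_size, overlap):
    """Return overlapping windows of words (illustrative helper, not a library API)."""
    if overlap >= chunk_size:
        raise ValueError("overlap must be smaller than chunk_size")
    step = chunk_size - overlap  # how far the window advances each time
    windows = []
    for start in range(0, len(words), step):
        windows.append(words[start:start + chunk_size])
        if start + chunk_size >= len(words):
            break  # the last window already reaches the end of the text
    return windows

# Ten placeholder tokens: w0 ... w9
tokens = [f"w{i}" for i in range(10)]
for window in sliding_chunks(tokens, chunk_size=4, overlap=1):
    print(window)
```

Each window starts three words after the previous one, so the last word of one chunk (`w3`, `w6`) reappears as the first word of the next. That shared word is the context carried across the boundary.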

Step 2: Generating and Storing Embeddings

Now, we’ll use sentence-transformers to convert our text chunks into vector embeddings and store them in ChromaDB. ChromaDB is a lightweight, open-source vector database that is easy to set up and use in-memory or as a client-server application.

import chromadb
from sentence_transformers import SentenceTransformer

# Initialize the embedding model
model = SentenceTransformer("all-MiniLM-L6-v2")

# Initialize ChromaDB client (in-memory for this example)
client = chromadb.Client()

# Create a collection to store our news chunks.
# We pass precomputed embeddings below, so Chroma's default embedding
# function is not used for these documents.
collection = client.create_collection("news_collection")

# Generate embeddings for all chunks (a NumPy array with one row per chunk)
embeddings = model.encode(all_chunks)

# Add the chunks, embeddings, and metadata to the collection
collection.add(
    embeddings=embeddings.tolist(),  # convert to plain lists for compatibility
    documents=all_chunks,
    metadatas=metadatas,
    ids=chunk_ids,
)

print("Embeddings generated and stored in ChromaDB.")

Step 3: The Retrieval and Generation Loop

This is the core of the Q&A functionality. When a user asks a question, we embed the query, use ChromaDB to find the most similar chunks, and then construct a prompt for an LLM.

def query_news(question, n_results=2):
    # 1. Embed the user's question with the same model used for the chunks
    question_embedding = model.encode([question])[0].tolist()

    # 2. Query ChromaDB to find the most relevant chunks
    results = collection.query(
        query_embeddings=[question_embedding],
        n_results=n_results,
    )
    retrieved_chunks = results["documents"][0]  # results for the first (only) query
    context = "\n\n---\n\n".join(retrieved_chunks)

    # 3. Construct the prompt for the LLM
    prompt_template = f"""
Based on the following news context, please answer the user's question.
If the context does not contain the answer, state that you don't have enough information.

Context:
{context}

Question:
{question}

Answer:
"""
    return prompt_template, retrieved_chunks

# --- Mock LLM Interaction ---
# In a real application, you would send this prompt to an LLM API (e.g., OpenAI, Anthropic)
def get_llm_response(prompt):
    print("--- PROMPT SENT TO LLM ---")
    print(prompt)
    print("--------------------------")
    # This is a mock response keyed on words anywhere in the prompt
    # (question or retrieved context). Replace with an actual API call.
    if "battery" in prompt.lower():
        return "A new lithium-sulfur battery has been announced which has double the energy density of current models and is made from cheaper materials."
    elif "investment" in prompt.lower():
        return "Global investment in renewable energy surpassed $100 billion in the last quarter, with solar power attracting the majority of the funds."
    else:
        return "I do not have enough information from the provided context to answer that question."

# Example Usage
user_question = "What is the latest news on battery technology?"
final_prompt, retrieved_docs = query_news(user_question)
answer = get_llm_response(final_prompt)

print(f"\nQuestion: {user_question}")
print(f"Answer: {answer}")

Beyond the Basics: Optimizing Your News RAG System

The basic pipeline is powerful, but for a production-grade system, several optimizations are necessary to improve accuracy, relevance, and efficiency.

Enhancing Retrieval Quality

The quality of the retrieved context is the single most important factor for a RAG system’s performance. If you retrieve irrelevant documents, the LLM will produce a poor or incorrect answer.

Advanced Chunking Strategies: Instead of fixed-size chunks, consider semantic chunking, which splits text based on topical shifts. Libraries like nltk can be used for sentence splitting, ensuring you don’t cut off a sentence midway. Using a small overlap between chunks helps preserve context across boundaries.

Hybrid Search: Vector search excels at finding semantically similar concepts (e.g., “investment growth” and “funding increase”), but it can sometimes miss specific keywords or acronyms. Hybrid search combines traditional keyword-based search (like BM25) with vector search to get the best of both worlds, improving retrieval for queries containing specific terms.

Re-ranking: A common two-stage approach involves first retrieving a larger set of candidate documents (e.g., 20 chunks) using an efficient vector search. Then, a more sophisticated and computationally expensive model (a cross-encoder) is used to re-rank these 20 candidates to find the top 3-5 most relevant ones to pass to the LLM.
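One way to combine keyword and vector results for hybrid search is Reciprocal Rank Fusion (RRF), which merges ranked lists using only each document’s position in every list. The article does not prescribe a fusion method, so treat this as one possible sketch. The result lists below are hypothetical, and `k=60` is a commonly used default rather than a tuned value.

```python
def reciprocal_rank_fusion(rankings, k=60):
    """Merge several ranked lists of document IDs into one fused ranking.

    Each document scores sum(1 / (k + rank)) over the lists it appears in,
    with rank starting at 1, so documents ranked well by several retrievers
    rise to the top.
    """
    scores = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    # Sort by score descending; break ties by ID for deterministic output.
    return sorted(scores, key=lambda doc_id: (-scores[doc_id], doc_id))

# Hypothetical results for the same query from two retrievers.
keyword_hits = ["news_002", "news_003", "news_001"]  # e.g. from a BM25 index
vector_hits = ["news_003", "news_004", "news_002"]   # e.g. from a vector store

fused = reciprocal_rank_fusion([keyword_hits, vector_hits])
print(fused)
```

Documents that appear in both lists (`news_003`, `news_002`) outrank those found by only one retriever. The fused list could then be truncated, or handed to a cross-encoder for the re-ranking stage described above.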

Managing Real-Time Python News Feeds

News is dynamic. A system built today quickly loses value if it isn’t updated with tomorrow’s headlines. Managing a continuous feed of news articles presents its own challenges. Your architecture must support efficient additions, updates, and deletions in the vector database. When a new article comes in, it must be chunked, embedded, and added to the collection. Handling updates to existing articles or retractions is more complex, because the old vectors must be deleted before new ones are inserted. Building a robust data pipeline using tools like Apache Airflow or Celery to automate this ingestion process is crucial for maintaining a fresh and relevant knowledge base.
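The add, update, and delete decisions can be sketched independently of any particular vector database. The example below assumes each article has a stable ID and uses a content hash to detect changes. The function names and the convention that `None` marks a retraction are hypothetical choices for this illustration.

```python
import hashlib

def content_hash(text):
    """Stable fingerprint of an article body."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()

def plan_sync(incoming, known_hashes):
    """Decide what to do with each article in a feed batch.

    incoming: dict of article_id -> current text (None marks a retraction).
    known_hashes: dict of article_id -> hash of the version already indexed.
    Returns (to_delete, to_index): IDs whose old vectors must be removed,
    and IDs that need fresh chunking and embedding.
    """
    to_delete, to_index = [], []
    for article_id, text in incoming.items():
        if text is None:
            if article_id in known_hashes:
                to_delete.append(article_id)  # retracted: remove its vectors
            continue
        old_hash = known_hashes.get(article_id)
        if old_hash is None:
            to_index.append(article_id)       # never seen before: index it
        elif old_hash != content_hash(text):
            to_delete.append(article_id)      # changed: drop stale vectors...
            to_index.append(article_id)       # ...then index the new version
    return to_delete, to_index

known = {
    "news_001": content_hash("Original text."),
    "news_002": content_hash("Old text."),
}
batch = {
    "news_001": "Original text.",      # unchanged, skipped
    "news_002": "Corrected text.",     # updated
    "news_003": "Brand new article.",  # new
    "news_004": None,                  # retraction of an article never indexed
}
print(plan_sync(batch, known))
```

In the walkthrough’s setup, where every chunk carries a `source_id` in its metadata, the delete step could plausibly be a metadata-filtered call such as `collection.delete(where={"source_id": article_id})`, followed by the usual chunk, embed, and `collection.add` sequence. Check the behavior against the ChromaDB version you are running.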

Real-World Applications and Considerations

The applications of a news-focused RAG system are vast and transformative. Financial analysts can use it to query thousands of earnings reports and market news articles to instantly synthesize sentiment and identify trends. Market researchers can track competitor mentions and industry developments. Journalists can use it to quickly find background information and connect disparate events from a large archive of stories. It can even power personalized news assistants that provide daily briefings tailored to a user’s specific interests.

Common Pitfalls and Best Practices

Garbage In, Garbage Out: The performance of your RAG system is fundamentally limited by the quality of your source data. Ensure your news sources are reliable and that your data cleaning process removes boilerplate content like ads, navigation menus, and social media links.

The “Lost in the Middle” Problem: Studies have shown that LLMs tend to pay more attention to information at the very beginning and very end of the provided context. When constructing your prompt, consider placing the most relevant chunks at the start or end to maximize their impact on the final answer.

Cost and Latency Management: API calls to powerful LLMs and embedding services can be expensive and add latency. Implement caching for common queries. For high-volume applications, consider using smaller, fine-tuned open-source models that can be hosted locally to reduce both cost and response time.
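For the “Lost in the Middle” point, one simple mitigation is to reorder retrieved chunks so the strongest ones sit at the edges of the context. The sketch below assumes the input list is already sorted from most to least relevant. The function name is hypothetical, and whether this reordering helps in practice depends on the model, so it is worth measuring.

```python
def reorder_for_prompt(chunks_by_relevance):
    """Place the strongest chunks at the start and end of the context.

    Input is ordered most-relevant first. Chunks are dealt alternately to the
    front and the back, so the weakest chunks end up in the middle.
    """
    front, back = [], []
    for position, chunk in enumerate(chunks_by_relevance):
        if position % 2 == 0:
            front.append(chunk)
        else:
            back.append(chunk)
    return front + back[::-1]

ranked = ["A (best)", "B", "C", "D", "E (worst)"]
print(reorder_for_prompt(ranked))
```

Here the best chunk opens the context, the second-best closes it, and the weakest lands in the middle, where the model is thought to pay the least attention.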

Conclusion

Retrieval-Augmented Generation changes how we interact with large volumes of information. By combining the broad, pre-trained knowledge of LLMs with the factual grounding of a specific, up-to-date knowledge base, we can build Q&A systems that are both capable and easier to verify. For news analysis in Python, this technology offers a practical way to tame information overload, enabling users to find precise answers and deeper insights across a large body of articles.

As we’ve seen, Python, with its rich ecosystem of libraries like sentence-transformers and chromadb, provides all the necessary tools to build these sophisticated pipelines. From initial data ingestion and chunking to embedding, retrieval, and final generation, Python serves as the ideal language for orchestrating this complex workflow. While the path to a production-ready system involves careful optimization and consideration of real-world data dynamics, the foundational principles and code outlined here provide a solid starting point for anyone looking to build the next generation of intelligent news analysis tools.