Python Performance Profiling and Optimization – Part 5

Welcome back to our comprehensive series on Python performance. In the previous installments, we laid the groundwork by exploring fundamental profiling tools like cProfile and timeit, helping you identify the CPU-bound hotspots in your synchronous code. However, as applications grow in complexity and scale, performance bottlenecks often shift from raw computation to more nuanced areas like memory management and asynchronous I/O handling. Simply knowing which function takes the longest is no longer enough.

In this fifth part, we elevate our analysis to tackle these advanced challenges. We will move beyond the CPU and dive deep into the world of memory profiling to hunt down memory leaks and optimize usage. Furthermore, we’ll navigate the intricacies of profiling modern asynchronous applications built with asyncio, where traditional profilers can be misleading. This guide is designed to equip you with the advanced tools and techniques necessary to diagnose and resolve the subtle yet critical performance issues that plague large-scale Python systems. Let’s sharpen our tools and get ready to transform our applications from functional to truly high-performing.

Beyond CPU: The Critical Role of Memory Profiling

Standard CPU profilers are excellent at answering the question, “Where is my program spending its time?” But they often fail to answer the crucial follow-up, “Why is it spending so much time there?” A function might appear slow not because its logic is computationally expensive, but because it’s allocating vast amounts of memory, triggering frequent and costly Garbage Collection (GC) cycles. In a world of containerized applications and cloud-based deployments, inefficient memory usage doesn’t just slow down your application—it can lead to increased infrastructure costs and even abrupt termination by an Out-Of-Memory (OOM) killer.

Two primary memory-related problems plague applications:

- High Memory Usage (Bloat): This occurs when an application consumes more memory than necessary. This could be due to loading an entire file into memory at once instead of streaming it, or using inefficient data structures (e.g., a list of objects where a generator or a more compact structure would suffice).

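- Memory Leaks: This is a more insidious issue where memory is allocated but never released after it’s no longer needed. In Python, this often happens when objects are unintentionally kept alive by lingering references in global caches or closures. Reference cycles are a related trap: reference counting alone can never free them, so they persist until the cyclic garbage collector runs (or indefinitely if it has been disabled), and any large objects they point to stay alive with them.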
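To see why reference cycles behave differently from other garbage, the sketch below builds a two-object cycle with a hypothetical Node class, drops every outside reference to it, and uses a weakref to observe that the objects survive until the cyclic collector runs. Automatic collection is switched off during the demo only so the outcome is deterministic; the result shown is what CPython's reference counting plus cyclic GC produces.

```python
import gc
import weakref


class Node:
    """Hypothetical object that can point at a partner node."""

    def __init__(self, name):
        self.name = name
        self.partner = None


def make_orphaned_cycle():
    """Build a -> b -> a, drop all outside references, return a weak probe."""
    a, b = Node("a"), Node("b")
    a.partner, b.partner = b, a
    return weakref.ref(a)  # a weak reference does not keep `a` alive


def demo_cycle():
    """Return (alive_before_collect, alive_after_collect)."""
    was_enabled = gc.isenabled()
    gc.disable()  # stop automatic collection so the result is deterministic
    try:
        probe = make_orphaned_cycle()
        alive_before = probe() is not None  # refcounts never drop to zero
        gc.collect()                        # the cyclic collector finds the cycle
        alive_after = probe() is not None
    finally:
        if was_enabled:
            gc.enable()
    return alive_before, alive_after


if __name__ == "__main__":
    print(demo_cycle())  # (True, False) on CPython
```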

Introducing memory-profiler for Line-by-Line Analysis

One of the most effective tools for understanding memory usage is the third-party library memory-profiler. It provides a simple decorator, @profile, that can be applied to any function to get a line-by-line report of its memory consumption. This fine-grained detail is invaluable for pinpointing exactly which operations are responsible for memory bloat.

To use it, first install the library:

```shell
pip install memory-profiler
```

Now, let’s analyze a simple function that creates a large list in a suboptimal way:

```python
# To be run with: python -m memory_profiler your_script.py
from memory_profiler import profile

@profile
def process_data():
    """A function that consumes a significant amount of memory."""
    print("Processing started...")
    # Create a large list of numbers
    large_list = [i * 2 for i in range(10**6)]
    # Create a copy, consuming even more memory
    list_copy = large_list[:]
    # Perform some operation
    total = sum(list_copy)
    print(f"Processing finished. Total: {total}")
    # The memory for large_list and list_copy is released here
    return total


if __name__ == "__main__":
    process_data()
```

When you run this script using the memory_profiler module, it produces a detailed report:

```text
Line #    Mem usage    Increment   Line Contents
================================================
     4     35.4 MiB     35.4 MiB   @profile
     5                             def process_data():
     6                                 """A function that consumes a significant amount of memory."""
     7     35.4 MiB      0.0 MiB       print("Processing started...")
     8                                 # Create a large list of numbers
     9     73.8 MiB     38.4 MiB       large_list = [i * 2 for i in range(10**6)]
    10                                 # Create a copy, consuming even more memory
    11    112.2 MiB     38.4 MiB       list_copy = large_list[:]
    12                                 # Perform some operation
    13    112.2 MiB      0.0 MiB       total = sum(list_copy)
    14    112.2 MiB      0.0 MiB       print(f"Processing finished. Total: {total}")
    15                                 # The memory for large_list and list_copy is released here
    16    112.2 MiB      0.0 MiB       return total
```

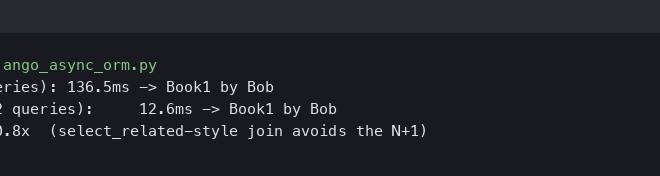

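The Increment column is the most important here. It shows that building large_list added about 38.4 MiB, which immediately tells us where our memory is being allocated. Read the second increment with some care: large_list[:] is a shallow copy, so it duplicates only the list’s array of references (about 8 bytes per element on a 64-bit build), not the integer objects themselves, and on a typical run that line costs noticeably less than the line that created the integers. Because memory-profiler measures the memory of the whole process, exact figures also vary by platform and Python version. With this information, we could refactor the code to use a generator expression so the full list never has to be held in memory at once, drastically reducing its peak memory footprint.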
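A minimal sketch of that refactor is below. So that it runs without extra dependencies, it measures peak allocations with the standard-library tracemalloc module (introduced in the next section) instead of memory-profiler; total_with_lists, total_with_generator, and peak_traced_bytes are illustrative names, not part of either library. tracemalloc counts only allocations made through Python’s allocators, so its figures will not match memory-profiler’s process-level numbers exactly.

```python
import tracemalloc


def total_with_lists(n):
    """Mirrors process_data(): build a list, copy it, then sum the copy."""
    large_list = [i * 2 for i in range(n)]
    list_copy = large_list[:]
    return sum(list_copy)


def total_with_generator(n):
    """Same result, but values are produced one at a time and never stored."""
    return sum(i * 2 for i in range(n))


def peak_traced_bytes(func, n):
    """Run func(n) under tracemalloc and return (result, peak traced bytes)."""
    tracemalloc.start()
    try:
        result = func(n)
        _, peak = tracemalloc.get_traced_memory()  # (current, peak)
    finally:
        tracemalloc.stop()
    return result, peak


if __name__ == "__main__":
    for func in (total_with_lists, total_with_generator):
        result, peak = peak_traced_bytes(func, 10**6)
        print(f"{func.__name__}: total={result}, peak={peak / 2**20:.2f} MiB")
```

Expect the list-based peak to grow with n while the generator’s peak stays small and roughly constant; the exact byte counts depend on your Python version.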

Pinpointing Memory Leaks with `tracemalloc`

While memory-profiler is excellent for analyzing memory usage within a function’s scope, it’s less suited for finding memory leaks that accumulate over the lifetime of an application. For this, Python’s built-in tracemalloc module is the perfect tool. Available since Python 3.4, tracemalloc can trace every single memory block allocated by Python, telling you exactly where it came from.

The standard workflow for detecting leaks with tracemalloc involves taking snapshots of the memory allocation state at different points in time and comparing them. This allows you to see which allocations are persisting when they should have been cleared.

A Practical `tracemalloc` Workflow

Let’s simulate a common source of memory leaks: a cache or global collection that grows indefinitely.

```python
import tracemalloc
import time

# A global list that will "leak" memory
leaky_data = []


def process_request(data):
    # Simulates processing some data and storing it
    # The reference is stored globally and never removed
    processed_data = data * 10
    leaky_data.append(processed_data)


def main():
    tracemalloc.start()

    # --- First Snapshot ---
    print("Taking initial snapshot...")
    snapshot1 = tracemalloc.take_snapshot()

    # Simulate running the application for a while
    for i in range(10000):
        process_request(str(i))

    # --- Second Snapshot ---
    print("Taking final snapshot...")
    snapshot2 = tracemalloc.take_snapshot()
    tracemalloc.stop()

    # --- Compare Snapshots ---
    top_stats = snapshot2.compare_to(snapshot1, 'lineno')
    print("\n[ Top 10 memory differences ]")
    for stat in top_stats[:10]:
        print(stat)


if __name__ == "__main__":
    main()
```

Running this script will produce output similar to this:

```text
[ Top 10 memory differences ]
your_script.py:11: size=483 KiB (+483 KiB), count=10000 (+10000), average=49 B
```

The output is incredibly precise. It tells us that line 11 (processed_data = data * 10) is responsible for a cumulative increase of 483 KiB across 10,000 new allocations. Note that tracemalloc attributes each block to the line that allocated it, not to the line that keeps it alive: the strings are created on line 11, and they survive only because the next line appends each one to the global leaky_data list. Together, these two lines point straight at leaky_data as the source of the leak. In a real-world application, this could be a cache with no eviction policy or an event listener that is never deregistered. Because tracemalloc has a non-trivial performance overhead, it’s best used during development and testing rather than in production.
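If the data really must be retained, one common remedy is to bound the container. The sketch below is one possible fix, not the only one: it swaps the unbounded global list for a collections.deque with a maxlen, so each append beyond the limit evicts the oldest entry and lets its string be freed. The MAX_RECENT limit and the recent_data name are illustrative assumptions; for caches keyed by function arguments, functools.lru_cache with a finite maxsize gives similar bounded behavior.

```python
from collections import deque

# Illustrative limit: keep only the most recent results.
MAX_RECENT = 1_000
recent_data = deque(maxlen=MAX_RECENT)


def process_request(data):
    processed_data = data * 10
    # When the deque is full, append() discards the oldest item, so the
    # container (and the memory it keeps alive) stops growing.
    recent_data.append(processed_data)


if __name__ == "__main__":
    for i in range(10000):
        process_request(str(i))
    print(len(recent_data))  # 1000
```

Re-running the two-snapshot comparison against this version is a quick way to confirm the growth has stopped.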

Navigating Performance in Asynchronous Python

The rise of asyncio has revolutionized I/O-bound applications in Python, but it has also introduced new challenges for performance profiling. Traditional profilers like cProfile measure wall-clock time, which is misleading in an async context. A coroutine might take 500ms to complete, but 490ms of that could be spent idly waiting for a network response, with only 10ms of actual CPU work. The real performance killer in an asyncio application is not waiting; it’s *blocking*—code that performs long-running CPU-bound work or synchronous I/O without yielding control back to the event loop.
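A minimal sketch makes the distinction concrete. Both hypothetical workers below wait for the same delay, but blocking_worker calls the synchronous time.sleep() while cooperative_worker awaits asyncio.sleep(). When three of each run concurrently under asyncio.gather(), the blocking version should take roughly the sum of the delays, because the loop cannot switch tasks while time.sleep() holds the thread, while the cooperative version should finish in roughly a single delay.

```python
import asyncio
import time


async def blocking_worker(delay):
    # time.sleep() holds the thread, so the event loop cannot run anything else.
    time.sleep(delay)


async def cooperative_worker(delay):
    # asyncio.sleep() suspends this coroutine and hands control back to the loop.
    await asyncio.sleep(delay)


async def run_concurrently(worker, count=3, delay=0.1):
    """Start `count` workers together and return the elapsed wall-clock time."""
    start = time.perf_counter()
    await asyncio.gather(*(worker(delay) for _ in range(count)))
    return time.perf_counter() - start


if __name__ == "__main__":
    print(f"blocking:    {asyncio.run(run_concurrently(blocking_worker)):.2f}s")
    print(f"cooperative: {asyncio.run(run_concurrently(cooperative_worker)):.2f}s")
```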

Using `asyncio` Debug Mode and `py-spy`

The first line of defense is asyncio’s built-in debug mode. Among other checks, it logs a warning whenever a single callback or task step runs longer than the loop’s slow_callback_duration (100 ms by default) before yielding, which is a strong indicator that the code is blocking the event loop.

You can enable it with an environment variable or in your code:

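```shell
# In your shell
export PYTHONASYNCIODEBUG=1

# Python's Development Mode also turns on asyncio debug mode
python -X dev your_script.py
```

```python
# Or in your code (Python 3.7+): asyncio.run() creates the loop with debug enabled
import asyncio

async def main():
    ...  # your application's entry point

asyncio.run(main(), debug=True)
```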

When enabled, you’ll see messages like: Executing <Task ...> took 0.152 seconds. This helps you zero in on problematic coroutines.
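The sketch below shows debug mode catching an accidental synchronous call. handler_with_blocking_call is a hypothetical coroutine that calls time.sleep(0.2); because that exceeds the loop’s slow_callback_duration, asyncio should log an "Executing <Task ...> took ... seconds" warning through the asyncio logger. The exact task description in the message will differ from run to run.

```python
import asyncio
import logging
import time


async def handler_with_blocking_call():
    # Simulates an accidental synchronous call inside a coroutine.
    time.sleep(0.2)


async def main():
    loop = asyncio.get_running_loop()
    # 0.1 s is already the default; it is set explicitly to show the knob.
    loop.slow_callback_duration = 0.1
    await handler_with_blocking_call()


if __name__ == "__main__":
    # Debug-mode warnings go through the "asyncio" logger at WARNING level.
    logging.basicConfig(level=logging.WARNING)
    asyncio.run(main(), debug=True)
```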

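For a more powerful and non-intrusive approach, we can turn to py-spy. It’s a sampling profiler written in Rust that inspects a running Python process from the outside, without requiring any code changes. Because it runs in a separate process and adds very little overhead to the program it samples, it is generally considered safe to use even in production, although attaching to an existing process usually requires elevated privileges (for example, sudo on macOS and on many Linux systems). Its most powerful feature is the ability to generate flame graphs, which are a fantastic way to visualize where time is being spent.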

To use it:

```shell
# Install py-spy
pip install py-spy

# Find the Process ID (PID) of your running Python app
ps aux | grep python

# Profile the running application for 30 seconds and save a flame graph
py-spy record -o profile.svg --pid <YOUR_PID> --duration 30
```

The resulting SVG file can be opened in a browser. In a flame graph, each function is drawn as a bar stacked on top of its caller, and a bar’s width is proportional to the number of samples in which that function was on the stack. By default py-spy leaves out samples from idle threads; if you record with the --idle flag, a healthy asyncio application shows a lot of time in the event loop’s selector call (for example, the select method in selectors.py), which indicates it’s efficiently waiting for I/O. If you see a wide bar corresponding to one of your application’s own functions, you’ve found a CPU-bound bottleneck that is likely blocking the event loop.
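To try this out, it helps to have a small program with a known hotspot. The sketch below is a hypothetical demo, not taken from a real application: cpu_heavy_sum() is synchronous, CPU-bound work called directly from a coroutine, so it blocks the loop every time it runs. Launching the file under py-spy (for example `py-spy record -o profile.svg -- python demo.py`, where demo.py is whatever you name it) should show cpu_heavy_sum as a wide bar beneath handle_request.

```python
import asyncio


def cpu_heavy_sum(n):
    """Synchronous, CPU-bound work: it never yields to the event loop."""
    total = 0
    for i in range(n):
        total += i * i
    return total


async def handle_request(work):
    await asyncio.sleep(0.01)  # stand-in for awaiting real network I/O
    # Calling blocking code directly inside a coroutine stalls the whole loop.
    return cpu_heavy_sum(work)


async def serve(requests, work):
    """Handle `requests` requests concurrently and return their results."""
    return await asyncio.gather(*(handle_request(work) for _ in range(requests)))


if __name__ == "__main__":
    results = asyncio.run(serve(requests=50, work=2_000_000))
    print(f"handled {len(results)} requests")
```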

From Profiling to Optimization: Actionable Strategies

Identifying a bottleneck is only half the battle. The next step is to implement effective optimizations. While every situation is unique, several high-level strategies consistently yield the best results.

Algorithm and Data Structure Choice

This is often the most impactful optimization you can make. No amount of micro-optimization can fix a fundamentally inefficient algorithm. Before trying anything else, ask yourself: Is there a better way to structure this problem?

- Searching: Are you repeatedly searching for items in a large list? A linear scan is O(n). Converting the list to a `set` or using a `dict` for lookups changes each lookup to O(1) on average, providing a massive speedup for large collections.

- Data Processing: Are you loading a 10GB CSV file into a pandas DataFrame to find a single value? Consider processing the file line-by-line or using a database, which is optimized for such queries.
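A quick timeit comparison illustrates the searching point. The collection size and repeat count are arbitrary assumptions and absolute timings depend on your machine, but for an element near the end of the list the set lookup should be orders of magnitude faster. Building the set is itself an O(n) step, so the conversion pays off when you perform many lookups against the same data.

```python
import timeit


def compare_lookups(size=100_000, repeats=200):
    """Time `in` on a list versus a set; return (list_seconds, set_seconds)."""
    items = list(range(size))
    items_set = set(items)  # one-time O(n) conversion
    target = size - 1       # worst case for the list: the last element

    list_seconds = timeit.timeit(lambda: target in items, number=repeats)
    set_seconds = timeit.timeit(lambda: target in items_set, number=repeats)
    return list_seconds, set_seconds


if __name__ == "__main__":
    list_s, set_s = compare_lookups()
    print(f"list: {list_s:.4f}s  set: {set_s:.6f}s")
```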

Caching and Memoization

If you have a pure function (one that always returns the same output for a given input) that is computationally expensive, don’t re-calculate the result every time. Cache it. Python’s standard library provides a dead-simple way to do this with the functools.lru_cache decorator.

```python
import functools
import time


@functools.lru_cache(maxsize=None)
def slow_fibonacci(n):
    """A recursive Fibonacci function that is very slow without caching."""
    if n < 2:
        return n
    return slow_fibonacci(n - 1) + slow_fibonacci(n - 2)


# First call: the cache is empty, so each value from 0 to 35 is computed once
# and stored. (Without the decorator, this call makes roughly 30 million
# recursive calls.)
start_time = time.perf_counter()
slow_fibonacci(35)
print(f"First call took: {time.perf_counter() - start_time:.4f}s")

# Second call: answered directly from the cache.
start_time = time.perf_counter()
slow_fibonacci(35)
print(f"Second call took: {time.perf_counter() - start_time:.4f}s")
```

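This technique, known as memoization, is incredibly effective for dynamic programming problems, recursive algorithms, and any function that is frequently called with the same arguments. Keep two constraints in mind: the arguments must be hashable, and with maxsize=None the cache never evicts anything, so a function called with many distinct arguments can grow its cache without limit, much like the leaky global list in the tracemalloc example. A finite maxsize keeps it bounded.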
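Counting calls makes the effect of the decorator concrete. The two helpers below are illustrative (they are not part of functools): each wraps the same recursion, once plain and once behind lru_cache, and counts how many times the function body actually runs. For n = 25 the plain version executes 242,785 calls, while the cached version computes each of the 26 values from 0 to 25 exactly once.

```python
import functools


def fib_naive(n):
    """Plain recursion; returns (result, number of function calls)."""
    calls = 0

    def fib(k):
        nonlocal calls
        calls += 1
        if k < 2:
            return k
        return fib(k - 1) + fib(k - 2)

    return fib(n), calls


def fib_memoized(n):
    """Same recursion behind lru_cache; returns (result, computations, CacheInfo)."""
    computations = 0

    @functools.lru_cache(maxsize=None)
    def fib(k):
        nonlocal computations
        computations += 1  # the body only runs on a cache miss
        if k < 2:
            return k
        return fib(k - 1) + fib(k - 2)  # recursive calls go through the cache

    return fib(n), computations, fib.cache_info()


if __name__ == "__main__":
    print(fib_naive(25))     # (75025, 242785)
    print(fib_memoized(25))
```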

Conclusion

In this deep dive, we’ve moved beyond the basics of CPU profiling to address the more complex performance challenges that arise in modern Python applications. We’ve learned that effective optimization requires a holistic view, encompassing not just CPU time but also memory usage and asynchronous behavior. By leveraging tools like memory-profiler and tracemalloc, we can systematically hunt down and eliminate memory bloat and leaks. For the asynchronous world, understanding how to use asyncio’s debug mode and external profilers like py-spy is key to ensuring a responsive, non-blocking application.

The ultimate principle of optimization remains unchanged: measure, don’t guess. Armed with these advanced techniques, you are now better equipped to measure the right things, ask the right questions, and apply targeted, high-impact optimizations. Continue to apply this data-driven approach, and you will be well on your way to building robust, efficient, and scalable Python systems.