Python News: Analyzing the Triumph of PyTorch in the AI Landscape

The PyTorch Ascendancy: A Deep Dive into Python’s Dominant ML Framework

The Python ecosystem is in a constant state of evolution, and nowhere is this more apparent than in the world of artificial intelligence and machine learning. For years, the landscape was dominated by a few key players, but a significant shift has occurred, cementing a new leader in the hearts and minds of developers and researchers. This latest chapter in Python news isn’t about a brand-new library, but about the remarkable rise of PyTorch and its journey to becoming the de facto standard for a vast range of AI applications. While TensorFlow, with its powerful Keras API, remains a formidable and excellent tool, the story of PyTorch’s ascent offers profound lessons in framework design, community building, and the importance of a “Pythonic” philosophy.

This article will dissect the technical and strategic reasons behind PyTorch’s widespread adoption. We will explore the core design principles that made it so appealing, demonstrate its power with practical code examples, and analyze its impact on the broader AI ecosystem. Understanding this shift is crucial for any Python developer working in or adjacent to the machine learning field, as it informs not only our choice of tools but also how we approach problem-solving in modern AI.

Section 1: The Rise of PyTorch: A Paradigm Shift in Python ML

To understand why PyTorch captured the community’s enthusiasm, we must first look back at the landscape it entered. The dominant framework at the time, TensorFlow 1.x, was incredibly powerful but came with a steep learning curve and a design philosophy that felt alien to many Python developers.

The Early Days: Static vs. Dynamic Computational Graphs

The core difference that set early PyTorch apart was its use of dynamic computational graphs (a “define-by-run” approach). In contrast, TensorFlow 1.x used static computational graphs (“define-and-run”).

- Static Graphs (TensorFlow 1.x): In this paradigm, you first define the entire architecture of the model (all the operations, inputs, and outputs) as a symbolic graph. The framework can then optimize this graph, and you feed data into it within a special `Session` to get results. While efficient for production deployment because the entire computation path is known beforehand, this made debugging and experimentation notoriously difficult. If you wanted to inspect an intermediate value, you couldn’t simply `print()` it; you had to fetch the tensor through the session or add a dedicated print operation (such as `tf.Print`) to the graph. This felt cumbersome and unintuitive.

- Dynamic Graphs (PyTorch): PyTorch took a different approach. The graph is built on-the-fly, as the code executes. Each line of code that performs a tensor operation is immediately evaluated. This “define-by-run” methodology means the control flow of a standard Python script (if-statements, for-loops, etc.) can dynamically alter the graph’s structure. This was a game-changer for researchers and developers. Debugging became as simple as using Python’s standard debugger (`pdb`) or inserting a `print()` statement anywhere in the model’s forward pass.
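
The “define-by-run” idea can be illustrated with a minimal sketch. The `DynamicDepthNet` class and its threshold are hypothetical, chosen only to show that an ordinary Python `if` and `for` decide, per call, how many operations end up in the graph.

```python
import torch
import torch.nn as nn


class DynamicDepthNet(nn.Module):
    """Hypothetical model whose depth depends on the input at run time."""

    def __init__(self):
        super().__init__()
        self.layer = nn.Linear(4, 4)

    def forward(self, x):
        # Ordinary Python control flow decides how many times the layer runs.
        steps = 1 if x.abs().mean() < 1.0 else 3
        for _ in range(steps):
            x = torch.relu(self.layer(x))
        return x, steps


torch.manual_seed(0)
model = DynamicDepthNet()

small_out, small_steps = model(torch.full((2, 4), 0.1))
large_out, large_steps = model(torch.full((2, 4), 5.0))
print(small_steps, large_steps)  # 1 3
```

Because the branch is plain Python, a breakpoint or `print()` placed inside `forward` sees concrete tensor values rather than symbolic placeholders.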

The “Pythonic” Philosophy

Beyond the graph paradigm, PyTorch was built to feel like a natural extension of Python. It integrated seamlessly with popular libraries like NumPy and behaved as one would expect a Python library to behave. This lowered the cognitive overhead significantly. Instead of learning a new way of thinking about programming (the declarative, graph-building style of TF 1.x), developers could leverage their existing Python skills. This focus on developer experience was a critical factor in its adoption. The framework didn’t just provide a Python API; it embraced the Python ethos of readability, simplicity, and “explicit is better than implicit.”
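
As a small illustration of the NumPy integration mentioned above, the following sketch converts between a NumPy array and a tensor. It assumes CPU tensors, where `torch.from_numpy` and `Tensor.numpy()` share the underlying memory instead of copying it.

```python
import numpy as np
import torch

array = np.array([1.0, 2.0, 3.0], dtype=np.float32)

# from_numpy wraps the existing buffer instead of copying it (CPU only).
tensor = torch.from_numpy(array)
tensor.mul_(2)  # an in-place update on the tensor...
print(array)    # ...is visible through the NumPy array: [2. 4. 6.]

# Tensors support familiar NumPy-style slicing.
print(tensor[1:].tolist())  # [4.0, 6.0]

# .numpy() goes the other way, again sharing memory on CPU.
back = tensor.numpy()
print(back.dtype)  # float32
```

The shared buffer is convenient but also means in-place edits on one side affect the other; calling `.clone()` or `.copy()` gives an independent copy when that is not wanted.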

Section 2: Technical Deep Dive: Why Developers Flocked to PyTorch

The philosophical appeal of PyTorch was backed by a clean, intuitive, and powerful API. Let’s explore the key technical components that made developers’ lives easier and their research more agile.

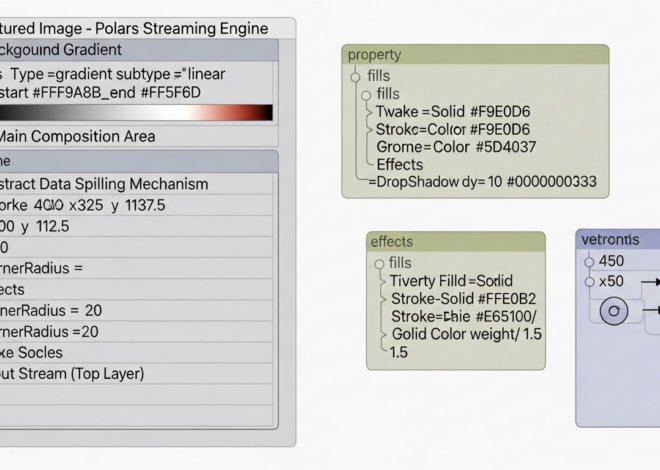

Imperative Programming and Effortless Debugging

The “define-by-run” nature of PyTorch means that building and training a model feels like writing a standard imperative program. The training loop is a simple Python `for` loop, not an abstract concept hidden behind a framework’s API. This makes it incredibly easy to understand, customize, and debug.

Consider a simple linear regression model. The training loop is explicit and transparent.

    import torch
    import torch.nn as nn

    # Sample Data
    X = torch.tensor([[1.0], [2.0], [3.0], [4.0]], dtype=torch.float32)
    Y = torch.tensor([[2.0], [4.0], [6.0], [8.0]], dtype=torch.float32)

    # A simple linear model
    class LinearRegression(nn.Module):
        def __init__(self):
            super().__init__()
            self.linear = nn.Linear(1, 1)

        def forward(self, x):
            return self.linear(x)

    model = LinearRegression()
    loss_fn = nn.MSELoss()
    optimizer = torch.optim.SGD(model.parameters(), lr=0.01)

    # Training Loop
    for epoch in range(100):
        # Forward pass
        y_pred = model(X)
        loss = loss_fn(y_pred, Y)

        # The magic of dynamic graphs: you can inspect any tensor at any time
        if epoch % 20 == 0:
            print(f"Epoch {epoch}: Loss = {loss.item():.4f}, Weight = {model.linear.weight.item():.4f}")

        # Backward pass and optimization
        optimizer.zero_grad()
        loss.backward()
        optimizer.step()

In this example, we can easily print the loss or model weights within the loop. This immediate feedback loop is invaluable for research and development, a stark contrast to the opaque sessions of older frameworks.
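
The same immediacy applies to gradients. The sketch below is a hand-checkable toy case, separate from the model above, with illustrative values: after `backward()`, the gradient sits on the tensor as an ordinary attribute.

```python
import torch

# A single trainable weight; requires_grad asks autograd to track it.
w = torch.tensor(1.0, requires_grad=True)
x = torch.tensor(3.0)
y_true = torch.tensor(6.0)

loss = (w * x - y_true) ** 2  # (1*3 - 6)^2 = 9
loss.backward()

# d(loss)/dw = 2 * (w*x - y_true) * x = 2 * (-3) * 3 = -18
print(loss.item(), w.grad.item())  # 9.0 -18.0
```

Comparing a gradient against a value worked out by hand like this is a quick sanity check when a custom loss or layer misbehaves.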

The Power and Simplicity of `nn.Module`

PyTorch’s core abstraction for building models is the `nn.Module` class. It provides a clean, object-oriented, and composable way to define any neural network architecture. By subclassing `nn.Module`, you can create custom layers or complex models with ease.

Here’s how you might define a simple Convolutional Neural Network (CNN) for image classification. Notice how the `__init__` method defines the layers, and the `forward` method defines how data flows through them.

    import torch
    import torch.nn as nn
    import torch.nn.functional as F

    class SimpleCNN(nn.Module):
        def __init__(self, num_classes=10):
            super().__init__()
            # 1 input image channel, 6 output channels, 5x5 square convolution
            self.conv1 = nn.Conv2d(1, 6, 5)
            self.pool = nn.MaxPool2d(2, 2)
            self.conv2 = nn.Conv2d(6, 16, 5)
            # Fully connected layers
            # 4*4 is the spatial size after conv/pool for 28x28 inputs
            self.fc1 = nn.Linear(16 * 4 * 4, 120)
            self.fc2 = nn.Linear(120, 84)
            self.fc3 = nn.Linear(84, num_classes)

        def forward(self, x):
            # Apply convolutions, activation functions, and pooling
            x = self.pool(F.relu(self.conv1(x)))
            x = self.pool(F.relu(self.conv2(x)))
            # Flatten the tensor for the fully connected layers
            x = torch.flatten(x, 1)  # flatten all dimensions except batch
            x = F.relu(self.fc1(x))
            x = F.relu(self.fc2(x))
            x = self.fc3(x)
            return x

    # Instantiate the model
    cnn_model = SimpleCNN()
    print(cnn_model)
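
The `16 * 4 * 4` input size of the first fully connected layer depends on the image size. A quick way to check it is to push a dummy batch through the same convolution and pooling settings, as in this sketch. The 28x28 single-channel input is an assumption (the article does not name a dataset).

```python
import torch
import torch.nn as nn

# Same convolution and pooling settings as the example above.
features = nn.Sequential(
    nn.Conv2d(1, 6, 5), nn.ReLU(), nn.MaxPool2d(2, 2),
    nn.Conv2d(6, 16, 5), nn.ReLU(), nn.MaxPool2d(2, 2),
)

# Assumed input: a batch of 8 single-channel 28x28 images.
dummy = torch.randn(8, 1, 28, 28)
out = features(dummy)
print(out.shape)                    # torch.Size([8, 16, 4, 4])
print(torch.flatten(out, 1).shape)  # torch.Size([8, 256])
```

Each 5x5 convolution without padding trims 4 pixels per side length and each pooling step halves it: 28 → 24 → 12 → 8 → 4. A different input resolution would require a different `fc1` input size.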

Elegant Data Handling with `Dataset` and `DataLoader`

Machine learning begins with data, and PyTorch provides a robust and intuitive API for handling it. The `torch.utils.data.Dataset` and `torch.utils.data.DataLoader` classes abstract away the complexities of data loading, batching, shuffling, and parallel processing.

You can create a custom `Dataset` for any data source by simply implementing the `__len__` and `__getitem__` methods. The `DataLoader` then wraps your `Dataset` and provides an efficient iterator for your training loop.

    import os

    import torch
    from torch.utils.data import Dataset, DataLoader

    class CustomImageDataset(Dataset):
        def __init__(self, annotations_file, img_dir, transform=None):
            # In a real scenario, this would load from a CSV or similar
            self.img_labels = [("cat.jpg", 0), ("dog.jpg", 1), ("cat2.jpg", 0)]  # Dummy data
            self.img_dir = img_dir
            self.transform = transform

        def __len__(self):
            return len(self.img_labels)

        def __getitem__(self, idx):
            img_path = os.path.join(self.img_dir, self.img_labels[idx][0])
            # image = read_image(img_path)  # Placeholder for actual image loading
            image = torch.randn(3, 224, 224)  # Dummy image tensor
            label = self.img_labels[idx][1]
            if self.transform:
                image = self.transform(image)
            return image, label

    # Usage
    # Assume 'path/to/images' exists with dummy files
    # dataset = CustomImageDataset(annotations_file="labels.csv", img_dir="path/to/images")
    # dataloader = DataLoader(dataset, batch_size=32, shuffle=True, num_workers=4)

    # In your training loop, you'd simply iterate over the dataloader:
    # for images, labels in dataloader:
    #     # Your training code here...
    #     pass
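
For a version that runs without any files on disk, here is a minimal sketch using a hypothetical in-memory `SquaresDataset`. It shows the two required methods and how `DataLoader` splits the data into batches, including a smaller final batch.

```python
import torch
from torch.utils.data import Dataset, DataLoader


class SquaresDataset(Dataset):
    """Hypothetical in-memory dataset: pairs (x, x**2) for x in 0..n-1."""

    def __init__(self, n):
        self.x = torch.arange(n, dtype=torch.float32).unsqueeze(1)
        self.y = self.x ** 2

    def __len__(self):
        return len(self.x)

    def __getitem__(self, idx):
        return self.x[idx], self.y[idx]


dataset = SquaresDataset(10)
# num_workers=0 keeps loading in the main process, which is simplest for a demo.
loader = DataLoader(dataset, batch_size=4, shuffle=False, num_workers=0)

batch_shapes = [tuple(xb.shape) for xb, yb in loader]
print(batch_shapes)  # [(4, 1), (4, 1), (2, 1)]
```

Passing `drop_last=True` to `DataLoader` would discard the final short batch, which some training setups prefer.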

Section 3: The Ecosystem Effect: Fostering Community and Innovation

A framework is only as strong as its community and the ecosystem built around it. PyTorch’s developer-friendly nature created a flywheel effect that propelled it to the forefront of AI research and, eventually, production.

A Research-First Mentality

The flexibility of dynamic graphs made PyTorch the darling of the academic and research communities. When developing novel architectures or models with variable-length or otherwise dynamic inputs (common in NLP), the ability to use standard Python control flow was indispensable. As a result, a growing share of new research papers began releasing their code in PyTorch. Practitioners who wanted to experiment with state-of-the-art models often found that the reference implementation was in PyTorch, so they adopted it too. This pipeline from research to practice rapidly expanded the PyTorch user base.

The Proliferation of High-Level Libraries

PyTorch’s simplicity and “hackability” made it the perfect foundation for other libraries. This led to an explosion of powerful tools that further simplified the ML workflow. The most prominent example is Hugging Face Transformers, which democratized access to large language models and treats PyTorch as its primary backend. Other key libraries include:

- PyTorch Lightning: A wrapper that organizes PyTorch code and removes boilerplate, allowing developers to focus on the research and not the engineering.

- fast.ai: A library that makes deep learning best practices easily accessible, built on top of PyTorch.

This rich ecosystem became one of PyTorch’s most significant moats. For many use cases, especially in NLP, the path of least resistance now runs directly through the PyTorch ecosystem.

From Research to Production

An early criticism of PyTorch was that it was great for research but not “production-ready” like TensorFlow. However, this has changed dramatically. Tools like TorchScript allow PyTorch models to be serialized into a graph-based format that can be run in high-performance environments (like C++) where Python is not available. Furthermore, TorchServe provides a flexible and easy-to-use tool for deploying PyTorch models at scale. Today, major companies like OpenAI, Tesla, and Microsoft use PyTorch heavily in their production systems, debunking the “research-only” myth.
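
A minimal sketch of the TorchScript workflow described above: compile a module with `torch.jit.script`, serialize it, and load it back. The `Gate` module is hypothetical, and an in-memory buffer stands in for a file on disk.

```python
import io

import torch
import torch.nn as nn


class Gate(nn.Module):
    """Hypothetical module with data-dependent control flow."""

    def forward(self, x: torch.Tensor) -> torch.Tensor:
        if x.sum() > 0:
            return x * 2
        return -x


# torch.jit.script compiles the module, including the if-statement.
scripted = torch.jit.script(Gate())

# Serialize to an in-memory buffer; a file path would work as well.
buffer = io.BytesIO()
torch.jit.save(scripted, buffer)
buffer.seek(0)

restored = torch.jit.load(buffer)
print(restored(torch.tensor([1.0, 2.0])).tolist())    # [2.0, 4.0]
print(restored(torch.tensor([-1.0, -2.0])).tolist())  # [1.0, 2.0]
```

The saved artifact no longer depends on the Python class definition, which is what allows it to be loaded from a non-Python runtime.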

Section 4: The Modern ML Landscape: PyTorch, TensorFlow 2.x, and JAX

The competitive landscape has forced all frameworks to evolve. The “framework wars” have cooled, leading to a convergence of features, but important distinctions remain.

TensorFlow’s Rebirth with Keras and Eager Execution

Credit must be given to the TensorFlow team for recognizing the community’s needs. With TensorFlow 2.x, they made two crucial changes:

- Eager Execution by Default: This is essentially TensorFlow’s version of dynamic graphs, making it behave much more like PyTorch out of the box.

- Keras as the High-Level API: Keras, known for its user-friendly and modular API, became the official high-level interface for TensorFlow.

These changes made TensorFlow significantly more approachable and closed the usability gap with PyTorch. TensorFlow continues to have a very strong production ecosystem with tools like TensorFlow Extended (TFX) for end-to-end ML pipelines and TensorFlow Lite for on-device and edge deployment.
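
To make the eager-execution point concrete, here is a small sketch assuming a TensorFlow 2.x installation. Values are computed immediately and gradients come from `tf.GradientTape`, with no `Session` involved.

```python
import tensorflow as tf

# Operations run immediately; no Session or placeholder is needed.
x = tf.constant(3.0)
print(tf.executing_eagerly())  # True by default in TensorFlow 2.x

# GradientTape records operations as they execute, then differentiates.
with tf.GradientTape() as tape:
    tape.watch(x)  # constants are not tracked unless watched
    y = x * x

grad = tape.gradient(y, x)
print(float(y), float(grad))  # 9.0 6.0
```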

The Rise of JAX: The New Contender

A new player gaining significant traction, especially in the high-performance research community, is Google’s JAX. JAX is not a full-fledged ML framework like PyTorch or TensorFlow. Instead, it is a library for high-performance numerical computing and machine learning research. Its key strength lies in its composable function transformations: `grad` (automatic differentiation), `jit` (just-in-time compilation), and `vmap`/`pmap` (for automatic vectorization and parallelization). For researchers pushing the limits of performance on large-scale models, JAX offers unparalleled speed and flexibility.
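
The transformations named above compose as ordinary functions. The following sketch applies `grad`, `jit`, and `vmap` to a hypothetical toy `loss` function; the values are chosen so the gradient can be checked by hand.

```python
import jax
import jax.numpy as jnp


def loss(w, x):
    """Hypothetical scalar loss: squared value of a dot product."""
    return jnp.dot(w, x) ** 2


w = jnp.array([1.0, 2.0])
x = jnp.array([3.0, 4.0])

# grad: derivative with respect to the first argument.
# d/dw (w.x)^2 = 2 * (w.x) * x = 2 * 11 * [3, 4] = [66, 88]
grad_fn = jax.grad(loss)
print(grad_fn(w, x))

# jit: compile the gradient function with XLA.
fast_grad = jax.jit(grad_fn)

# vmap: map over a batch of x while holding w fixed.
batch_x = jnp.stack([x, 2 * x])
batched = jax.vmap(fast_grad, in_axes=(None, 0))(w, batch_x)
print(batched.shape)  # (2, 2)
```

Each transformation takes a function and returns a function, so they can be stacked in any order that makes sense for the workload.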

Recommendations for Today’s Developer

- PyTorch: The recommended default for most new projects, especially in NLP and computer vision research. Its vast ecosystem, strong community support, and intuitive API make it the fastest way to get from idea to implementation.

- TensorFlow/Keras: An excellent and mature choice, particularly if you are deploying to mobile/edge devices (via TF Lite) or are heavily invested in the Google Cloud ecosystem. Its API is now very polished and user-friendly.

- JAX: The go-to for performance-critical research and when you need fine-grained control over differentiation, compilation, and parallelization. It has a steeper learning curve but offers maximum control and speed.

Conclusion: Lessons from the Pythonic Path

The story of PyTorch’s rise is a pivotal piece of recent Python news and a testament to the power of developer experience. By embracing a “Pythonic” philosophy and prioritizing an intuitive, flexible, and debuggable interface, PyTorch won over the research community. This created a virtuous cycle: cutting-edge research was published in PyTorch, which drove practitioners to adopt it, which in turn fostered a rich ecosystem of powerful tools that solidified its position as a leader. While TensorFlow has adapted admirably and JAX pushes the boundaries of performance, the lessons from PyTorch’s success remain clear. In the fast-moving world of AI, the frameworks that empower developers to iterate quickly, debug easily, and stand on the shoulders of a vibrant community will ultimately lead the way.