FastAPI Performance Optimization Strategies

FastAPI has rapidly become a go-to framework for building high-performance APIs with Python, celebrated for its speed, developer-friendly ergonomics, and automatic documentation. Built upon the shoulders of giants like Starlette for its ASGI (Asynchronous Server Gateway Interface) capabilities and Pydantic for data validation, FastAPI offers impressive out-of-the-box performance. However, as applications scale and move into demanding production environments, relying on default configurations is not enough. Achieving peak performance requires a deeper understanding of its asynchronous core and a strategic approach to optimization.

This comprehensive guide moves beyond the basics to explore proven, advanced techniques for optimizing your FastAPI applications. We will dissect common bottlenecks, from database interactions and blocking I/O to server configuration and caching strategies. Whether you’re building a new service from scratch or looking to squeeze more performance out of an existing application, these strategies will provide you with the actionable insights and practical code examples needed to build truly fast, scalable, and resilient APIs. By mastering these principles, you can ensure your application not only meets but exceeds performance expectations under real-world load.

The Foundation: Mastering Async and Await

The cornerstone of FastAPI’s performance is its native support for asynchronous programming via Python’s asyncio library. However, simply adding the async keyword to your path operation functions is not a magic bullet. A misunderstanding of how the event loop works is one of the most common causes of performance degradation in FastAPI applications.

Understanding the Event Loop

At its core, asyncio uses a single-threaded event loop to manage and execute multiple tasks concurrently. When an async function encounters an I/O-bound operation (like a network request or a database query) and uses the await keyword, it yields control back to the event loop. The loop can then run other tasks while waiting for the I/O operation to complete. This cooperative multitasking model allows a single process to handle thousands of concurrent connections efficiently, which is impossible with traditional synchronous code without resorting to multi-threading or multi-processing.

The critical pitfall here is blocking the event loop. If you execute a long-running, synchronous (blocking) function within an async def endpoint, the entire event loop freezes. No other tasks can run, and all other incoming requests will be stalled until the blocking operation finishes. This completely negates the benefits of using an asynchronous framework.
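To see the difference in isolation, the following standard-library sketch (no FastAPI involved) starts three 0.2-second waits concurrently, first with `await asyncio.sleep()` and then with a blocking `time.sleep()` inside the coroutines. The task names are purely illustrative. The first timing should come out close to 0.2 s, while the second should be at least 0.6 s, because each blocking call stalls the loop until it returns.

```python
import asyncio
import time


async def non_blocking_task(delay: float) -> None:
    # 'await' hands control back to the event loop while "waiting for I/O"
    await asyncio.sleep(delay)


async def blocking_task(delay: float) -> None:
    # time.sleep() never yields, so the whole event loop freezes meanwhile
    time.sleep(delay)


async def time_three(task) -> float:
    # Start three copies of the task concurrently and measure wall-clock time
    start = time.perf_counter()
    await asyncio.gather(*(task(0.2) for _ in range(3)))
    return time.perf_counter() - start


async def main() -> tuple:
    concurrent = await time_three(non_blocking_task)
    serialized = await time_three(blocking_task)
    print(f"awaiting asyncio.sleep(): {concurrent:.2f}s")
    print(f"calling time.sleep():     {serialized:.2f}s")
    return concurrent, serialized


if __name__ == "__main__":
    asyncio.run(main())
```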

The Golden Rule: async def for I/O, def for CPU

FastAPI is intelligent enough to handle standard synchronous functions (defined with def) differently from asynchronous ones. When you declare a path operation with a regular def, FastAPI runs it in an external thread pool, preventing it from blocking the main event loop. This leads to a simple but powerful rule:

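- Use `async def` when your function performs I/O-bound operations (e.g., calling other APIs, database access, reading files) and you are using `async`-compatible libraries.
- Use `def` when your function is CPU-bound (e.g., complex calculations, data processing, machine learning inference) or when you must use a legacy, synchronous I/O library.

One caveat for the CPU-bound case: the thread pool keeps the event loop free, but because of Python’s Global Interpreter Lock (GIL), threads do not run pure-Python computation in parallel. Very heavy CPU work is usually better moved to separate processes or a background worker.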
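The rule works because of how FastAPI dispatches path operations. The sketch below is a deliberately simplified model of that behavior built only on the standard library; `dispatch`, `sync_handler`, and `async_handler` are hypothetical names, and FastAPI’s real implementation (which goes through Starlette and AnyIO) is more involved. Coroutine functions are awaited directly on the event loop, while plain functions are handed to a worker thread with `asyncio.to_thread` (Python 3.9+).

```python
import asyncio
import inspect
import threading
import time


async def dispatch(handler, *args):
    """Simplified model (NOT FastAPI's source) of how a path operation is invoked."""
    if inspect.iscoroutinefunction(handler):
        # async def: run directly on the event loop
        return await handler(*args)
    # plain def: run in a worker thread so blocking calls cannot stall the loop
    return await asyncio.to_thread(handler, *args)


def sync_handler(x: int) -> dict:
    time.sleep(0.1)  # blocking, but harmless here because it runs in a worker thread
    return {"value": x, "thread": threading.current_thread().name}


async def async_handler(x: int) -> dict:
    await asyncio.sleep(0.1)  # non-blocking wait on the event loop
    return {"value": x, "thread": threading.current_thread().name}


async def main() -> list:
    # Both handlers make progress at the same time
    return await asyncio.gather(dispatch(sync_handler, 1), dispatch(async_handler, 2))


if __name__ == "__main__":
    for result in asyncio.run(main()):
        print(result)
```

Running it shows the synchronous handler reporting a worker thread’s name, while the asynchronous handler reports the thread that runs the event loop.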

Consider this example of correctly using async def for an I/O-bound task:

```python
import httpx
from fastapi import FastAPI

app = FastAPI()

# Use an async-compatible HTTP client
async_client = httpx.AsyncClient()


@app.get("/call-external-api")
async def get_external_data():
    # 'await' yields control to the event loop while waiting for the network response
    response = await async_client.get("https://api.example.com/data")
    return response.json()
```

Offloading Blocking Code with `run_in_threadpool`

What if you need to use a library that doesn’t support asyncio, such as a traditional database driver or an image processing library like Pillow? You can’t just call its blocking functions from within an async def endpoint. The solution is to explicitly offload the blocking call to a separate thread pool.

FastAPI makes this easy with the run_in_threadpool utility from starlette.concurrency. This function takes a regular synchronous function and its arguments, executes it in a worker thread, and returns an awaitable result.

```python
import time

from fastapi import FastAPI
from starlette.concurrency import run_in_threadpool

app = FastAPI()


def synchronous_blocking_io_call(data: str):
    # Simulate a blocking operation, like writing to a file or a legacy DB call
    print(f"Starting blocking task for: {data}")
    time.sleep(2)
    print(f"Finished blocking task for: {data}")
    return {"message": f"Processed {data} synchronously"}


@app.get("/process-sync")
async def process_sync_endpoint(data: str = "item1"):
    # The event loop is not blocked here:
    # Starlette runs the function in a worker thread and we await the result.
    result = await run_in_threadpool(synchronous_blocking_io_call, data=data)
    return result
```

By using run_in_threadpool, you safely integrate blocking code into your async application, ensuring the event loop remains responsive and can continue serving other requests.
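Several offloaded calls can also run at the same time. The standard-library sketch below uses `asyncio.to_thread` (Python 3.9+), which plays the same role as Starlette’s `run_in_threadpool` outside a Starlette app; `legacy_blocking_call` and `heartbeat` are hypothetical helpers. The heartbeat coroutine keeps ticking every 50 ms while three 0.3-second blocking calls run in worker threads, which shows that the loop stays responsive.

```python
import asyncio
import time


def legacy_blocking_call(item: str) -> str:
    # Hypothetical stand-in for a synchronous driver call or file write
    time.sleep(0.3)
    return f"processed {item}"


async def heartbeat(ticks: list, stop: asyncio.Event) -> None:
    # Appends a timestamp every 50 ms; long gaps would mean the loop was blocked
    while not stop.is_set():
        ticks.append(time.perf_counter())
        await asyncio.sleep(0.05)


async def main() -> tuple:
    ticks: list = []
    stop = asyncio.Event()
    beat = asyncio.create_task(heartbeat(ticks, stop))
    # asyncio.to_thread is the standard-library counterpart of run_in_threadpool
    results = await asyncio.gather(
        *(asyncio.to_thread(legacy_blocking_call, name) for name in ("a", "b", "c"))
    )
    stop.set()
    await beat
    return results, len(ticks)


if __name__ == "__main__":
    results, tick_count = asyncio.run(main())
    print(results)
    print(f"heartbeat ticks while the blocking calls ran: {tick_count}")
```

Keep in mind that both thread pools are bounded in size, so a flood of slow blocking calls can still queue up; that is one more reason to prefer native async libraries where they exist.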

Taming the Database: Your Application’s Slowest Link

For most web applications, the database is the primary performance bottleneck. Optimizing how your FastAPI application communicates with the database is therefore one of the most impactful things you can do. This involves choosing the right tools and implementing best practices for connection management.

Choosing the Right Asynchronous Database Driver

To fully benefit from FastAPI’s async capabilities, you must use a database driver that is compatible with asyncio. Using a synchronous driver (like the standard psycopg2 for PostgreSQL or mysql-connector-python) inside an async def function will block the event loop, creating a severe performance bottleneck.

Here are some popular async drivers for common databases:

- PostgreSQL: `asyncpg` is widely considered the fastest and most robust option.
- MySQL/MariaDB: `aiomysql` is a solid choice.
- MongoDB: `motor` has long been the official async driver, and recent PyMongo releases also ship a native async API.
- SQLite: `aiosqlite` provides async support.

SQLAlchemy 2.0 also provides first-class asyncio support (through its `sqlalchemy.ext.asyncio` extension), which lets you use its powerful Core and ORM components with async SQL drivers such as `asyncpg`, `aiomysql`, and `aiosqlite`.

Implementing Asynchronous Connection Pooling

Establishing a new database connection for every incoming request is resource-intensive and slow. It involves network handshakes, authentication, and process allocation on the database server. A connection pool mitigates this by creating and maintaining a set of ready-to-use database connections. When your application needs to talk to the database, it borrows a connection from the pool and returns it when done. This dramatically reduces the latency of database operations.
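The mechanism can be illustrated with a toy pool built on `asyncio.Queue`. This is not how `asyncpg` implements its pool; `FakeConnection` and `ToyPool` are hypothetical classes that only count how many connections are opened. Fifty concurrent queries share three connections, so only three connections are ever created, and later callers simply wait until one is returned.

```python
import asyncio


class FakeConnection:
    """Hypothetical stand-in for a real database connection."""

    opened = 0  # class-level counter of connections ever created

    def __init__(self) -> None:
        FakeConnection.opened += 1  # a real driver would do TCP, TLS and auth here

    async def fetch(self, sql: str) -> str:
        await asyncio.sleep(0.01)  # pretend network round-trip
        return f"result of {sql}"


class ToyPool:
    """Fixed-size pool: connections are opened once, then borrowed and returned."""

    def __init__(self, size: int) -> None:
        self._idle = asyncio.Queue()
        for _ in range(size):
            self._idle.put_nowait(FakeConnection())

    async def fetch(self, sql: str) -> str:
        conn = await self._idle.get()  # waits here if every connection is in use
        try:
            return await conn.fetch(sql)
        finally:
            self._idle.put_nowait(conn)  # always hand the connection back


async def main() -> tuple:
    before = FakeConnection.opened
    pool = ToyPool(size=3)  # create the pool inside the running event loop
    results = await asyncio.gather(*(pool.fetch(f"SELECT {i}") for i in range(50)))
    return len(results), FakeConnection.opened - before


if __name__ == "__main__":
    served, opened = asyncio.run(main())
    print(f"served {served} queries using {opened} connections")
```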

Here is an example of setting up an asyncpg connection pool and managing its lifecycle with FastAPI’s lifespan handler, the recommended replacement for the older startup and shutdown events:

```python
from contextlib import asynccontextmanager

import asyncpg
from fastapi import Depends, FastAPI, HTTPException

DB_POOL = None


# FastAPI's lifespan context manager replaces separate startup/shutdown events
@asynccontextmanager
async def lifespan(app: FastAPI):
    # On startup: open the pool once for the whole process
    global DB_POOL
    DB_POOL = await asyncpg.create_pool(
        user="user", password="password", database="db", host="127.0.0.1",
        min_size=5, max_size=20,  # Configure pool size based on expected load
    )
    print("Database connection pool created.")
    yield
    # On shutdown: close every pooled connection
    await DB_POOL.close()
    print("Database connection pool closed.")


app = FastAPI(lifespan=lifespan)


async def get_db_connection():
    # Borrow a connection for the duration of the request; it is returned automatically
    async with DB_POOL.acquire() as connection:
        yield connection


@app.get("/users/{user_id}")
async def get_user(user_id: int, db: asyncpg.Connection = Depends(get_db_connection)):
    # Parameterized query ($1) keeps user input out of the SQL text
    user = await db.fetchrow("SELECT * FROM users WHERE id = $1", user_id)
    if user is None:
        raise HTTPException(status_code=404, detail="User not found")
    return dict(user)  # asyncpg.Record -> plain dict for JSON serialization
```

ORM vs. Core: A Performance Trade-off

Object-Relational Mappers (ORMs) like SQLAlchemy ORM provide a high-level, object-oriented abstraction over your database, which can significantly speed up development. However, this convenience can come with a performance cost. The ORM adds a layer of abstraction that might generate less-than-optimal SQL queries or incur overhead from object hydration (converting database rows into Python objects).

- SQLAlchemy ORM: Excellent for developer productivity. Ideal for most CRUD operations. With SQLAlchemy 2.0’s async support, it’s a very viable option.

- SQLAlchemy Core: A lower-level SQL expression language. It gives you more direct control over the SQL being generated, often resulting in more performant queries. It’s a great middle ground between the full ORM and raw SQL.

- Raw SQL (with `asyncpg`): Usually offers the best performance because there is no abstraction layer. However, it’s more verbose, prone to SQL injection if not handled carefully (use parameterized queries!), and harder to maintain.
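The advice to use parameterized queries is easy to demonstrate with the standard-library `sqlite3` module, which uses `?` placeholders where `asyncpg` uses `$1`, `$2`, and so on. The table and the `find_user_*` helpers below are illustrative only. Splicing input into the SQL string lets a crafted value rewrite the query, while passing it as a parameter keeps it as plain data.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT)")
conn.executemany("INSERT INTO users (name) VALUES (?)", [("alice",), ("bob",)])


def find_user_unsafe(name: str) -> list:
    # DON'T: user input is pasted into the SQL text and can change its meaning
    return conn.execute(f"SELECT id, name FROM users WHERE name = '{name}'").fetchall()


def find_user_safe(name: str) -> list:
    # DO: the value is sent separately from the SQL text as a bound parameter
    return conn.execute("SELECT id, name FROM users WHERE name = ?", (name,)).fetchall()


malicious = "nobody' OR '1'='1"
print(find_user_unsafe(malicious))  # every row in the table
print(find_user_safe(malicious))    # [] (no user is literally named that)
```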

Best Practice: Start with the ORM for rapid development. Use a profiling tool to identify slow queries. For those specific, performance-critical endpoints, consider rewriting the queries using SQLAlchemy Core or even raw SQL to achieve maximum performance.

Production-Grade Tuning: Beyond the Code

Writing efficient code is only half the battle. How you deploy and run your FastAPI application has a massive impact on its performance and stability. This section covers the critical aspects of production deployment.

Choosing and Configuring Your ASGI Server

FastAPI is an ASGI application, which means it needs an ASGI server to run. The most common choice is Uvicorn, which is incredibly fast. For production, however, you typically run Uvicorn under a process manager like Gunicorn.

- Uvicorn: A lightning-fast ASGI server that can use `uvloop` and `httptools` when they are installed (for example via `uvicorn[standard]`). It’s perfect for development and can be used in production.

- Gunicorn: A mature, battle-tested process manager for Python web applications. It can manage multiple Uvicorn worker processes, handle graceful restarts, and improve fault tolerance.

A standard production setup uses Gunicorn to manage Uvicorn workers. This allows you to leverage multiple CPU cores on your server.

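Example Command:

```bash
gunicorn my_app.main:app --workers 4 --worker-class uvicorn.workers.UvicornWorker --bind 0.0.0.0:8000
```

Worker Configuration: The number of workers (`--workers` or `-w`) is a critical tuning parameter. A common starting point is `(2 * number_of_cpu_cores) + 1`, so for a 4-core machine you might start with 9 workers. Treat this only as a starting point and benchmark your specific application under load to find the optimal number. Note also that recent Uvicorn releases deprecate the `uvicorn.workers` module in favor of the separate `uvicorn-worker` package, and that Uvicorn can run multiple worker processes on its own via its `--workers` option.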
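Instead of repeating flags on the command line, the same settings can live in a Gunicorn configuration file, which is plain Python. The sketch below computes the `(2 * cores) + 1` starting point with `multiprocessing.cpu_count()` and lets a hypothetical `GUNICORN_WORKERS` environment variable override it while you benchmark. Gunicorn reads `./gunicorn.conf.py` by default, or you can point to the file explicitly, e.g. `gunicorn -c gunicorn.conf.py my_app.main:app`.

```python
# gunicorn.conf.py (Gunicorn loads ./gunicorn.conf.py by default, or pass -c <path>)
import multiprocessing
import os

# Rule-of-thumb starting point: (2 * CPU count) + 1.
# cpu_count() reports logical CPUs, which may differ from physical cores or container limits.
default_workers = multiprocessing.cpu_count() * 2 + 1

# GUNICORN_WORKERS is a hypothetical variable name used to override the value while benchmarking
workers = int(os.environ.get("GUNICORN_WORKERS", default_workers))
worker_class = "uvicorn.workers.UvicornWorker"  # same worker class as the command above
bind = "0.0.0.0:8000"
```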

Implementing Caching Strategies

Caching is a powerful technique to reduce latency and decrease the load on your database and other backend services. By storing the results of expensive operations (like complex database queries or calls to external APIs) in a fast in-memory store, you can serve subsequent requests almost instantaneously.

- In-memory Cache: Simple to implement using a Python dictionary or a library like `cachetools`. The main drawback is that the cache is local to each worker process and is lost on restart.

- Distributed Cache (Redis/Memcached): For multi-worker or multi-server deployments, a distributed cache like Redis is essential. It provides a shared cache accessible by all processes. Libraries like `fastapi-cache2` integrate seamlessly with FastAPI and support backends like Redis.

Here’s a simple example using `fastapi-cache2` with a Redis backend:

```python
import asyncio
from contextlib import asynccontextmanager

from fastapi import FastAPI
from fastapi_cache import FastAPICache
from fastapi_cache.backends.redis import RedisBackend
from fastapi_cache.decorator import cache
from redis import asyncio as aioredis


@asynccontextmanager
async def lifespan(app: FastAPI):
    # Connect to Redis once at startup and register it as the cache backend
    redis = aioredis.from_url("redis://localhost")
    FastAPICache.init(RedisBackend(redis), prefix="fastapi-cache")
    yield


app = FastAPI(lifespan=lifespan)


@app.get("/data")
@cache(expire=60)  # Cache the result of this endpoint for 60 seconds
async def get_data():
    # Simulate an expensive database query
    await asyncio.sleep(2)
    return {"message": "This is some expensive data"}
```

Middleware: Powerful but Use with Caution

FastAPI’s middleware allows you to run code before and after each request is processed. It’s useful for logging, authentication, compression, and adding headers. However, remember that middleware runs on every single request. A poorly written piece of middleware, especially one that performs synchronous blocking I/O, can become a global performance bottleneck, slowing down your entire application. Always ensure your custom middleware is fully asynchronous if it needs to perform any I/O operations.

Measuring for Success: Profiling and Monitoring

You cannot optimize what you cannot measure. Blindly applying “performance tips” without understanding where your application is actually slow is a waste of time. Profiling and monitoring are non-negotiable for serious performance tuning.

Identifying Bottlenecks with Profilers

Profilers are tools that analyze your code’s execution and show you which functions are consuming the most time and resources.

- `py-spy`: A powerful sampling profiler for Python programs. It lets you visualize what your program is spending time on without restarting it or modifying the code. It’s excellent for inspecting live production applications.

- `cProfile`: A built-in Python profiler that provides detailed, deterministic statistics about function calls. It’s more suited for development and benchmarking specific code paths.

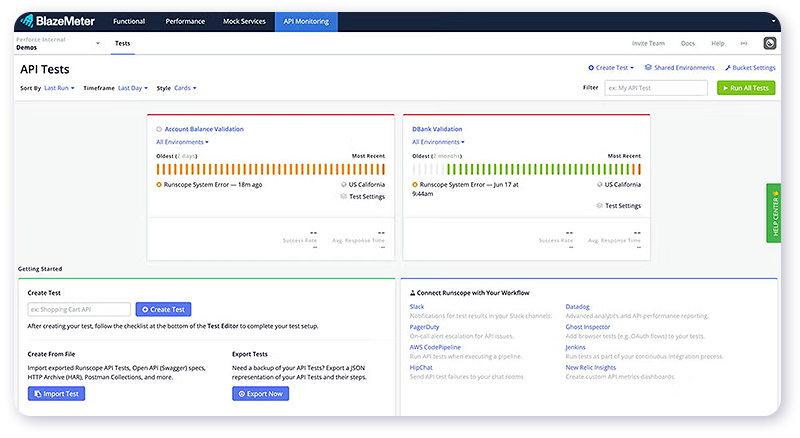

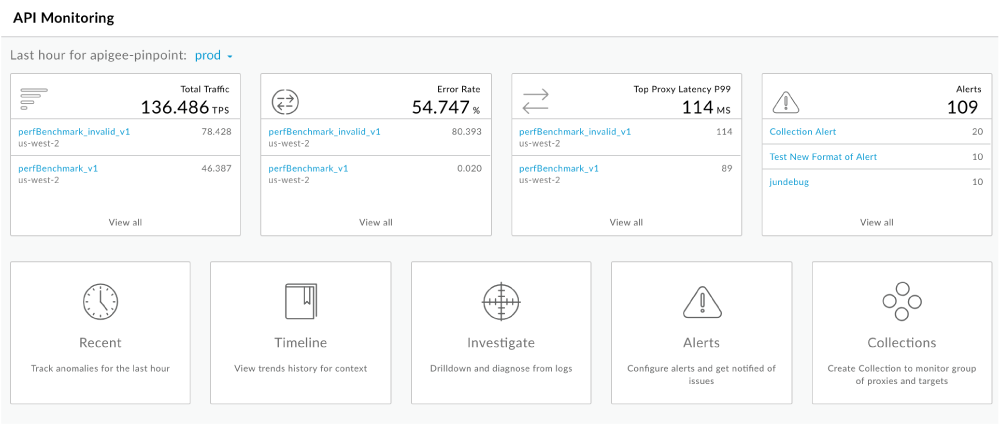
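For a quick look at where a specific code path spends its time, `cProfile` can wrap a single call and `pstats` can rank the results. In the sketch below, `handler` and `slow_serialize` are hypothetical stand-ins for endpoint code; in a real application you would profile the function behind a slow route, or attach `py-spy` to the running process instead.

```python
import cProfile
import io
import pstats


def slow_serialize(rows: list) -> str:
    # Hypothetical stand-in for endpoint work that turns out to be expensive
    return "\n".join(",".join(str(value) for value in row) for row in rows)


def handler() -> str:
    rows = [(i, i * 2, i * 3) for i in range(50_000)]
    return slow_serialize(rows)


profiler = cProfile.Profile()
profiler.enable()
handler()
profiler.disable()

report = io.StringIO()
stats = pstats.Stats(profiler, stream=report).sort_stats("cumulative")
stats.print_stats(5)  # show the five most expensive entries by cumulative time
print(report.getvalue())
```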

Real-Time Application Performance Monitoring (APM)

In a production environment, you need real-time visibility into your application’s health. Application Performance Monitoring (APM) tools provide this by collecting detailed data on request latency, error rates, database query times, and external API calls. This data is invaluable for pinpointing real-world performance issues that may not appear during local testing.

Popular APM solutions that support FastAPI include:

- OpenTelemetry: An open-source, vendor-neutral standard for observability.

- Datadog, New Relic, Sentry: Commercial platforms that offer comprehensive monitoring and alerting features.

Integrating an APM tool will give you dashboards and traces that can, for example, immediately highlight a specific SQL query that is slowing down an endpoint under load.

Conclusion

FastAPI provides an exceptionally performant foundation for building modern APIs. However, unlocking its full potential requires a deliberate and informed approach to optimization. The journey to a high-performance application begins with a solid mastery of asynchronous programming, ensuring you never block the event loop. From there, focus on the most common bottleneck: the database. By choosing async drivers, implementing connection pooling, and making smart trade-offs between ORMs and lower-level tools, you can achieve significant gains.

Finally, remember that code-level optimizations must be paired with a robust production environment. Properly configuring your ASGI server, implementing a smart caching strategy, and continuously measuring performance with profiling and APM tools are what separate a fast application from a truly scalable and resilient one. By applying these strategies iteratively, you can build FastAPI services that are not only a joy to develop but also capable of handling immense production workloads with speed and efficiency.