Python Data Pipeline Automation – Part 4

Automate your data processing workflows with Python. This guide covers ETL pipelines, data validation, error handling, and monitoring for production data systems. This is part 4 of our comprehensive series covering advanced techniques and practical implementations.

Welcome back to our comprehensive series on Python data pipeline automation. In the previous installments, we laid the groundwork by exploring the fundamentals of ETL (Extract, Transform, Load), building basic pipelines, and scheduling them. Now, we venture into the most critical phase: transforming our development scripts into robust, reliable, and production-ready data systems. This transition is less about writing new code and more about building a resilient architecture around it. A production pipeline must not only work on a “good day” but must also be able to handle failures gracefully, ensure data integrity, and provide clear visibility into its operations.

In this in-depth guide, we will dissect the four pillars of a production-grade data pipeline: advanced orchestration, rigorous data validation, sophisticated error handling, and comprehensive monitoring. We’ll move beyond simple `try…except` blocks and scheduled cron jobs to explore industry-standard tools and best practices that define modern data engineering. By the end of this article, you will have a clear roadmap for elevating your Python data pipelines from functional prototypes to dependable assets that your organization can trust.

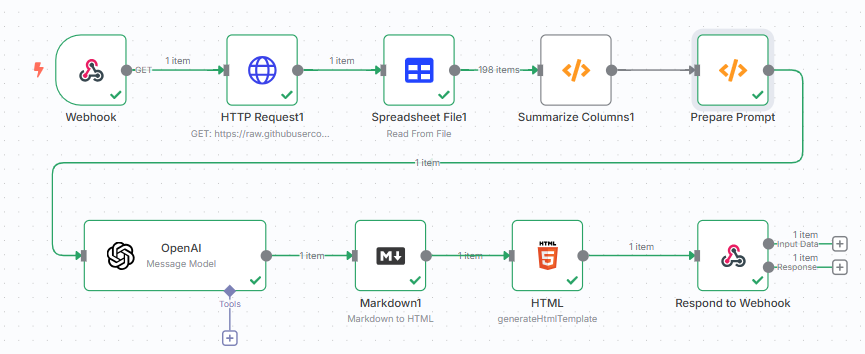

Beyond Simple Scripts: Orchestrating Complex Workflows

A single, monolithic Python script might suffice for a simple, one-off task, but production data workflows are rarely that straightforward. They often consist of multiple interdependent tasks. For example, you might need to fetch data from three different APIs, wait for all three to complete, join the results, validate the combined dataset, and then load it into a data warehouse and a separate caching system. Managing these dependencies, handling partial failures, and re-running specific steps manually is a recipe for disaster. This is where orchestration frameworks come in.

Choosing the Right Orchestration Tool

Orchestration tools allow you to define your workflow as a series of tasks with clear dependencies, typically visualized as a Directed Acyclic Graph (DAG). This provides a structured way to manage execution, retries, and logging. The Python ecosystem offers several powerful options:

- Apache Airflow: For years, Airflow has been the de facto industry standard. It’s incredibly powerful and extensible, with a massive library of pre-built “Operators” to interact with virtually any system (databases, cloud storage, APIs). You define your DAGs in Python scripts, and Airflow’s scheduler and web UI handle the rest. Its maturity is a significant advantage, but it can have a steep learning curve and a more complex setup.

- Prefect: A modern alternative that bills itself as “the new standard in dataflow automation.” Prefect’s core philosophy is more Python-native. You can often convert an existing Python script into a Prefect flow by adding a few decorators. It excels at handling dynamic workflows, where the structure of the DAG might change at runtime, and its local development experience is generally considered smoother than Airflow’s.

- Dagster: Dagster is a “data-aware” orchestrator. It places a strong emphasis on the data assets that your pipeline produces, not just the tasks that run. It has excellent built-in features for local development, testing, and a powerful UI (Dagit) that helps you visualize data lineage and asset health. It’s a fantastic choice for teams that want to build a more holistic and maintainable data platform.

Idempotency: The Cornerstone of Reliability

A critical concept in building reliable pipelines is idempotency. An idempotent operation is one that can be performed multiple times without changing the result beyond the initial application. Why is this so important? Imagine a task that inserts records into a database fails halfway through. If you simply re-run the script, you might end up with duplicate records. An idempotent task would be designed to handle this, for example, by using an `INSERT…ON CONFLICT DO NOTHING` statement or by first deleting the records for that specific batch before inserting.

Consider this simple file-writing example:

# NON-IDEMPOTENT: Running this twice will create duplicate data

def append_data_to_file(file_path, data):

with open(file_path, 'a') as f:

for record in data:

f.write(f"{record}\n")

# IDEMPOTENT: Running this twice produces the same result as running it once

def overwrite_data_to_file(file_path, data):

# This approach overwrites the entire file, ensuring a consistent state

with open(file_path, 'w') as f:

for record in data:

f.write(f"{record}\n")

In a real-world scenario, you would likely use more sophisticated techniques, such as writing to a temporary location and then performing an atomic move/rename operation, or using transactional properties of your target database. Always design your tasks to be re-runnable without causing side effects.

Ensuring Data Integrity with Quality Gates

The “Garbage In, Garbage Out” (GIGO) principle is the bane of data teams. A pipeline that reliably loads incorrect or incomplete data is often more dangerous than one that fails outright. Implementing data validation as a “quality gate” within your pipeline is non-negotiable. This means actively checking your data against a set of predefined rules and expectations before allowing it to proceed to the next stage.

Leveraging Data Validation Frameworks

While you can write custom validation logic with `if` statements and assertions in pandas, this quickly becomes unmanageable. Specialized libraries provide a declarative and maintainable way to define and execute data quality checks.

- Pydantic: Excellent for schema validation, especially when dealing with data from APIs (JSON) or configuration files. It uses Python type hints to validate data structures. If you expect a record to have a specific set of fields with specific data types, Pydantic can enforce that contract at runtime.

- Great Expectations: A comprehensive framework for data quality. Its power lies in its expressive and human-readable “Expectations.” Instead of writing code to check if a column’s values are unique, you simply declare `expect_column_values_to_be_unique`. Great Expectations can automatically generate “Data Docs”—HTML reports on your data quality—and store validation results for tracking quality over time.

Here’s a conceptual example of using Great Expectations to validate a pandas DataFrame:

import pandas as pd

import great_expectations as ge

# Assume 'df' is a pandas DataFrame loaded from your source

# df = pd.read_csv("my_data.csv")

# Convert DataFrame to a Great Expectations object

ge_df = ge.from_pandas(df)

# Define expectations (the "quality gate")

ge_df.expect_column_to_exist("user_id")

ge_df.expect_column_values_to_be_unique("user_id")

ge_df.expect_column_values_to_not_be_null("registration_date")

ge_df.expect_column_values_to_be_between("age", min_value=18, max_value=100)

# Validate the data against the expectations

validation_results = ge_df.validate()

# Programmatically check if the validation was successful

if not validation_results["success"]:

# Halt the pipeline, send an alert, or quarantine the bad data

print("Data validation failed!")

# The results object contains detailed information on which checks failed

print(validation_results)

else:

print("Data validation successful. Proceeding to the next step.")

Implementing Data Contracts

A more advanced concept gaining traction is the data contract. This is a formal, machine-readable agreement between a data producer (e.g., an application’s backend) and a data consumer (your pipeline) that defines the schema, semantics, and quality expectations for a dataset. By establishing a contract, validation can be enforced at the source, preventing bad data from ever entering your pipeline in the first place. This “shift-left” approach to data quality fosters accountability and makes the entire data ecosystem more reliable.

From `try…except` to Resilient Systems

In production, errors are not an “if” but a “when.” A network connection will drop, an API will return a 503 error, or a file will arrive with unexpected formatting. A resilient pipeline anticipates these failures and has a clear strategy for handling them. A simple `try…except Exception:` block that just prints the error is insufficient.

Granular Exception Handling and Retries

Not all errors are created equal. It’s crucial to differentiate between:

- Transient Errors: These are temporary issues, like a network timeout, a temporary API rate limit, or a database deadlock. These errors can often be resolved by simply waiting and retrying the operation.

- Permanent Errors: These are deterministic failures, such as a missing file, a permissions error, or data that fails validation. Retrying these errors will only lead to the same failure.

Your error handling logic should reflect this. For transient errors, a retry strategy with exponential backoff is a best practice. This means the delay between retries increases after each failed attempt, which prevents overwhelming a struggling downstream service. The `tenacity` library in Python makes this incredibly easy to implement.

import requests

from tenacity import retry, stop_after_attempt, wait_exponential

# This function will be retried up to 5 times with exponential backoff

# if it raises an HTTPError. Other exceptions will fail immediately.

@retry(

stop=stop_after_attempt(5),

wait=wait_exponential(multiplier=1, min=4, max=10)

)

def fetch_data_from_flaky_api(api_url):

print("Attempting to fetch data...")

response = requests.get(api_url)

# Raise an exception for bad status codes (4xx or 5xx)

response.raise_for_status()

print("Successfully fetched data!")

return response.json()

try:

data = fetch_data_from_flaky_api("http://httpstat.us/503")

except requests.exceptions.HTTPError as e:

print(f"API call failed permanently after multiple retries: {e}")

Structured Logging for Actionable Insights

When a pipeline fails in the middle of the night, `print()` statements are useless. You need a detailed, searchable log trail to diagnose the problem quickly. Structured logging is the practice of creating logs in a consistent, machine-readable format like JSON, rather than as plain text strings. This allows you to easily ingest logs into platforms like Elasticsearch, Datadog, or Splunk and perform powerful queries.

Your logs should include rich context with every message: the name of the pipeline, the specific task, the run ID, and any relevant data identifiers (like a batch ID or filename). Staying updated with the latest python news and libraries, such as `structlog`, can significantly improve your logging capabilities by making it easy to add this context automatically.

Comprehensive Monitoring and Alerting

Once your pipeline is deployed, your job has just begun. Monitoring provides the visibility needed to ensure it’s operating correctly and efficiently. Without it, you are flying blind. A pipeline could be “failing silently” by processing zero records for days, and you would never know until a business user complains about a stale dashboard.

Key Metrics to Monitor

You should track several categories of metrics to get a holistic view of your pipeline’s health:

- Pipeline Health: Track the status of each run (success, failed). Measure the end-to-end duration of your pipeline; a sudden increase could indicate a performance bottleneck.

- Data Freshness: How long has it been since your target data warehouse or table was last updated? This is a critical Service Level Objective (SLO) for many data products.

- Data Volume: Monitor the number of rows processed or the size of the data ingested. A sudden, drastic drop or spike is a strong indicator of an upstream problem or a bug in your extraction logic.

- Resource Utilization: Keep an eye on the CPU and memory usage of your pipeline jobs. This can help you optimize performance and control cloud infrastructure costs.

Implementing an Actionable Alerting Strategy

Monitoring is passive; alerting is active. An alert should be triggered when a metric crosses a predefined threshold, notifying the on-call data engineer of a potential issue. A good alerting strategy is crucial to avoid “alert fatigue.”

Differentiate between severity levels. A complete pipeline failure at 3 AM should trigger a PagerDuty alert that wakes someone up. A pipeline run that took 20% longer than usual might just generate a warning in a team’s Slack channel for investigation during business hours. Most orchestration tools have built-in integrations for sending alerts to various channels (Email, Slack, PagerDuty, etc.), allowing you to configure these rules directly within your workflow definition.

Conclusion: Building a Mature Data Practice with Python

Transitioning a Python data script into a production-grade pipeline is a significant leap in engineering maturity. It requires a shift in mindset from “does it run?” to “is it reliable, observable, and maintainable?” By embracing robust orchestration tools like Airflow or Prefect, implementing rigorous data quality gates with frameworks like Great Expectations, designing sophisticated error handling with intelligent retries, and building a comprehensive monitoring and alerting system, you create a foundation of trust. These practices ensure that your data systems are not fragile liabilities but resilient, dependable assets that consistently deliver high-quality data to the business, empowering confident, data-driven decisions.