Machine Learning Model Deployment with Python – Part 5

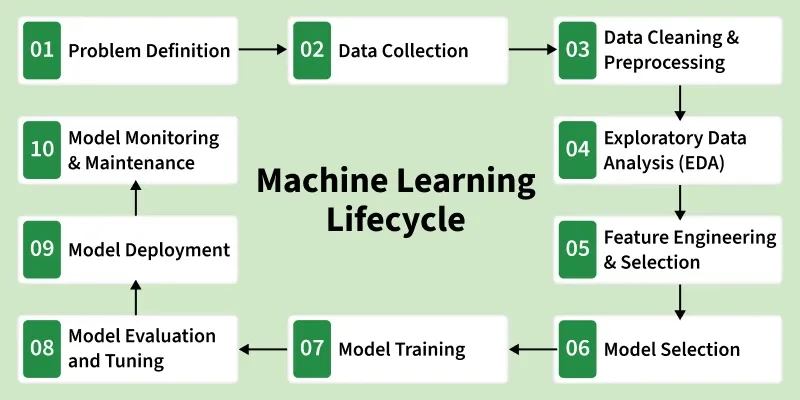

Welcome to the fifth installment of our comprehensive series on deploying machine learning models in production using Python. In the previous parts, we explored the journey from data preparation and model training to initial validation. Now, we venture into the most critical and often most challenging phase: taking a trained model and transforming it into a robust, scalable, and reliable service that delivers real-world value. This is where data science meets software engineering, a domain commonly known as MLOps (Machine Learning Operations).

This step-by-step guide walks through the techniques and practical implementations required for production-grade deployment. We will cover the essential pillars of modern ML systems: containerization with Docker, creating high-performance APIs for model serving, implementing monitoring strategies to catch performance degradation, and designing for scale. Whether you’re a data scientist looking to productionize your work or a software engineer tasked with building ML infrastructure, this article provides the background and code examples needed to get started. MLOps tooling evolves quickly, so keeping up with current Python libraries and best practices is key to building systems that last.

The Production-Ready Mindset: From Notebooks to Services

The transition from a Jupyter Notebook to a production environment is a significant leap in complexity and required rigor. A model that achieves 95% accuracy in a static, controlled environment is useless if it cannot handle real-world data, respond to requests in milliseconds, or run reliably 24/7. Adopting a production-ready mindset means shifting focus from purely algorithmic performance to system-level attributes like reliability, scalability, maintainability, and observability.

Core Components of a Modern ML Deployment Stack

A typical production ML system built with Python is not a single script but a collection of interconnected components working in harmony. Understanding these pieces is the first step toward building a robust deployment.

- Model Artifacts: This is the trained model itself, often saved (or “pickled”) to a file (e.g., model.pkl, model.h5). It also includes any other necessary components like vectorizers, encoders, or configuration files that are essential for the model to make predictions on raw data.

- Serving Layer (API): The model needs an interface to the outside world. This is typically a REST API that exposes one or more endpoints (e.g., /predict). When the API receives a request with input data, it processes the data, passes it to the model, and returns the prediction. Python frameworks like FastAPI and Flask are the industry standards for this task.

- Containerization: To ensure the model and its environment are portable, reproducible, and isolated, we package the entire application (Python code, dependencies, model artifacts) into a container. Docker is the de facto standard for containerization. A container acts as a lightweight, self-contained unit that can run consistently on any machine, from a developer’s laptop to a cloud server.

- Infrastructure and Orchestration: The container needs a place to run. This could be a single virtual machine in the cloud (e.g., an AWS EC2 instance) for simple applications. For more complex, high-availability systems, a container orchestrator like Kubernetes is used to manage, scale, and schedule container workloads across a cluster of machines automatically.
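Because a model artifact is usually several files (the model plus encoders, vectorizers, or configuration), it can help to ship them with a small manifest so the serving layer can check that it loaded a consistent set. The sketch below is one possible approach using only the standard library; the manifest.json file name, the function names, and the manifest layout are all hypothetical and not part of any particular tool.

```python
import hashlib
import json
from pathlib import Path
from typing import Dict, List

MANIFEST_NAME = "manifest.json"  # hypothetical file name chosen for this sketch


def sha256_of(path: Path) -> str:
    """Return the SHA-256 hex digest of a file's contents."""
    return hashlib.sha256(path.read_bytes()).hexdigest()


def build_manifest(artifact_dir: Path, version: str) -> Dict[str, object]:
    """Record every artifact file in the directory together with its checksum."""
    files = {
        path.name: sha256_of(path)
        for path in sorted(artifact_dir.iterdir())
        if path.is_file() and path.name != MANIFEST_NAME
    }
    return {"version": version, "files": files}


def save_manifest(artifact_dir: Path, manifest: Dict[str, object]) -> Path:
    """Write the manifest next to the artifacts as JSON."""
    target = artifact_dir / MANIFEST_NAME
    target.write_text(json.dumps(manifest, indent=2), encoding="utf-8")
    return target


def verify_manifest(artifact_dir: Path, manifest: Dict[str, object]) -> List[str]:
    """Return the names of files that are missing or whose contents changed."""
    problems = []
    for name, expected_digest in manifest["files"].items():
        path = artifact_dir / name
        if not path.is_file() or sha256_of(path) != expected_digest:
            problems.append(name)
    return problems
```

A serving process could call verify_manifest at startup and refuse to start if the returned list is not empty, which catches the case where a new model file was deployed alongside a stale encoder.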

Why This Stack? The Benefits of Decoupling

This component-based architecture is powerful because it decouples the data science work from the operational concerns. Data scientists can focus on improving the model, and when a new version is ready, they simply provide a new model artifact. The engineering team can independently optimize the API, scale the infrastructure, and manage security without needing to understand the intricate details of the machine learning model itself. This separation of concerns is fundamental to building scalable and maintainable MLOps pipelines.

Deep Dive: Containerizing and Serving a Python ML Model

Let’s move from theory to practice. In this section, we’ll walk through the process of taking a trained Scikit-learn model, wrapping it in a FastAPI application, and containerizing it with Docker to create a production-ready prediction service.

Step 1: Building the Prediction API with FastAPI

FastAPI is a modern, high-performance web framework for building APIs with Python 3.7+ based on standard Python type hints. It’s incredibly fast, easy to use, and automatically generates interactive API documentation (via Swagger UI), making it a superb choice for ML model serving.

First, ensure you have the necessary libraries installed:

    pip install fastapi uvicorn scikit-learn pydantic joblib

Let’s assume we have a simple classification model trained and saved as model.joblib. Our API code, saved in a file named main.py, would look like this:

    from fastapi import FastAPI
    from pydantic import BaseModel
    import joblib
    import numpy as np

    # 1. Initialize the FastAPI app
    app = FastAPI(title="ML Model Deployment with Python", version="1.0")

    # 2. Load the trained model artifact
    model = joblib.load("model.joblib")

    # 3. Define the request body structure using Pydantic
    class ModelInput(BaseModel):
        feature1: float
        feature2: float
        feature3: float
        feature4: float

    # 4. Define the prediction endpoint
    @app.post("/predict")
    def predict(data: ModelInput):
        """
        Takes input data and returns a model prediction.
        """
        # Convert input data to a numpy array for the model
        input_data = np.array([[data.feature1, data.feature2, data.feature3, data.feature4]])

        # Get the prediction from the model
        prediction = model.predict(input_data)
        probability = model.predict_proba(input_data).max()

        # Return the prediction and probability
        return {
            "prediction": int(prediction[0]),
            "probability": float(probability)
        }

    @app.get("/")
    def read_root():
        return {"message": "Welcome to the ML Model Prediction API"}

This code defines a /predict endpoint that accepts a POST request with a JSON body containing four features. Pydantic handles data validation automatically, ensuring the input data matches the expected types. The API then uses the loaded model to make a prediction and returns the result.
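To make the request and response shapes concrete, here is a minimal client sketch that uses only the standard library. It assumes the app is reachable at http://localhost:8000 (for example when started locally with uvicorn); the helper names build_predict_request, parse_prediction, and send are hypothetical, and the feature values in the usage note are made up.

```python
import json
import urllib.request
from typing import Dict

PREDICT_URL = "http://localhost:8000/predict"  # assumed local address of the service


def build_predict_request(
    features: Dict[str, float], url: str = PREDICT_URL
) -> urllib.request.Request:
    """Build (but do not send) a JSON POST request for the /predict endpoint."""
    body = json.dumps(features).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def parse_prediction(raw_body: bytes) -> Dict[str, object]:
    """Decode the JSON document returned by the endpoint."""
    payload = json.loads(raw_body.decode("utf-8"))
    return {
        "prediction": int(payload["prediction"]),
        "probability": float(payload["probability"]),
    }


def send(request: urllib.request.Request, timeout: float = 5.0) -> Dict[str, object]:
    """Send the request to a running service and return the parsed prediction."""
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return parse_prediction(response.read())
```

With the service running, send(build_predict_request({"feature1": 5.1, "feature2": 3.5, "feature3": 1.4, "feature4": 0.2})) would return a dictionary with the prediction and probability keys. If a field is missing or has the wrong type, FastAPI rejects the request with a 422 response, and urlopen raises an HTTPError.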

Step 2: Containerizing the Application with Docker

Now, we’ll create a Dockerfile to package our FastAPI application. This file is a blueprint that tells Docker how to build the image.

    # 1. Use an official lightweight Python base image
    FROM python:3.9-slim

    # 2. Set the working directory inside the container
    WORKDIR /app

    # 3. Copy the dependency file and install dependencies
    COPY requirements.txt .
    RUN pip install --no-cache-dir -r requirements.txt

    # 4. Copy the application code and model artifact into the container
    COPY . .

    # 5. Expose the port the app runs on
    EXPOSE 8000

    # 6. Define the command to run the application using uvicorn
    CMD ["uvicorn", "main:app", "--host", "0.0.0.0", "--port", "8000"]

Your requirements.txt file should list all necessary Python packages:

    fastapi
    uvicorn
    scikit-learn==1.2.0 # Pinning versions is crucial for reproducibility!
    joblib
    numpy
    pydantic
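Since only one package above is pinned, a small check can flag unpinned entries and pins that do not match the installed environment. The sketch below is a simplified illustration: the function names are hypothetical, and the parser only understands plain "name" and "name==version" lines with optional "#" comments, not the full requirements file syntax that pip accepts.

```python
from importlib import metadata
from typing import Dict, List, Optional


def parse_pins(requirements_text: str) -> Dict[str, Optional[str]]:
    """Map each package name to its pinned version, or None if it is unpinned."""
    pins: Dict[str, Optional[str]] = {}
    for raw_line in requirements_text.splitlines():
        line = raw_line.split("#", 1)[0].strip()  # drop comments (simplified)
        if not line:
            continue
        name, separator, version = line.partition("==")
        pins[name.strip().lower()] = version.strip() if separator else None
    return pins


def unpinned(pins: Dict[str, Optional[str]]) -> List[str]:
    """Return the packages that have no exact version pin."""
    return sorted(name for name, version in pins.items() if version is None)


def mismatches(pins: Dict[str, Optional[str]]) -> Dict[str, str]:
    """Compare pinned versions against what is installed in this environment."""
    problems: Dict[str, str] = {}
    for name, wanted in pins.items():
        if wanted is None:
            continue
        try:
            installed = metadata.version(name)
        except metadata.PackageNotFoundError:
            problems[name] = "not installed"
            continue
        if installed != wanted:
            problems[name] = f"installed {installed}, pinned {wanted}"
    return problems
```

Running unpinned(parse_pins(...)) on the file above lists five of the six packages, which is a reminder that a fully reproducible image would pin all of them to the versions used during training.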

To build and run the container, you would execute the following commands in your terminal:

    # Build the Docker image
    docker build -t ml-prediction-service .

    # Run the Docker container
    docker run -d -p 8000:8000 --name my-ml-app ml-prediction-service

Your model is now running inside a container, accessible on port 8000 of your local machine. You can send requests to http://localhost:8000/predict and receive predictions. This containerized service is now a portable, reproducible artifact ready for deployment to any cloud provider or on-premise server.
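Because the container starts in the background, the API may need a moment before it accepts connections. A simple smoke test can poll the root endpoint until it answers. The following is a minimal sketch using only the standard library; the function names, retry count, and delay are illustrative assumptions rather than recommended values.

```python
import http.client
import time
import urllib.request
from typing import Callable


def http_probe(url: str) -> bool:
    """Return True if a GET request to the URL answers with HTTP 200."""
    try:
        with urllib.request.urlopen(url, timeout=2) as response:
            return response.status == 200
    except (OSError, http.client.HTTPException):
        # Connection refused, timeouts, and HTTP errors all count as "not ready".
        return False


def wait_until_ready(
    probe: Callable[[], bool],
    attempts: int = 10,
    delay_seconds: float = 1.0,
    sleep: Callable[[float], None] = time.sleep,
) -> bool:
    """Call probe() until it succeeds or the attempts run out."""
    for attempt in range(attempts):
        if probe():
            return True
        if attempt < attempts - 1:
            sleep(delay_seconds)  # wait before the next attempt
    return False
```

For the container above, the call would look like wait_until_ready(lambda: http_probe("http://localhost:8000/")). Passing the probe and the sleep function as arguments keeps the retry logic testable without a running service.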

The Day After: Advanced Monitoring and Scaling Strategies

Deploying a model is not the end of the journey; it’s the beginning. Without proper monitoring, a perfectly good model can silently degrade over time, leading to poor business outcomes. This is where MLOps truly shines, providing the tools and practices to ensure long-term model health and performance.

Essential Monitoring for Production ML Systems

Monitoring can be broken down into three critical categories:

- Operational Monitoring: This is standard practice in software engineering. We need to track the health of our service itself. Key metrics include:

  - Latency: How long does it take to serve a prediction? Spikes in latency can indicate performance bottlenecks.

  - Traffic (QPS): How many queries per second is the service handling?

  - Error Rate: What percentage of requests result in errors (e.g., 5xx server errors)?

  - Resource Utilization: CPU, memory, and disk usage of the containers.

  Tools like Prometheus for metrics collection and Grafana for visualization are the industry standard for this type of monitoring.

- Model Performance Monitoring: This involves tracking the statistical performance of the model on live data. If you have access to ground truth labels (even with a delay), you can calculate metrics like accuracy, precision, recall, or F1-score over time. A sudden drop in these metrics is a clear signal that the model is no longer performing as expected and may need retraining.

- Data and Concept Drift Detection: This is perhaps the most distinctive and critical aspect of ML monitoring.

  - Data Drift: This occurs when the statistical properties of the input data change. For example, a fraud detection model trained on pre-pandemic data might see a completely different distribution of transaction features post-pandemic. The model may still “work,” but its assumptions are no longer valid, leading to degraded performance.

  - Concept Drift: This is when the relationship between the input features and the target variable changes, often because user behavior itself changes. For instance, what constitutes a “spam” email evolves as spammers change their tactics.

Data drift can be detected by comparing the distribution of the inputs seen at prediction time against the training data distribution, using statistical tests like the Kolmogorov-Smirnov (K-S) test or the Population Stability Index (PSI). Concept drift is harder to see from inputs alone and usually shows up as a drop in the performance metrics once ground truth labels arrive. Open-source Python libraries like Evidently AI and NannyML are specifically designed for this purpose.
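To illustrate the PSI idea for a single numeric feature, here is a minimal pure-Python sketch. It assumes equal-width bins derived from the training sample and a small epsilon to avoid taking the logarithm of zero; both are simplifications chosen for this example, and dedicated libraries handle binning and categorical features more carefully. The function names are hypothetical.

```python
import math
from typing import List, Sequence


def interior_edges(reference: Sequence[float], bins: int) -> List[float]:
    """Equal-width interior bin edges spanning the reference (training) sample."""
    low, high = min(reference), max(reference)
    width = (high - low) / bins
    return [low + i * width for i in range(1, bins)]


def bin_proportions(
    values: Sequence[float], edges: Sequence[float], epsilon: float = 1e-6
) -> List[float]:
    """Share of values per bin; values outside the range land in the end bins."""
    counts = [0] * (len(edges) + 1)
    for value in values:
        index = sum(1 for edge in edges if value >= edge)
        counts[index] += 1
    # Floor each share at epsilon so empty bins do not produce log(0).
    return [max(count / len(values), epsilon) for count in counts]


def population_stability_index(
    reference: Sequence[float], live: Sequence[float], bins: int = 10
) -> float:
    """PSI = sum over bins of (actual - expected) * ln(actual / expected)."""
    edges = interior_edges(reference, bins)
    expected = bin_proportions(reference, edges)
    actual = bin_proportions(live, edges)
    return sum((a - e) * math.log(a / e) for e, a in zip(expected, actual))
```

A PSI of zero means the two samples fall into the bins in identical proportions, and larger values mean a bigger shift. A common rule of thumb treats values above roughly 0.25 as a significant shift, but that threshold is a convention rather than a guarantee and is best tuned per feature. For the K-S test, scipy.stats.ks_2samp provides a ready-made two-sample implementation.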

Strategies for Scaling Your ML Service

As your application grows, a single container on a single machine won’t be enough. You need a strategy to handle increased load.

- Vertical Scaling: The simplest approach. You give the machine running your container more resources (more CPU, more RAM). This is easy to do but has a hard physical limit and can become very expensive.

- Horizontal Scaling: The preferred cloud-native approach. Instead of making one machine bigger, you add more machines and run multiple identical copies (replicas) of your container. A load balancer distributes incoming traffic across all the replicas. This is highly flexible and cost-effective.

Kubernetes is the most widely used tool for automating horizontal scaling. It handles deploying, managing, and scaling containerized applications. With a feature called the Horizontal Pod Autoscaler (HPA), Kubernetes can automatically increase or decrease the number of model replicas based on observed metrics like CPU utilization or, with a custom metrics adapter in place, application metrics like queries per second. This helps your service keep enough resources to meet demand without over-provisioning and wasting money.
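The core of the HPA's scaling decision is a simple ratio, documented by Kubernetes as desiredReplicas = ceil(currentReplicas * currentMetricValue / desiredMetricValue). The sketch below reproduces that calculation in Python to show how it behaves. It is a simplification: the function name and default bounds are hypothetical, and the real autoscaler also accounts for pod readiness, missing metrics, multiple metrics, and stabilization windows.

```python
import math


def desired_replicas(
    current_replicas: int,
    current_metric: float,
    target_metric: float,
    min_replicas: int = 1,
    max_replicas: int = 10,
    tolerance: float = 0.1,
) -> int:
    """Approximate the replica count the HPA formula would ask for."""
    if current_replicas <= 0 or target_metric <= 0:
        raise ValueError("current_replicas and target_metric must be positive")

    ratio = current_metric / target_metric
    # Skip scaling when the metric is already close enough to the target.
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas

    desired = math.ceil(current_replicas * ratio)
    # Keep the result within the configured replica bounds.
    return max(min_replicas, min(max_replicas, desired))
```

For example, four replicas running at 90% average CPU against a 60% target give desired_replicas(4, 90, 60) == 6, while the same four replicas at 15% would be scaled down to one.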

Choosing Your Path: DIY vs. Managed Platforms

When deploying your Python model, you face a fundamental choice: build your own infrastructure using open-source tools (the DIY approach) or leverage a managed MLOps platform from a cloud provider.

The DIY Approach (Docker, FastAPI, Kubernetes)

- Pros:

  - Maximum Control and Flexibility: You have complete control over every component of your stack and can customize it to your exact needs.

  - Vendor-Agnostic: Your containerized application can run on any cloud (AWS, GCP, Azure) or even on-premise, avoiding vendor lock-in.

  - Cost-Effective at Scale: For large-scale deployments, managing your own Kubernetes cluster can be more cost-effective than paying for managed services.

- Cons:

  - High Complexity: Requires significant DevOps and Kubernetes expertise. The learning curve is steep.

  - Operational Overhead: Your team is responsible for maintaining, patching, and securing the entire infrastructure.

Managed MLOps Platforms (AWS SageMaker, Vertex AI, Azure ML)

- Pros:

  - Speed and Simplicity: These platforms abstract away the underlying infrastructure. You can often deploy a model with a few API calls or clicks in a web UI, drastically reducing time-to-market.

  - Integrated Tooling: They provide a fully integrated suite of tools for the entire ML lifecycle, from data labeling and feature stores to model monitoring and CI/CD pipelines.

  - Reduced Operational Burden: The cloud provider manages the underlying servers, scaling, and security.

- Cons:

  - Potential for Vendor Lock-in: It can be difficult to migrate your MLOps pipelines from one cloud provider to another.

  - Less Flexibility: You are often constrained by the platform’s specific way of doing things.

  - Cost: The convenience comes at a price, and costs can escalate if not managed carefully.

Recommendation: For small teams or projects where speed is paramount, managed platforms are an excellent choice. For large organizations with dedicated platform engineering teams and a need for maximum flexibility, the DIY approach using Kubernetes often makes more sense in the long run. It is also worth watching the open-source MLOps ecosystem, where tools like BentoML and Seldon Core aim to bridge the gap by offering the flexibility of open source with some of the ease of use of managed platforms.

Conclusion: Deployment as an Engineering Discipline

Successfully deploying a machine learning model in production is a multifaceted engineering challenge that extends far beyond the initial model training. As we’ve explored in this guide, it requires a robust architecture built on the pillars of containerization, API serving, comprehensive monitoring, and intelligent scaling. By packaging your Python application with Docker, serving it via a high-performance API like FastAPI, and planning for monitoring and scaling from day one, you transform a static model artifact into a dynamic, value-generating service.

Whether you choose a DIY stack with Kubernetes or a managed cloud platform, the core principles remain the same. The goal is to build a system that is not only accurate but also reliable, observable, and maintainable. This shift in mindset—from data scientist to ML systems engineer—is the key to unlocking the true potential of machine learning in real-world applications and ensuring your models deliver sustained impact over time.