Mastering the New Era of Keras: Multi-Backend Workflows and Modern Deep Learning

The landscape of deep learning has shifted significantly with recent updates to the Keras ecosystem. For years, Keras was synonymous with TensorFlow, serving as its high-level API. Keras 3 (previewed as Keras Core) has fundamentally redefined the framework, returning it to its roots as a multi-backend interface while adding modern capabilities. Developers are no longer locked into a single execution engine: they can write a model once and run it on JAX, TensorFlow, or PyTorch without modification. This interoperability is not just a convenience; it is a strategic advantage in an era where model portability and hardware optimization are paramount.

This evolution comes at a critical time. The Python ecosystem is experiencing a renaissance, from the effort to make the Global Interpreter Lock (GIL) optional in CPython (PEP 703) to the rise of Rust-based Python tooling. As data scientists look to Polars DataFrames for faster preprocessing and the Mojo language for high-performance compute, Keras has positioned itself as a unifying layer for neural networks. In this guide, we explore the architecture of the new Keras, how it differs from the legacy tf.keras implementation, and how to use its backend-agnostic design for state-of-the-art machine learning.

Section 1: The Multi-Backend Paradigm and Core Concepts

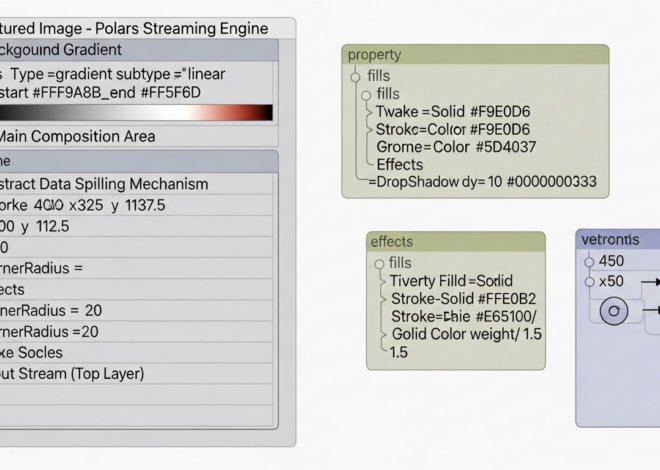

The most significant change in recent Keras history is the decoupling from TensorFlow as the sole backend. The backend is now chosen when Keras is first imported. Portability comes from a unified interface, keras.ops, which mirrors the NumPy API but works across all supported frameworks: a call such as ops.matmul is dispatched to the native operation of JAX, PyTorch, or TensorFlow at runtime.

This flexibility addresses a major pain point in the industry: fragmentation. Previously, a team using PyTorch for research had to rewrite models to deploy them in a TensorFlow-heavy production environment. Now the same model definition works on either. The update also improves debugging. With the PyTorch or JAX backends, or with run_eagerly=True in model.compile(), developers can step through model code with standard Python debuggers instead of working around TensorFlow's graph execution mode, although TensorFlow 2.7 and later versions have also improved the debugging experience.
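A minimal sketch of the eager debugging workflow, assuming Keras 3. DebugDense is a hypothetical layer defined only for this illustration; passing run_eagerly=True to compile() makes fit() run call() as ordinary Python on every step, so a print() or breakpoint() inside it sees concrete tensors on any backend.

```python
import numpy as np
import keras


class DebugDense(keras.layers.Dense):
    """Hypothetical layer for this sketch: reports the batch shape on each call."""

    def call(self, inputs):
        # A breakpoint() placed here would also stop with concrete values
        print("DebugDense input shape:", tuple(inputs.shape))
        return super().call(inputs)


model = keras.Sequential([keras.Input(shape=(4,)), DebugDense(2)])

# run_eagerly=True skips graph/JIT compilation of the train step
model.compile(optimizer="sgd", loss="mse", run_eagerly=True)

x = np.ones((8, 4), dtype="float32")
y = np.zeros((8, 2), dtype="float32")
history = model.fit(x, y, batch_size=4, epochs=1, verbose=0)
```

Eager execution is slower, so it is typically switched on only while investigating a problem.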

Setting Up a Backend-Agnostic Workflow

To use the multi-backend capabilities, you must configure the backend before importing Keras, either with the KERAS_BACKEND environment variable or with the "backend" key in the configuration file ~/.keras/keras.json. The example below selects the JAX backend through the environment variable.

```python
import os

# Set the backend before importing Keras
# Options: "jax", "tensorflow", "torch"
os.environ["KERAS_BACKEND"] = "jax"

import keras
import numpy as np

# Verify the backend
print(f"Running on backend: {keras.backend.backend()}")


# Define a simple backend-agnostic model
def get_model():
    inputs = keras.Input(shape=(784,))
    x = keras.layers.Dense(64, activation="relu")(inputs)
    x = keras.layers.Dense(64, activation="relu")(x)
    outputs = keras.layers.Dense(10, activation="softmax")(x)
    return keras.Model(inputs=inputs, outputs=outputs)


# Because of the environment variable, this model runs on JAX
model = get_model()
model.summary()

# Dummy data: random features and random one-hot labels for 10 classes
x_train = np.random.random((1000, 784)).astype("float32")
y_train = keras.utils.to_categorical(
    np.random.randint(0, 10, size=(1000,)), num_classes=10
)

# Compilation works the same regardless of backend
model.compile(
    optimizer="adam",
    loss="categorical_crossentropy",
    metrics=["accuracy"],
)

# Training
model.fit(x_train, y_train, epochs=2, batch_size=32)
```

Nothing in the model definition is tied to JAX: changing KERAS_BACKEND to "tensorflow" or "torch" runs the same script on another engine. Whether you preprocess data with scikit-learn or prepare the model for edge deployment, the model definition remains constant.
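The script above hard-codes "jax". To choose a backend based on what is actually installed, one option is to check which backend packages are importable before Keras is loaded. This is a minimal sketch: the helper name pick_backend and the preference order are assumptions, and an explicit KERAS_BACKEND value always takes priority.

```python
import importlib.util
import os

# Preference order is an assumption for this sketch; adjust to taste.
# These are both the importable package names and the KERAS_BACKEND values.
PREFERRED_BACKENDS = ("jax", "torch", "tensorflow")


def pick_backend(preferred=PREFERRED_BACKENDS):
    """Return an explicit KERAS_BACKEND override, else the first installed backend."""
    override = os.environ.get("KERAS_BACKEND")
    if override:
        return override
    for name in preferred:
        # find_spec returns None when the package is not installed
        if importlib.util.find_spec(name) is not None:
            return name
    raise RuntimeError("No supported Keras backend (jax, torch, tensorflow) is installed.")
```

In a script, set os.environ["KERAS_BACKEND"] = pick_backend() before the first import keras.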

Section 2: Implementation Details – Custom Layers and Ops

The true power of the Keras updates lies in the keras.ops namespace. In previous versions, writing a custom layer often required tf.math functions, locking the layer to TensorFlow. Now you can write custom layers with backend-neutral Keras operations. This is crucial for researchers developing novel architectures or algorithmic trading models, where custom loss functions and complex tensor manipulations are common.

Data pipelines have become more flexible as well. Keras 3 models accept NumPy arrays, tf.data datasets, and PyTorch DataLoader objects in fit(), so data from a Polars DataFrame, a DuckDB query result, or an Ibis expression can be fed to the model once it is converted to NumPy arrays. Keras then converts those arrays to backend-native tensors under the hood.

Writing a Backend-Agnostic Custom Layer

Let's implement a custom layer that performs RMS normalization (RMSNorm). Notice the use of keras.ops instead of backend-specific libraries: if you switch your stack to PyTorch, or to JAX with XLA compilation, the layer works without changes.

```python
import keras
from keras import ops


class AgnosticRMSNorm(keras.layers.Layer):
    def __init__(self, epsilon=1e-5, **kwargs):
        super().__init__(**kwargs)
        self.epsilon = epsilon

    def build(self, input_shape):
        # One learnable scale per feature, initialized to 1
        self.scale = self.add_weight(
            shape=(input_shape[-1],),
            initializer="ones",
            trainable=True,
            name="scale",
        )
        super().build(input_shape)

    def call(self, inputs):
        # Compute the mean square over the feature axis
        # ops.mean and ops.square work across TF, JAX, and Torch
        mean_square = ops.mean(ops.square(inputs), axis=-1, keepdims=True)
        # Reciprocal square root
        rsqrt = ops.rsqrt(mean_square + self.epsilon)
        # Normalize and scale
        return inputs * rsqrt * self.scale

    def get_config(self):
        # Store epsilon so the layer can be re-created when a model is reloaded
        config = super().get_config()
        config.update({"epsilon": self.epsilon})
        return config


# Usage
layer = AgnosticRMSNorm()
test_input = ops.convert_to_tensor([[1.0, 2.0, 3.0]])
output = layer(test_input)
print(f"Output shape: {output.shape}")
```

This implementation is cleaner and more portable than one written against tf.math. It also fits modern Python tooling: type hints checked by mypy can catch interface mistakes during development, although they do not verify tensor shapes, so shape checks still belong in tests.
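The call method implements the standard RMSNorm formula, where g is the learned scale weight and ε is epsilon. With the default ones initializer g = 1, so for the usage input [1, 2, 3] the layer should produce approximately the values in the last line (ε = 1e-5 is too small to matter at this precision).

```latex
\begin{aligned}
\mathrm{RMS}(x) &= \sqrt{\frac{1}{n}\sum_{i=1}^{n} x_i^{2} + \epsilon}, \qquad y_i = \frac{x_i}{\mathrm{RMS}(x)}\, g_i \\
x = (1, 2, 3),\ g = (1, 1, 1):\quad \frac{1}{3}(1 + 4 + 9) &= \frac{14}{3} \approx 4.667, \qquad \mathrm{RMS}(x) \approx 2.160 \\
y &\approx (0.463,\ 0.926,\ 1.389)
\end{aligned}
```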

Section 3: Advanced Techniques – GenAI and Ecosystem Integration

Recent Keras updates have focused heavily on generative AI. The ecosystem includes KerasNLP and KerasCV, which provide modular components for building large language models (LLMs) and vision transformers. This is particularly relevant given the growth of local LLM development and of retrieval-augmented generation (RAG) frameworks such as LlamaIndex.

With KerasNLP, developers can fine-tune pre-trained models, from large LLMs such as Llama to smaller encoders such as BERT, using LoRA (Low-Rank Adaptation) with minimal code. The fine-tuned Keras model can then serve as the reasoning engine in a larger LangChain pipeline. For those working in finance or quantum computing (for example with Qiskit), the ability to plug domain-specific tokenizers and embeddings into a standard Keras pipeline simplifies the workflow significantly.
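The savings come from the low-rank factorization at the heart of LoRA: a pre-trained weight matrix W_0 is frozen and only a low-rank update BA is learned. The dimensions below (d = k = 768, r = 4) are illustrative and not tied to any specific preset; the original LoRA paper also scales BA by a constant factor, omitted here for clarity.

```latex
\begin{aligned}
W &= W_0 + \Delta W = W_0 + BA, \qquad W_0 \in \mathbb{R}^{d \times k}\ \text{(frozen)},\quad B \in \mathbb{R}^{d \times r},\quad A \in \mathbb{R}^{r \times k},\quad r \ll \min(d, k) \\
\text{trainable parameters:}\quad & r(d + k)\ \text{instead of}\ dk \\
d = k = 768,\ r = 4:\quad & 4 \times 1536 = 6{,}144\ \text{instead of}\ 768 \times 768 = 589{,}824\ (\approx 1\%)
\end{aligned}
```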

Fine-Tuning an LLM with LoRA

The following example sets up a LoRA fine-tuning task. Because only small low-rank matrices are trained, the approach is memory-efficient and can often run on consumer hardware rather than requiring cloud accelerators.

```python
import numpy as np
import keras
import keras_nlp

# Load a small pre-trained BERT classifier for the demo.
# In a real scenario, this could be a larger model such as Llama 2 or Mistral.
# The classifier bundles its own preprocessor, so it accepts raw strings.
classifier = keras_nlp.models.BertClassifier.from_preset(
    "bert_tiny_en_uncased",
    num_classes=2,
)

# Enable LoRA on the backbone: this freezes the original weights
# and only trains the rank-decomposition matrices
classifier.backbone.enable_lora(rank=4)

# Compile with a modern optimizer; the classifier outputs logits
classifier.compile(
    loss=keras.losses.SparseCategoricalCrossentropy(from_logits=True),
    optimizer=keras.optimizers.AdamW(learning_rate=5e-5),
    metrics=["accuracy"],
)

# The summary shows significantly fewer trainable parameters
classifier.summary()

# Mock training data (in practice this might be scraped with Scrapy or Playwright)
train_data = ["This is great news", "Market is crashing", "Buy the dip", "Sell now"]
train_labels = np.array([1, 0, 1, 0])

# Train
classifier.fit(x=train_data, y=train_labels, batch_size=2, epochs=1)
```

This workflow allows for rapid experimentation. You can track training progress in a reactive notebook such as Marimo, and serve predictions from a microservice built with FastAPI, Litestar, or Django's async views.

Section 4: Best Practices, Tooling, and Optimization

Adopting the new Keras requires more than code changes; it also affects your development lifecycle. Tools such as uv and Rye have made Python package management faster and more reliable. When building Keras applications, consider a project manager such as Hatch or PDM to lock dependencies, so that the versions of your chosen backends (for example, JAX and PyTorch) do not conflict.
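When pinning dependencies, it helps to record which Keras and backend versions an environment actually contains. This is a minimal sketch using only the standard library; the helper name installed_versions is hypothetical, and the tuple lists the PyPI distribution names of Keras and its backends.

```python
from importlib import metadata

# PyPI distribution names for Keras and its three backends
PACKAGES = ("keras", "jax", "jaxlib", "torch", "tensorflow")


def installed_versions(packages=PACKAGES):
    """Map each distribution name to its installed version, or None if absent."""
    versions = {}
    for name in packages:
        try:
            versions[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            versions[name] = None
    return versions


for package, version in installed_versions().items():
    print(f"{package:>10}: {version or 'not installed'}")
```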

Performance and Safety

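Performance optimization in Keras leans heavily on compilation. On the JAX and TensorFlow backends, Keras can compile the training and inference steps with XLA (Accelerated Linear Algebra); model.compile() accepts a jit_compile argument that defaults to "auto", and setting it to True requests compilation explicitly. On the PyTorch backend, jit_compile=True uses torch.compile instead of XLA. Compilation can substantially improve execution speed and help you get the most out of the available hardware, although the gain depends on the model and device.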
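A minimal sketch, assuming Keras 3: it requests XLA explicitly on JAX and TensorFlow and leaves the PyTorch backend on the default "auto", since there True would mean torch.compile. The tiny model and random data are placeholders.

```python
import numpy as np
import keras

backend_name = keras.backend.backend()
# Explicit XLA on JAX/TensorFlow; keep the default elsewhere (assumption for this sketch)
jit = True if backend_name in ("jax", "tensorflow") else "auto"

model = keras.Sequential([
    keras.Input(shape=(16,)),
    keras.layers.Dense(32, activation="relu"),
    keras.layers.Dense(1),
])
model.compile(optimizer="adam", loss="mse", jit_compile=jit)

x = np.random.random((256, 16)).astype("float32")
y = np.random.random((256, 1)).astype("float32")
history = model.fit(x, y, epochs=2, batch_size=32, verbose=0)
print(backend_name, jit, history.history["loss"])
```

The first epoch includes compilation time, so speedups are best measured over later epochs.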

Security is another critical aspect. As pre-trained models are shared more widely, serialized model files have become a potential vector for malicious code, so always load models from trusted sources. Linters such as Ruff and SonarLint can be integrated into your CI/CD pipeline to flag potential vulnerabilities, and Ruff's formatter can enforce Black-compatible code style.

Testing and Validation

For robust applications, especially in automation or critical infrastructure, unit testing your model components is non-negotiable. pytest combined with NumPy's testing utilities works well for tensor validation. Here is how you might structure a test for the custom layer created earlier.

```python
import numpy as np
from keras import ops

# AgnosticRMSNorm is the layer from Section 2; define or import it in this module before running.


def test_agnostic_rms_norm():
    # Initialize layer
    layer = AgnosticRMSNorm()

    # Create deterministic input
    input_data = np.array([[1.0, 1.0, 1.0]], dtype="float32")

    # Run forward pass
    output = layer(input_data)

    # Check output shape
    assert tuple(output.shape) == (1, 3)

    # The RMS of [1, 1, 1] is 1, so the output should be close to the input.
    # ops.convert_to_numpy gives a backend-independent array for the assertion.
    np.testing.assert_allclose(ops.convert_to_numpy(output), input_data, rtol=1e-5)


if __name__ == "__main__":
    test_agnostic_rms_norm()
    print("Test passed!")
```

Because the test uses only NumPy and keras.ops, the same file can be run under JAX, TensorFlow, or PyTorch by changing KERAS_BACKEND, which verifies that the layer behaves consistently on each backend. For end-to-end tests of web applications that call a Keras model for inference, browser automation tools such as Selenium or Playwright can complement these unit tests.
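Since the backend is fixed once Keras is imported, covering all three backends means running the suite in separate processes. This is a minimal sketch: run_per_backend is a hypothetical helper that launches a command once per backend in a fresh interpreter with KERAS_BACKEND set, and the tests/ path in the usage note is illustrative.

```python
import os
import subprocess
import sys

BACKENDS = ("jax", "tensorflow", "torch")


def run_per_backend(command, backends=BACKENDS):
    """Run `command` once per backend; return {backend: (exit_code, stdout)}."""
    results = {}
    for backend in backends:
        # Each run gets a fresh process, so Keras picks up the new backend on import
        env = dict(os.environ, KERAS_BACKEND=backend)
        proc = subprocess.run(command, env=env, capture_output=True, text=True)
        results[backend] = (proc.returncode, proc.stdout)
    return results
```

For example, run_per_backend([sys.executable, "-m", "pytest", "-q", "tests/"]) reports one exit code per backend; a non-zero code points to a backend-specific failure.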

Conclusion

The updates to Keras represent a maturity milestone for the Python deep learning ecosystem. By breaking the dependency on a single backend, Keras gives users access to both the high-performance compilation of JAX and the research flexibility of PyTorch. Whether you are building AI interfaces with Reflex, data-visualization dashboards with Flet, or algorithmic trading systems, the new Keras offers a unified and efficient API.

As the ecosystem continues to evolve, with PyArrow for data interchange and Taipy for front-end generation, mastering the multi-backend Keras workflow will be an essential skill. Free-threaded Python and backend-agnostic deep learning frameworks together point to a new era of performance and developer productivity. Now is the time to migrate legacy tf.keras codebases and embrace the flexibility of Keras 3.