Python Data Pipeline Automation – Part 5

Welcome to the fifth installment of our comprehensive series on Python data pipeline automation. In the previous parts, we laid the groundwork by exploring the fundamentals of data extraction, basic transformations, and workflow orchestration. Now, we elevate our discussion from functional scripts to truly production-grade, resilient, and observable data systems. This guide is designed to equip you with the advanced techniques and practical implementations necessary to automate your data processing workflows with confidence.

In the world of production data engineering, a pipeline that simply “runs” is not enough. A robust pipeline must be reliable, transparent, and capable of handling the inevitable chaos of real-world data and infrastructure. This article delves into the critical pillars that support such systems: sophisticated ETL (Extract, Transform, Load) patterns, rigorous data validation, intelligent error handling, and comprehensive monitoring. We will move beyond simple `try/except` blocks and into strategies like automatic retries and dead-letter queues. We’ll replace manual data checks with automated validation frameworks. By the end of this deep dive, you’ll understand how to build Python data pipelines that not only process data correctly but also maintain their integrity and performance under pressure, ensuring the data delivered is timely, accurate, and trustworthy.

The Anatomy of a Production-Ready Python Data Pipeline

A production-grade data pipeline is far more than a linear script that executes from top to bottom. It’s a well-architected system composed of distinct, interacting components, each with a specific responsibility. Understanding this anatomy is the first step toward building resilient and maintainable workflows. These core components work in concert to ensure data flows smoothly, errors are managed gracefully, and the entire process is observable.

Extract, Transform, Load (ETL): The Core Engine

While ETL is a fundamental concept, its implementation in a production environment is significantly more complex than in a simple script. Each stage demands careful consideration for scalability, efficiency, and reliability.

- Extract: In production, data sources are rarely a single, clean CSV file. Extraction involves connecting to a variety of sources, such as transactional databases (like PostgreSQL or MySQL), streaming platforms (Apache Kafka, AWS Kinesis), third-party APIs with rate limits, or large-scale storage systems like data lakes (Amazon S3, Google Cloud Storage). The extraction logic must handle authentication, pagination, network latency, and source schema changes gracefully.

- Transform: This is where the primary business logic resides. Production transformations go beyond simple filtering or column renaming. They often involve complex operations like joining disparate datasets, enriching data with external lookups, performing time-series analysis with window functions, or applying machine learning models for inference. Efficiency is paramount here; using vectorized operations with libraries like Pandas or, for larger-than-memory datasets, leveraging the power of Polars or Dask is critical.

- Load: The final stage involves loading the processed data into a target system. This could be a data warehouse (Snowflake, Google BigQuery), a NoSQL database, or another storage system. Production loading requires strategies for handling duplicates and updates. This is often achieved through “upsert” (update or insert) logic and designing idempotent load tasks, ensuring that re-running a failed load operation doesn’t create duplicate records or corrupt the target system.

Data Validation: The Gatekeeper of Quality

The principle of “Garbage In, Garbage Out” (GIGO) is the bane of data teams. A pipeline that processes faulty data is not just useless; it’s dangerous, as it can lead to flawed business decisions. Data validation acts as the gatekeeper, ensuring that data meets expected quality standards at various stages of the pipeline. Effective validation checks for:

- Schema Conformance: Are all the required columns present? Are their data types correct (e.g., `int`, `string`, `datetime`)?

- Data Integrity: Are there unexpected `NULL` values in critical columns? Are unique keys actually unique? Do foreign keys correctly reference existing records?

- Value Constraints: Do numerical values fall within an expected range (e.g., age between 0 and 120)? Do categorical string values belong to a predefined set (e.g., status in `[“pending”, “approved”, “rejected”]`)?

Automating these checks with dedicated libraries prevents bad data from propagating downstream, saving countless hours of debugging and data restatement.

Error Handling and Monitoring: The Safety Net and Watchtower

In a production environment, failures are not an “if” but a “when.” A pipeline will eventually encounter network timeouts, API outages, malformed data, or resource constraints. A robust pipeline anticipates these failures and handles them intelligently. This involves moving beyond a simple `print(“Error!”)` to a sophisticated strategy that includes automatic retries for transient issues, quarantining unprocessable data in a “dead-letter queue” for later analysis, and sending clear, actionable alerts to the engineering team. Closely tied to this is monitoring. You cannot manage what you cannot see. Monitoring provides the necessary visibility into the pipeline’s health through structured logging, performance metrics (e.g., execution time, records processed), and dashboards, allowing you to proactively identify bottlenecks and anomalies before they become critical failures.

Advanced ETL and Data Validation in Practice

Let’s move from theory to practical application. Building a robust pipeline requires writing code that is not only correct but also defensive and explicit about its expectations. Here, we’ll explore how to implement a more complex transformation and then enforce data quality using a powerful Python library.

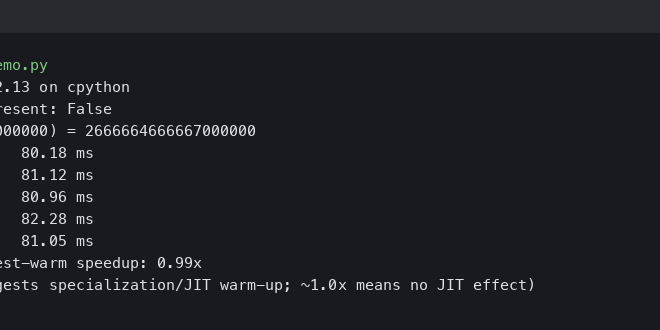

Implementing Complex Transformations with Polars

While Pandas is a fantastic tool, the Python data ecosystem is always evolving. For performance-critical applications or datasets that are pushing the limits of your machine’s memory, Polars is an excellent, high-performance alternative written in Rust. It leverages all available CPU cores and uses a lazy evaluation engine to optimize queries. Let’s consider a scenario where we need to process user session data to calculate the duration of each session and identify the primary action taken.

Imagine we have a DataFrame of user events, sorted by user and timestamp:

import polars as pl

from datetime import datetime

# Sample data representing user events

data = {

'user_id': [1, 1, 1, 2, 2, 1],

'event_timestamp': [

datetime(2023, 10, 26, 10, 0, 0),

datetime(2023, 10, 26, 10, 2, 30),

datetime(2023, 10, 26, 10, 5, 0),

datetime(2023, 10, 26, 11, 0, 0),

datetime(2023, 10, 26, 11, 3, 0),

datetime(2023, 10, 26, 12, 0, 0), # New session for user 1

],

'event_type': ['login', 'view_page', 'add_to_cart', 'login', 'view_page', 'login']

}

events_df = pl.DataFrame(data)

# A session is defined as a series of events by a user

# where the time between consecutive events is less than 30 minutes.

SESSION_TIMEOUT_MINUTES = 30

# Use window functions to calculate time difference and identify session starts

sessions_df = (

events_df

.sort('user_id', 'event_timestamp')

.with_columns(

# Calculate time difference from the PREVIOUS event for the same user

time_diff_seconds = pl.col('event_timestamp')

.diff()

.over('user_id')

.dt.total_seconds()

.fill_null(0) # The first event for a user has no preceding event

)

.with_columns(

# A new session starts if the time diff is > timeout or it's the first event

is_new_session = (pl.col('time_diff_seconds') > (SESSION_TIMEOUT_MINUTES * 60)) | (pl.col('time_diff_seconds') == 0)

)

.with_columns(

# Create a session ID by doing a cumulative sum over the is_new_session flag

session_id_group = pl.col('is_new_session').cumsum().over('user_id')

)

.with_columns(

# Create a globally unique session ID

session_id = pl.concat_str([pl.col('user_id'), pl.col('session_id_group')], separator='-')

)

)

print(sessions_df)

This example demonstrates the power of window functions (`.over()`) in Polars to perform complex stateful analysis—something that is crucial for many real-world data transformations.

Automating Data Validation with `pandera`

Once data is transformed, how do we ensure its quality before loading it? Manually writing dozens of `assert` statements is brittle and hard to maintain. This is where a data validation framework like `pandera` becomes invaluable. It allows you to define a declarative, readable schema for your DataFrames.

Let’s define a schema for our processed `sessions_df` data (we’ll use the pandas equivalent for this example, as `pandera`’s primary integration is with pandas).

import pandas as pd

import pandera as pa

from pandera.errors import SchemaError

# Assume 'sessions_df' is now a pandas DataFrame with the same logic

# sessions_df = sessions_df.to_pandas()

# Define a pandera schema to validate the output

schema = pa.DataFrameSchema({

"user_id": pa.Column(int, pa.Check.gt(0), description="User ID must be a positive integer"),

"event_timestamp": pa.Column(pd.Timestamp, nullable=False, description="Timestamp of the event"),

"event_type": pa.Column(str, pa.Check.isin(["login", "view_page", "add_to_cart", "purchase"])),

"session_id": pa.Column(str, pa.Check.str_startswith("1-") | pa.Check.str_startswith("2-"), nullable=False),

"is_new_session": pa.Column(bool),

})

# Let's create a dataframe with bad data to see validation fail

bad_data = sessions_df.to_pandas().copy()

bad_data.loc[1, 'user_id'] = -50 # Invalid user ID

bad_data.loc[2, 'event_type'] = 'browse_item' # Not in the allowed set

try:

# The validate() method checks the DataFrame against the schema

validated_df = schema.validate(bad_data, lazy=True)

print("Data validation successful!")

except SchemaError as err:

print("Data validation failed!")

# err.failure_cases is a DataFrame detailing the errors

print(err.failure_cases)

By integrating this validation step into your pipeline, you create an automated quality gate. If the incoming data ever deviates from the expected structure or quality, the pipeline fails immediately and provides a detailed report of what went wrong (`err.failure_cases`), preventing corrupted data from reaching your data warehouse and alerting you to the root cause.

Robust Error Handling and Monitoring Strategies

A pipeline that runs successfully in a developer’s pristine environment can quickly crumble under the pressures of production. Network glitches, API rate limits, and corrupted data are daily realities. Building robust error handling and monitoring is not a luxury; it is a core requirement for a reliable data platform.

Building a Resilient Error Handling System

A resilient system anticipates failures and has a clear plan for how to respond. This means distinguishing between different types of errors and applying the appropriate strategy.

Automatic Retries with Exponential Backoff

Many failures are transient. A database might be temporarily overloaded, or a network connection might drop for a second. For these issues, an immediate failure is premature. The best approach is to retry the operation. However, retrying immediately can make the problem worse (a “thundering herd” problem). The solution is **exponential backoff**: retrying after a short delay, and then doubling the delay after each subsequent failure.

Here’s a Python decorator that implements this logic:

import time

import random

import logging

def retry_with_exponential_backoff(retries=5, initial_delay=1, backoff_factor=2):

"""

A decorator to retry a function with exponential backoff.

"""

def decorator(func):

def wrapper(*args, **kwargs):

attempts = 0

delay = initial_delay

while attempts < retries:

try:

return func(*args, **kwargs)

except Exception as e:

attempts += 1

if attempts >= retries:

logging.error(f"Function {func.__name__} failed after {retries} retries. Error: {e}")

raise

logging.warning(f"Attempt {attempts}/{retries} failed for {func.__name__}. Retrying in {delay:.2f} seconds. Error: {e}")

time.sleep(delay)

delay = (delay * backoff_factor) + (random.uniform(0, 1)) # Add jitter

return wrapper

return decorator

@retry_with_exponential_backoff(retries=3, initial_delay=2)

def fetch_data_from_flaky_api(api_url: str):

# This function simulates fetching data from an API that might fail

print("Attempting to fetch data...")

if random.random() < 0.7: # 70% chance of failure

raise ConnectionError("API is unavailable")

print("Successfully fetched data!")

return {"data": "some important payload"}

# Example usage:

fetch_data_from_flaky_api("http://example.com/api/data")

Dead-Letter Queues (DLQ) for Unrecoverable Data

Some data is fundamentally broken—a JSON record with a missing closing bracket, or a row that fails a critical validation rule. Retrying will never fix this. Instead of failing the entire pipeline run, which might block thousands of other valid records, we can isolate the problematic data. A **Dead-Letter Queue (DLQ)** is a destination for these unprocessable records. It could be a separate S3 bucket, a database table, or a message queue topic. This strategy allows the main pipeline to continue while preserving the failed data for manual inspection and potential reprocessing later.

Conceptual logic within a processing loop:

def process_records(records: list):

for record in records:

try:

# 1. Validate the record

validated_record = validate(record)

# 2. Transform the record

transformed_record = transform(validated_record)

# 3. Load the record

load_to_warehouse(transformed_record)

except Exception as e:

# If any step fails, send to DLQ

logging.error(f"Failed to process record: {record}. Error: {e}")

send_to_dlq({"record": record, "error": str(e)})

Comprehensive Monitoring and Logging

If a pipeline fails in the middle of the night, you need to know why, and you need the information to be clear and searchable. This is where structured logging and metrics come in.

Structured Logging for Better Analysis

Instead of logging plain text strings, structured logs (usually in JSON format) emit log records with key-value pairs. This makes them machine-readable and easy to ingest, search, and analyze in tools like Datadog, Splunk, or the ELK Stack.

import logging

import sys

from pythonjsonlogger import jsonlogger

# Configure a logger to output JSON

log = logging.getLogger()

log.setLevel(logging.INFO)

handler = logging.StreamHandler(sys.stdout)

formatter = jsonlogger.JsonFormatter('%(asctime)s %(name)s %(levelname)s %(message)s')

handler.setFormatter(formatter)

log.addHandler(handler)

# Example of structured logging in a pipeline task

def process_data(batch_id: str, record_count: int):

log.info(

"Starting data processing batch.",

extra={'batch_id': batch_id, 'record_count': record_count}

)

# ... processing logic ...

log.info(

"Finished data processing batch.",

extra={'batch_id': batch_id, 'status': 'success'}

)

process_data("batch-abc-123", 5000)

# Output will be a JSON object:

# {"asctime": "...", "name": "root", "levelname": "INFO", "message": "Starting data processing batch.", "batch_id": "batch-abc-123", "record_count": 5000}

Choosing the Right Tools and Best Practices

The Python ecosystem offers a rich set of tools for building data pipelines. Choosing the right ones depends on your team’s expertise, the scale of your data, and your operational requirements. Along with tools, adhering to software engineering best practices is non-negotiable for building maintainable systems.

The Python Data Ecosystem: Tools of the Trade

- Orchestrators: These tools manage the scheduling, execution, and dependency management of your pipeline tasks (DAGs – Directed Acyclic Graphs).

- Apache Airflow: The long-standing industry standard. It’s incredibly powerful and extensible but can have a steeper learning curve and more operational overhead.

- Prefect: Known for its developer-friendly API and dynamic, code-first approach. It simplifies the creation of complex, conditional workflows.

- Dagster: A data-aware orchestrator that emphasizes data assets—the actual tables and files your pipeline produces. It has excellent built-in features for data quality, cataloging, and observability.

- Data Processing: The libraries that do the heavy lifting of transformation.

- Pandas: The default choice for in-memory data manipulation. Perfect for datasets that comfortably fit on a single machine.

- Polars: A high-performance, Rust-based DataFrame library that is quickly gaining popularity. It’s a great choice when Pandas starts to feel slow.

- PySpark: The Python API for Apache Spark. This is the tool for “big data” that requires a distributed computing cluster to process.

Production Pipeline Best Practices

Regardless of the tools you choose, these principles are universal for building high-quality data pipelines:

- Idempotency: Design your tasks so that running them multiple times with the same input produces the exact same result. This is crucial for safely re-running failed pipelines. For example, instead of `INSERT`, use `INSERT…ON CONFLICT UPDATE` or load data to a staging table and then perform a `MERGE` operation.

- Configuration Management: Never hardcode credentials, file paths, or server names in your code. Use environment variables or dedicated configuration files (`.ini`, `.yaml`). This makes your pipeline portable across different environments (dev, staging, prod).

- Dependency Management: Pin your Python dependencies using a `requirements.txt` or, even better, a `pyproject.toml` file with a lock file (like `poetry.lock`). This ensures that your pipeline runs with the exact same library versions everywhere, avoiding the “it works on my machine” problem.

- Comprehensive Testing: Your pipeline code is software. It needs tests. Write unit tests for your transformation logic (e.g., does your sessionization function handle edge cases correctly?) and integration tests to ensure the different components of your pipeline work together as expected.

- CI/CD Integration: Automate the testing and deployment of your pipeline code using a CI/CD system like GitHub Actions or Jenkins. Every code change should automatically trigger tests, and merging to the main branch should deploy the new pipeline version. Staying up-to-date with the latest **python news** and CI/CD practices is essential for maintaining a modern data stack.

Conclusion

Transitioning a Python data script into a production-grade automated pipeline is a significant leap, but one that is essential for any data-driven organization. As we’ve explored, this evolution hinges on a holistic approach that extends far beyond the core transformation logic. By systematically implementing rigorous data validation, building resilient and intelligent error-handling mechanisms, and ensuring comprehensive observability through monitoring and structured logging, you create a system that is not just functional but also trustworthy and maintainable.

The principles of idempotency, configuration management, and automated testing are the software engineering bedrock that supports these systems. By embracing these advanced techniques and best practices, you empower your team to deliver high-quality, reliable data with confidence, transforming your data pipelines from brittle scripts into robust, automated assets that drive real business value. The journey to mastering data pipeline automation is continuous, and these pillars will serve as your guide to building systems that stand the test of time and scale.