Machine Learning Model Deployment with Python

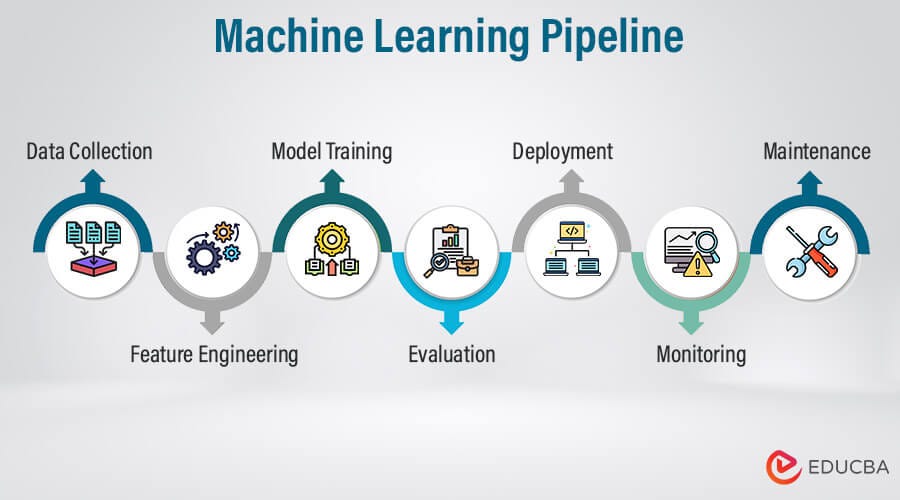

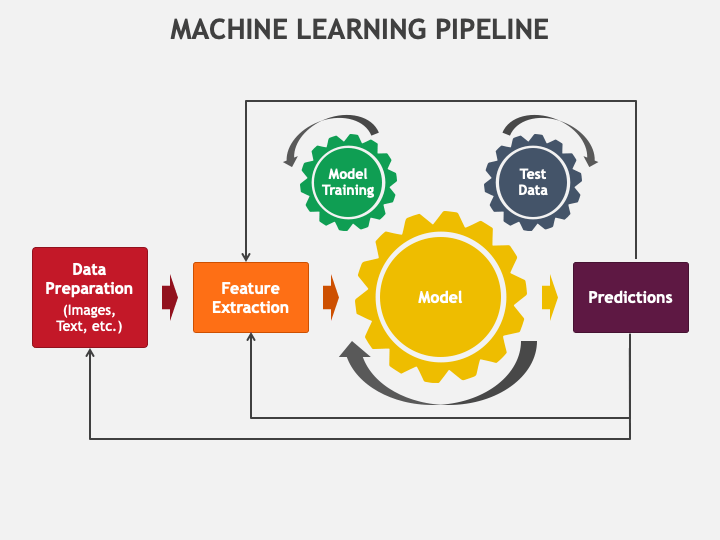

Creating a machine learning model that performs well on a validation dataset is a significant achievement, but it’s only half the battle. The true value of a model is unlocked only when it is successfully deployed into a production environment, where it can make predictions on new, real-world data and drive business decisions. This process, known as model deployment, is often the most challenging and overlooked phase of the machine learning lifecycle. It transforms a static, trained artifact from a data scientist’s notebook into a dynamic, reliable, and scalable service.

This guide provides a step-by-step walkthrough of deploying machine learning models using Python, the lingua franca of data science. We will cover the whole journey: preparing your model for production, serving it via a web API, containerizing it for consistency, and implementing strategies for monitoring and scaling. Whether you’re a data scientist looking to productionize your first model or an engineer tasked with building ML infrastructure, this article covers the foundational knowledge and practical techniques needed to bridge the gap between research and real-world application, along with the best practices and tools that are shaping the modern MLOps landscape.

Understanding the Foundations of ML Deployment

Before diving into code and tools, it’s crucial to understand what deployment entails and why it presents a unique set of challenges. At its core, deployment is an engineering discipline that requires a different mindset than model training. It’s about building robust, efficient, and maintainable systems.

What is Model Deployment?

Model deployment is the process of integrating a machine learning model into an existing production environment to make its predictions available to users or other systems. This environment could be a web application, a mobile app, an internal dashboard, or part of a larger data processing pipeline. The goal is to create a seamless and reliable pathway for live data to be fed into the model and for the model’s output (the prediction) to be returned in a useful and timely manner.

There are several common deployment patterns:

- Real-time (Online) Inference: The model is exposed as an API endpoint. Applications send a request with a single data point (or a small batch) and receive a prediction in real-time. This is used for applications like fraud detection, product recommendations, and dynamic pricing.

- Batch (Offline) Prediction: The model runs on a large collection of data at a scheduled interval (e.g., hourly or daily). The predictions are then stored in a database for later use. This is common for tasks like customer segmentation, churn prediction, and sales forecasting.

- Edge Deployment: The model is deployed directly onto a user’s device, such as a smartphone or an IoT sensor. This reduces latency and allows for offline functionality but presents challenges in model size and updates.

This guide focuses primarily on the server-side patterns of real-time and batch deployment.
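As a rough illustration of the batch pattern, the sketch below scores records in fixed-size chunks. The names `score_in_batches`, `predict_fn`, and `toy_predict` are hypothetical and not part of any library; `predict_fn` stands in for a real model's `predict` method.

```python
from itertools import islice
from typing import Callable, Iterable, Iterator, List, Sequence


def score_in_batches(
    rows: Iterable[Sequence[float]],
    predict_fn: Callable[[List[Sequence[float]]], List],
    batch_size: int = 1000,
) -> Iterator:
    """Yield one prediction per input row, scoring batch_size rows at a time."""
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    iterator = iter(rows)
    while True:
        batch = list(islice(iterator, batch_size))
        if not batch:
            break
        # A real job would call model.predict(batch) here and write the
        # results to a database or file instead of yielding them.
        yield from predict_fn(batch)


# Stand-in for a trained model (assumption): labels a row by its first feature.
def toy_predict(batch):
    return ["large" if row[0] > 5.0 else "small" for row in batch]


predictions = list(
    score_in_batches([[4.9], [6.1], [5.8]], toy_predict, batch_size=2)
)
print(predictions)
```

Chunking keeps memory usage bounded regardless of how many rows the scheduled job has to process.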

Why is Deployment So Challenging?

The transition from a development environment (like a Jupyter notebook) to a production server is fraught with potential issues. The “it works on my machine” problem is especially prevalent in machine learning.

- Environment Inconsistency: A data scientist’s laptop may run a different operating system, different library versions (e.g., scikit-learn, TensorFlow), and a different Python version than the production server. These discrepancies can cause the model to fail or produce different results.

- Dependency Management: ML projects often have a complex web of dependencies. Ensuring that the exact versions of all required packages are installed in production is critical for reproducibility.

- Scalability and Performance: A model must respond quickly (low latency) and handle a high volume of requests (high throughput). Code that is acceptable for training may be too slow for real-time inference.

- Robustness and Monitoring: Production systems must be resilient. What happens if the model receives unexpected data? How do you know if the model’s performance is degrading over time? Without proper logging, monitoring, and error handling, a deployed model is a black box waiting to fail.
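One way to approach the "unexpected data" question is to check every request against plausible ranges before it reaches the model, and to log anything that is rejected. The following is a minimal sketch: the feature names and ranges in `FEATURE_RANGES` are invented for illustration and would normally be derived from the training data.

```python
import logging
import math
from typing import Dict, List, Tuple

logger = logging.getLogger("prediction_service")

# Hypothetical features and plausible ranges (assumptions for illustration).
FEATURE_RANGES: Dict[str, Tuple[float, float]] = {
    "amount": (0.0, 1_000_000.0),
    "account_age_days": (0.0, 36_500.0),
}


def validate_features(features: Dict[str, object]) -> List[str]:
    """Return a list of problems; an empty list means the input looks usable."""
    problems = []
    for name, (low, high) in FEATURE_RANGES.items():
        value = features.get(name)
        if value is None:
            problems.append(f"{name}: missing")
        elif (
            isinstance(value, bool)
            or not isinstance(value, (int, float))
            or not math.isfinite(value)
        ):
            problems.append(f"{name}: not a finite number")
        elif not low <= value <= high:
            problems.append(f"{name}: {value} outside [{low}, {high}]")
    if problems:
        # Logging rejected inputs makes silent failures visible later.
        logger.warning("Rejected input: %s", "; ".join(problems))
    return problems


print(validate_features({"amount": 25.0, "account_age_days": 400}))
print(validate_features({"amount": float("nan")}))
```

Returning a list of problems instead of raising on the first one lets the service report every issue to the caller in a single response.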

The Core Deployment Workflow: A Step-by-Step Guide

Let’s walk through the practical steps of taking a trained model and turning it into a deployable web service. We’ll use a simple scikit-learn model as an example, but the principles apply to models from any framework.

Step 1: Model Serialization

First, you need to save your trained model to a file. This process is called serialization. It converts your Python model object into a format that can be stored on disk and later reloaded into memory without needing to be retrained.

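For scikit-learn and other non-deep-learning models, the most common libraries for this are pickle and joblib. joblib is often preferred as it is more efficient for objects that contain large NumPy arrays, which are common in scikit-learn. Both formats can execute arbitrary code when a file is loaded, so only load model files from sources you trust, and load them with the same scikit-learn version that was used for training.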

```python
# In your training script (e.g., train.py)
import joblib
import pandas as pd
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import train_test_split

# 1. Load your data
df = pd.read_csv('iris.csv')
# Convert the features to a NumPy array so the model is fitted without column
# names, matching the plain arrays the prediction service passes in later.
X = df[['sepal.length', 'sepal.width', 'petal.length', 'petal.width']].to_numpy()
y = df['variety']

# 2. Split data and train a model
X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.3, random_state=42)
model = RandomForestClassifier(n_estimators=100, random_state=42)
model.fit(X_train, y_train)
print(f"Model Accuracy: {model.score(X_test, y_test):.3f}")

# 3. Serialize and save the trained model
joblib.dump(model, 'model.pkl')
print("Model saved to model.pkl")
```
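Because pickle-based files run code when they are loaded, it can help to confirm that an artifact is the one you produced before deserializing it. The sketch below records a SHA-256 digest and checks it at load time. It uses the standard-library pickle module and a stand-in dictionary so that it runs on its own; `sha256_of_file` and `load_verified` are hypothetical helper names, and `joblib.load` could be substituted for `pickle.load`.

```python
import hashlib
import pickle
import tempfile
from pathlib import Path


def sha256_of_file(path: Path) -> str:
    """Hash the file in 1 MiB chunks so large models need little memory."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(1 << 20), b""):
            digest.update(chunk)
    return digest.hexdigest()


def load_verified(path: Path, expected_sha256: str):
    """Deserialize the artifact only if its digest matches the recorded one."""
    actual = sha256_of_file(path)
    if actual != expected_sha256:
        raise ValueError(f"Checksum mismatch for {path}: got {actual}")
    with path.open("rb") as handle:
        return pickle.load(handle)


# Round trip with a stand-in object; a real pipeline would store the digest
# next to the artifact at training time and read it back at deployment time.
with tempfile.TemporaryDirectory() as tmp:
    artifact = Path(tmp) / "model.pkl"
    artifact.write_bytes(pickle.dumps({"weights": [0.1, 0.2]}))
    digest = sha256_of_file(artifact)
    restored = load_verified(artifact, digest)

print(restored)
```

A checksum only detects accidental corruption or an unexpected file swap; it does not make an untrusted pickle safe to load.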

Step 2: Building a Prediction Service with FastAPI

Next, we need to wrap our model in a web service that can accept HTTP requests. While Flask is a popular choice, FastAPI has become a widely used option for building high-performance APIs in Python. It offers automatic data validation and interactive API documentation (via Swagger UI), and it is built on modern Python features like type hints and async/await.

Create a file named main.py:

```python
# In your API script (e.g., main.py)
from fastapi import FastAPI
from pydantic import BaseModel
import joblib
import numpy as np

# 1. Initialize the FastAPI app
app = FastAPI(title="Iris Species Predictor API")

# 2. Load the serialized model once at startup, not on every request
model = joblib.load('model.pkl')


# 3. Define the input data model using Pydantic
# This provides data validation and type hints
class IrisFeatures(BaseModel):
    sepal_length: float
    sepal_width: float
    petal_length: float
    petal_width: float


# 4. Create the prediction endpoint
@app.post("/predict")
def predict_species(features: IrisFeatures):
    """
    Accepts iris features and returns the predicted species.
    """
    # Convert input data to a NumPy array (one row, four columns) for the model
    data = np.array([[
        features.sepal_length,
        features.sepal_width,
        features.petal_length,
        features.petal_width
    ]])

    # Make a prediction
    prediction = model.predict(data)
    probability = model.predict_proba(data).max()

    # Cast NumPy types to built-in types so they serialize cleanly to JSON
    return {
        "predicted_species": str(prediction[0]),
        "confidence_score": float(probability)
    }


@app.get("/")
def read_root():
    return {"message": "Welcome to the Iris Predictor API"}
```

To run this service locally, you’ll need an ASGI server like Uvicorn: uvicorn main:app --reload. Now you can access interactive documentation at http://127.0.0.1:8000/docs.
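To show what a caller of the /predict endpoint might look like, here is a small client sketch that uses only the standard library. The helper names `build_predict_request` and `predict` are hypothetical, and the URL assumes the Uvicorn defaults mentioned above.

```python
import json
import urllib.request


def build_predict_request(base_url: str, features: dict) -> urllib.request.Request:
    """Prepare a JSON POST request for the /predict endpoint."""
    return urllib.request.Request(
        url=f"{base_url.rstrip('/')}/predict",
        data=json.dumps(features).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def predict(base_url: str, features: dict, timeout: float = 5.0) -> dict:
    """Send the request and decode the JSON response body."""
    request = build_predict_request(base_url, features)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.load(response)


sample = {
    "sepal_length": 5.1,
    "sepal_width": 3.5,
    "petal_length": 1.4,
    "petal_width": 0.2,
}
request = build_predict_request("http://127.0.0.1:8000", sample)
print(request.get_method(), request.full_url)

# With the server running, this would return the prediction as a dict:
# print(predict("http://127.0.0.1:8000", sample))
```

The field names in `sample` must match the `IrisFeatures` model exactly; a request with a missing or non-numeric field is rejected by FastAPI with a 422 validation error before the model is called.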

Step 3: Managing Dependencies

To ensure our application runs correctly in any environment, we must explicitly define its dependencies. This is typically done with a requirements.txt file.

You can generate this file using pip. Run it inside a clean virtual environment so that only this project’s packages are captured:

```bash
pip freeze > requirements.txt
```

Your requirements.txt file should look something like this:

```text
fastapi==0.85.0
uvicorn==0.18.3
scikit-learn==1.1.2
numpy==1.23.3
joblib==1.2.0
pydantic==1.10.2
```

Pinning specific versions is a best practice to prevent future updates from breaking your application.
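A pinning policy is easier to keep if it is checked automatically. The sketch below flags requirement lines that are not pinned with `==`. It is deliberately simplified: `find_unpinned` is a hypothetical helper, and the pattern ignores environment markers, URLs, and other requirement syntax that pip accepts.

```python
import re
from typing import Iterable, List

# Matches "name==version", optionally with extras such as "uvicorn[standard]".
PINNED = re.compile(r"^[A-Za-z0-9][A-Za-z0-9._-]*(\[[^\]]+\])?==[^=\s]+$")


def find_unpinned(lines: Iterable[str]) -> List[str]:
    """Return the requirement lines that lack an exact '==' version pin."""
    unpinned = []
    for raw in lines:
        line = raw.split("#", 1)[0].strip()  # drop trailing comments
        if not line or line.startswith("-"):
            continue  # skip blanks, comments, and pip options such as -r
        if not PINNED.match(line):
            unpinned.append(line)
    return unpinned


requirements = [
    "fastapi==0.85.0",
    "uvicorn>=0.18",
    "numpy",
    "joblib==1.2.0  # pinned",
]
loose = find_unpinned(requirements)
print(loose)
```

A check like this could run as a test in the repository so that an unpinned dependency fails the build instead of surfacing in production.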

Containerization with Docker: Ensuring Consistency

We’ve built a service, but how do we package it to run reliably anywhere? The answer is containerization. Docker is the industry-standard tool for this. It allows you to package your application, its dependencies, libraries, and configuration files into a single, isolated unit called a container. This goes a long way toward solving the “it works on my machine” problem, because the same image runs in development, testing, and production.

Creating a Dockerfile

A Dockerfile is a text file that contains the instructions for building a Docker image. It specifies the base image, sets up the environment, copies your code, and defines the command to run the application.

Create a file named Dockerfile in your project root:

```dockerfile
# 1. Use an official Python runtime as a parent image
# Using a 'slim' version reduces the final image size
FROM python:3.9-slim

# 2. Set the working directory inside the container
WORKDIR /app

# 3. Copy the dependencies file to the working directory
# This step is done separately to leverage Docker's layer caching
COPY requirements.txt .

# 4. Install the dependencies
RUN pip install --no-cache-dir -r requirements.txt

# 5. Copy the rest of your application code into the container
COPY . .

# 6. Expose the port the app runs on
EXPOSE 8000

# 7. Define the command to run your application
# Uvicorn is run with host 0.0.0.0 to be accessible from outside the container
CMD ["uvicorn", "main:app", "--host", "0.0.0.0", "--port", "8000"]
```

With this file, you can build and run your container:

```bash
# Build the Docker image
docker build -t iris-predictor-api .

# Run the Docker container
docker run -p 8000:8000 iris-predictor-api
```

Your API is now running inside a container, completely isolated from your host system, and can be deployed on any machine or cloud provider that supports Docker.
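A freshly started container may need a moment before the server accepts connections, so deployment scripts often poll until the service responds. The sketch below is one possible way to do that; `wait_until_ready` and `http_probe` are hypothetical helpers, and the URL assumes the port mapping used above.

```python
import time
import urllib.request
from typing import Callable


def wait_until_ready(
    probe: Callable[[], bool], attempts: int = 10, delay_seconds: float = 1.0
) -> bool:
    """Call probe() until it returns True or the attempts run out."""
    for attempt in range(attempts):
        try:
            if probe():
                return True
        except OSError:
            # Connection errors (including urllib's URLError) are expected
            # while the server inside the container is still starting.
            pass
        if attempt < attempts - 1:
            time.sleep(delay_seconds)
    return False


def http_probe(url: str = "http://127.0.0.1:8000/") -> bool:
    """Return True if the root endpoint answers with HTTP 200."""
    with urllib.request.urlopen(url, timeout=2) as response:
        return response.status == 200


# With the container running:
# if not wait_until_ready(http_probe):
#     raise SystemExit("API did not become ready in time")
```

Passing the probe in as a function keeps the retry logic independent of HTTP, so the same loop can wait on a database or a message queue.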

Beyond Deployment: MLOps, Monitoring, and Scaling

Getting your model into a containerized service is a major milestone, but the work of a production ML system is never truly done. This is where the principles of MLOps (Machine Learning Operations) come into play. MLOps is a set of practices that aims to deploy and maintain ML models in production reliably and efficiently.

Monitoring for Model Drift

Once deployed, a model’s performance can degrade over time. This is often due to:

- Data Drift: The statistical properties of the input data change. For example, a fraud detection model trained on pre-pandemic data might perform poorly on post-pandemic transaction patterns.

- Concept Drift: The relationship between the input features and the target variable changes. For example, customer purchasing behavior might change due to a new competitor entering the market.

Effective monitoring involves tracking both operational metrics (latency, CPU/memory usage, error rates) and model-specific metrics (prediction distribution, feature drift). Tools like Prometheus and Grafana are excellent for system metrics, while open-source libraries such as Evidently and whylogs (maintained by WhyLabs) can help detect data and concept drift.
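To give a feel for what a feature-drift metric computes, here is a minimal standard-library sketch of the Population Stability Index (PSI), one commonly used measure. It is an illustration under simple assumptions (equal-width bins taken from the reference data, a small floor to avoid log of zero) and not the method used by any particular monitoring tool.

```python
import math
from typing import List, Sequence


def population_stability_index(
    reference: Sequence[float], current: Sequence[float], bins: int = 10
) -> float:
    """Compare two samples of one feature; 0.0 means identical bin shares."""
    low, high = min(reference), max(reference)
    width = (high - low) / bins or 1.0  # fall back if all values are equal

    def proportions(values: Sequence[float]) -> List[float]:
        counts = [0] * bins
        for value in values:
            index = int((value - low) / width)
            # Values outside the reference range go into the edge bins.
            counts[min(max(index, 0), bins - 1)] += 1
        # A small floor keeps empty bins from producing log(0).
        return [max(count / len(values), 1e-6) for count in counts]

    ref, cur = proportions(reference), proportions(current)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref, cur))


training_values = [i / 100 for i in range(100)]
live_values = [value + 0.5 for value in training_values]  # simulated shift

print(population_stability_index(training_values, training_values))
print(population_stability_index(training_values, live_values))
```

An often-quoted rule of thumb treats values above roughly 0.25 as a significant shift, but suitable thresholds depend on the feature and should be calibrated on your own data.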

CI/CD for Machine Learning

Continuous Integration and Continuous Deployment (CI/CD) pipelines automate the process of testing and deploying new code. In an ML context, this pipeline is extended to include data validation, model retraining, and model evaluation. A typical ML CI/CD pipeline might look like this:

- A data scientist pushes new code or data to a Git repository.

- A CI server (like GitHub Actions or Jenkins) automatically triggers a workflow.

- The workflow runs data validation tests, trains a new model, and evaluates it against the current production model.

- If the new model is better, it is automatically containerized and deployed to a staging environment for final checks before being promoted to production.
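The "is the new model better?" decision in such a pipeline usually comes down to an explicit, testable rule. The sketch below shows one possible gate; `EvaluationResult`, `should_promote`, and the threshold values are all hypothetical and would be chosen per project.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EvaluationResult:
    accuracy: float        # measured on a shared, held-out evaluation set
    p95_latency_ms: float  # 95th percentile inference latency


def should_promote(
    candidate: EvaluationResult,
    production: EvaluationResult,
    min_accuracy_gain: float = 0.005,
    max_latency_ms: float = 100.0,
) -> bool:
    """Promote only if the candidate is clearly better and still fast enough."""
    accurate_enough = candidate.accuracy >= production.accuracy + min_accuracy_gain
    fast_enough = candidate.p95_latency_ms <= max_latency_ms
    return accurate_enough and fast_enough


current_model = EvaluationResult(accuracy=0.93, p95_latency_ms=35.0)
new_model = EvaluationResult(accuracy=0.95, p95_latency_ms=40.0)
print(should_promote(new_model, current_model))
```

Requiring a minimum gain guards against promoting a model whose improvement is within evaluation noise.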

Staying current with MLOps tools and practices is also worthwhile: following Python release notes and community discussions helps you adopt improvements early and avoid deprecated approaches.

Scaling with Kubernetes

While Docker is great for running a single container, what happens when your service needs to handle thousands of requests per second? This is where a container orchestrator like Kubernetes (K8s) becomes essential. Kubernetes automates the deployment, scaling, and management of containerized applications. It can automatically scale the number of running containers up or down based on traffic, restart failed containers (self-healing), and manage rolling updates with zero downtime.
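The core of Kubernetes' Horizontal Pod Autoscaler is a simple ratio: the desired replica count is the current count multiplied by the ratio of the observed metric to its target, rounded up. The sketch below reproduces that calculation in simplified form. The function name, the replica bounds, and the example numbers are illustrative, and the real autoscaler applies additional rules such as stabilization windows.

```python
import math


def desired_replicas(
    current_replicas: int,
    current_metric: float,
    target_metric: float,
    min_replicas: int = 1,
    max_replicas: int = 10,
    tolerance: float = 0.1,
) -> int:
    """Simplified autoscaling rule: ceil(current * observed / target)."""
    if target_metric <= 0:
        raise ValueError("target_metric must be positive")
    ratio = current_metric / target_metric
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas  # close enough to target: do not scale
    desired = math.ceil(current_replicas * ratio)
    return max(min_replicas, min(desired, max_replicas))


# Four replicas at 90% average CPU with a 60% target scale out to six.
print(desired_replicas(4, current_metric=90.0, target_metric=60.0))
```

The tolerance band prevents the replica count from oscillating when the metric hovers near its target.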

Conclusion: From Model to Value

Deploying a machine learning model is a multi-disciplinary endeavor that blends data science with software engineering and DevOps principles. We’ve journeyed from a simple trained model to a robust, containerized web service ready for production. The key takeaway is that deployment is not an afterthought but an integral part of the machine learning lifecycle that requires careful planning and the right set of tools.

By mastering serialization, building APIs with modern frameworks like FastAPI, ensuring reproducibility with Docker, and embracing MLOps practices for monitoring and automation, you can successfully bridge the gap between model development and tangible business value. The ability to reliably and efficiently put models into production is what truly distinguishes a research project from a transformative AI-powered application.