Machine Learning Model Deployment with Python – Part 2

Welcome to the second part of our in-depth series on deploying machine learning models with Python. In Part 1, we covered the foundational steps of saving a trained model and creating a basic API. Now, we venture beyond the prototype stage into the world of production-grade machine learning. The journey from a Jupyter Notebook to a robust, scalable, and reliable service is fraught with challenges, but it’s also where the true value of ML is realized. This guide will equip you with the advanced techniques and practical implementations necessary to navigate this complex landscape.

This article moves past the “hello world” of model deployment and tackles the real-world engineering problems: How do you ensure your model service is always available? How do you update a model without causing downtime? How do you know if your model’s performance is degrading in the wild? We will explore the pillars of modern MLOps, including containerization with Docker, orchestration with Kubernetes, sophisticated deployment strategies like canary releases, and the critical, often-overlooked discipline of production monitoring. By the end, you’ll understand the principles and practices that separate a fragile academic project from a resilient, enterprise-ready ML application.

The Foundation: Containerization and Model Serving

The first step in professionalizing a machine learning service is to extricate it from the developer’s machine. The infamous “it works on my machine” problem is a significant source of deployment failures. Inconsistencies in operating systems, Python versions, and library dependencies can cause a perfectly functional model to fail spectacularly in a production environment. Containerization solves this problem by packaging the application, its dependencies, and its configuration into a single, isolated, and portable unit.

Why Containerize? The Power of Docker

Docker is the de facto standard for containerization. A Docker container wraps your Python application in a complete filesystem that contains everything it needs to run: code, runtime, system tools, and libraries. This creates a predictable and consistent environment, regardless of where the container is deployed—be it a developer’s laptop, a testing server, or a cloud-based production cluster.

Key Benefits of Containerization:

- Consistency: Ensures that development, testing, and production run the same software environment (OS packages, Python version, and libraries), eliminating most environment-specific bugs.

- Portability: A container image can run on any system with a compatible container runtime and CPU architecture, from on-premise servers to any major cloud provider (AWS, GCP, Azure).

- Isolation: Containers run in isolated processes, preventing conflicts between applications and their dependencies.

- Scalability: Lightweight containers can be spun up or down in seconds, making them perfect for scaling services based on demand.

Here is a practical example of a Dockerfile for a machine learning API built with FastAPI:

```dockerfile
# Use an official Python runtime as a parent image
FROM python:3.9-slim

# Set the working directory in the container
WORKDIR /app

# Copy the dependencies file to the working directory
COPY requirements.txt .

# Install any needed packages specified in requirements.txt
RUN pip install --no-cache-dir -r requirements.txt

# Copy the rest of the application's code (e.g. main.py and model.pkl)
COPY . .

# Expose the port the app runs on
EXPOSE 8000

# Command to run the application using uvicorn
CMD ["uvicorn", "main:app", "--host", "0.0.0.0", "--port", "8000"]
```

Choosing Your Serving Framework

While the container handles the environment, a web framework is needed to expose your model's prediction function as an API endpoint. Many options exist, but FastAPI has rapidly become a favorite in the Python community for its performance and developer-friendly features.

- Flask: A veteran micro-framework, Flask is simple, flexible, and has a massive community. It’s a great choice for simple APIs but requires more boilerplate for features like data validation.

- FastAPI: A modern, high-performance framework built on Starlette and Pydantic. Its key advantages are automatic data validation, interactive API documentation (via Swagger UI and ReDoc), and asynchronous support, which lets a single worker handle many concurrent requests while waiting on I/O-bound tasks such as fetching features. Async does not make CPU-bound model inference itself any faster.

Here’s a minimal FastAPI example for serving a scikit-learn model:

```python
import joblib
from fastapi import FastAPI
from pydantic import BaseModel


# Define the input data schema using Pydantic
class ModelInput(BaseModel):
    feature1: float
    feature2: float
    feature3: list[int]


# Initialize the FastAPI app
app = FastAPI(title="ML Model API")

# Load the trained model from disk once, when the module is imported
model = joblib.load("model.pkl")


@app.post("/predict")
def predict(data: ModelInput):
    # A plain `def` endpoint runs in FastAPI's threadpool, so the blocking
    # model.predict() call does not stall the event loop.

    # Convert input data to the format the model expects
    # (This is a simplified example)
    features = [data.feature1, data.feature2] + data.feature3
    prediction = model.predict([features])
    return {"prediction": prediction.tolist()}
```
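To call the endpoint from another service, a client only needs to POST JSON that matches the `ModelInput` schema. The sketch below uses only the standard library; the URL `http://localhost:8000/predict` assumes the container is running locally with port 8000 published, and `build_request` and `predict_remote` are hypothetical helper names, not part of FastAPI.

```python
import json
import urllib.request

# Hypothetical address: assumes the API container is running locally
# with port 8000 published to the host.
API_URL = "http://localhost:8000/predict"


def build_request(feature1: float, feature2: float, feature3: list[int]) -> urllib.request.Request:
    """Build a POST request whose JSON body matches the ModelInput schema."""
    payload = {"feature1": feature1, "feature2": feature2, "feature3": feature3}
    return urllib.request.Request(
        API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def predict_remote(req: urllib.request.Request, timeout: float = 5.0) -> dict:
    """Send the request and decode the JSON response, e.g. {"prediction": [...]}."""
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        return json.loads(resp.read().decode("utf-8"))
```

Against a running service, `predict_remote(build_request(0.5, 1.2, [3, 0, 7]))` should return a dict with a `prediction` key. The feature values here are placeholders, and `feature3` must have whatever length the trained model expects.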

Beyond Simple Deployment: Advanced Release Patterns

Once your service is containerized, the next challenge is updating it. Simply stopping the old version and starting the new one leads to downtime and introduces significant risk. What if the new model has a critical bug or performs worse on real-world data? Advanced deployment strategies are designed to mitigate these risks by enabling gradual, controlled rollouts.

Blue-Green Deployment

The Blue-Green strategy minimizes downtime and risk by maintaining two identical production environments, nicknamed “Blue” and “Green.”

- Current State: The live environment, Blue, is handling all user traffic.

- Deployment: The new version of the model service is deployed to the idle environment, Green. This environment can be thoroughly tested by internal teams without affecting any users.

- The Switch: Once the Green environment is verified, the router or load balancer is reconfigured to switch all incoming traffic from Blue to Green. This switch is nearly instantaneous.

- Rollback: If issues arise with the Green environment, traffic can be switched back to the stable Blue environment just as quickly, because Blue is still running untouched.
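The Blue-Green mechanics boil down to a single pointer that says which environment is live. The toy sketch below models that pointer in Python; the class name, the environment URLs, and the history-based rollback are illustrative assumptions. In a real system the switch is made in a load balancer, a DNS record, or a Kubernetes Service selector rather than in application code.

```python
from dataclasses import dataclass, field


@dataclass
class BlueGreenRouter:
    """Toy blue-green switch: all traffic goes to exactly one live environment.

    Environment names and URLs are hypothetical placeholders.
    """

    environments: dict[str, str]
    live: str = "blue"
    history: list[str] = field(default_factory=list)

    def route(self) -> str:
        # Every request is sent to the single live environment.
        return self.environments[self.live]

    def switch_to(self, target: str) -> None:
        # Remember the previously live environment so we can roll back to it.
        if target not in self.environments:
            raise ValueError(f"unknown environment: {target}")
        self.history.append(self.live)
        self.live = target

    def rollback(self) -> None:
        # Point traffic back at the previously live environment.
        if not self.history:
            raise RuntimeError("nothing to roll back to")
        self.live = self.history.pop()
```

The design point is that Green is deployed and tested while idle, so both the cut-over and the rollback are just a change of pointer, with no pods being started or stopped at that moment.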

This pattern is safe and simple to understand but can be resource-intensive as it requires maintaining double the infrastructure capacity.

Canary Releases

A Canary Release is a more nuanced approach where the new version is gradually rolled out to a small subset of users. The name comes from the “canary in a coal mine” analogy—if something goes wrong, it affects only a small group of users (the canaries), limiting the “blast radius.”

The process involves:

- Deploying the new version (the “canary”) alongside the stable version.

- Directing a small percentage of traffic (e.g., 1%, 5%) to the canary.

- Closely monitoring key metrics for the canary group: error rates, latency, and model performance.

- If the canary performs well, gradually increase the traffic it receives until it handles 100% of the load, at which point the old version can be decommissioned.
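Canary traffic splitting is normally configured in a service mesh or ingress controller, but the underlying idea fits in a few lines. This hypothetical sketch hashes a user ID into one of 100 buckets and sends users whose bucket falls below the canary percentage to the new version; `canary_bucket`, `choose_version`, and the `ROLLOUT_STEPS` schedule are made-up names for illustration.

```python
import hashlib


def canary_bucket(user_id: str) -> int:
    """Map a user ID to a stable bucket in [0, 100).

    Hashing (rather than a random choice per request) keeps each user on the
    same version for the whole rollout, so their experience is consistent.
    """
    digest = hashlib.sha256(user_id.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % 100


def choose_version(user_id: str, canary_percent: int) -> str:
    """Return "canary" for roughly canary_percent% of users, else "stable"."""
    if not 0 <= canary_percent <= 100:
        raise ValueError("canary_percent must be between 0 and 100")
    return "canary" if canary_bucket(user_id) < canary_percent else "stable"


# Hypothetical rollout schedule; each step would only proceed if the canary's
# error rate, latency, and model metrics looked healthy at the previous step.
ROLLOUT_STEPS = [1, 5, 25, 50, 100]
```

Because a user's bucket never changes, raising the percentage from 5 to 25 only adds users to the canary group; nobody who already saw the new version is flipped back to the old one mid-rollout.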

This technique is excellent for catching unforeseen problems with real user traffic but requires more sophisticated traffic routing and monitoring infrastructure, often managed by a service mesh like Istio or Linkerd in a Kubernetes environment.

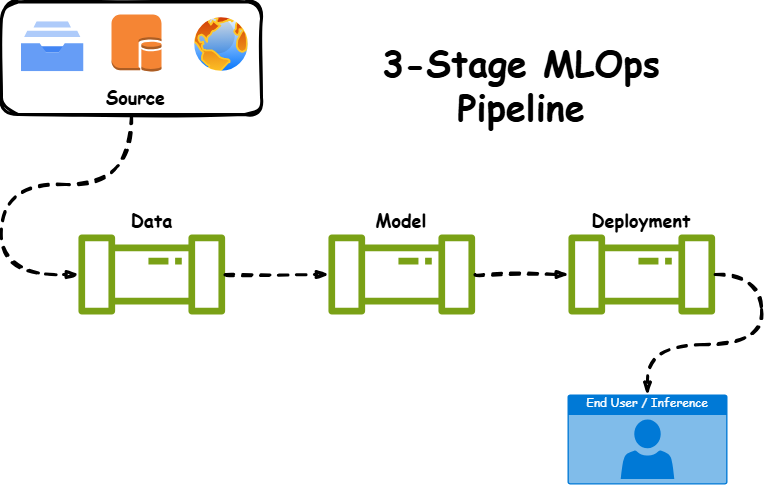

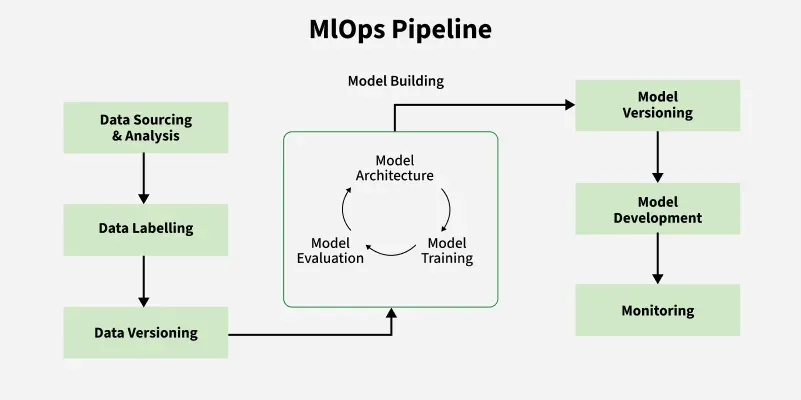

Closing the Loop: Monitoring, Logging, and MLOps

Deploying a model is not the final step; it's the beginning of its operational lifecycle. Models are not static artifacts: as the data and environment they operate in change, their performance typically degrades over time. A comprehensive monitoring strategy is essential for maintaining a healthy and effective ML system.

The Three Pillars of ML Monitoring

Effective monitoring for ML systems can be broken down into three key areas:

- Operational Health: These are standard software engineering metrics. Are your API endpoints responding correctly? What is the request latency and throughput? Are you seeing HTTP 500 errors? What is the CPU and memory utilization of your service? Tools like Prometheus for metrics collection and Grafana for visualization are widely used for this.

- Data Drift: This occurs when the statistical properties of the data your model sees in production differ significantly from the data it was trained on. For example, if a recommendation model was trained on user behavior during a holiday season, its input data will look very different in the middle of the year. Data drift is a leading indicator that model performance may soon degrade. It can be detected by comparing the distributions of production input features (including summary statistics such as means and variances) against the training data, for example with the Population Stability Index or a two-sample Kolmogorov-Smirnov test.

- Concept Drift (Model Drift): This is a more fundamental problem where the relationship between the input features and the target variable changes over time. A classic example is a fraud detection model that becomes less effective as fraudsters invent new techniques. Detecting concept drift often requires a source of ground-truth labels for production data, allowing you to track metrics like accuracy, precision, and recall over time. When these metrics drop below a certain threshold, it’s a clear signal that the model needs to be retrained.
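Both drift checks can be sketched with the standard library alone. The first function computes the Population Stability Index (PSI), one common way to compare a feature's production distribution with its training distribution; the second class tracks rolling accuracy once ground-truth labels arrive. The bin count, window size, and accuracy threshold are placeholder assumptions. A PSI above roughly 0.25 is often treated as significant drift, but that cutoff is a rule of thumb, not a standard.

```python
import math
from collections import deque


def population_stability_index(reference, current, bins=10, eps=1e-6):
    """Data-drift score for one numeric feature; 0 means identical binned distributions.

    Bins are equal-width over the reference (training) range, and production
    values outside that range are clamped into the first or last bin.
    """
    lo, hi = min(reference), max(reference)
    width = (hi - lo) / bins or 1.0  # avoid division by zero for a constant feature

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1
        # eps stands in for empty bins so log() and division stay defined
        return [max(c / len(values), eps) for c in counts]

    ref_p, cur_p = proportions(reference), proportions(current)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref_p, cur_p))


class RollingAccuracyMonitor:
    """Concept-drift alarm based on accuracy over recent labeled predictions."""

    def __init__(self, window=500, threshold=0.85):
        # window and threshold are placeholder values; tune them per model
        self.outcomes = deque(maxlen=window)
        self.threshold = threshold

    def record(self, prediction, label):
        # Call this when the ground-truth label for a past prediction arrives.
        self.outcomes.append(prediction == label)

    @property
    def accuracy(self):
        if not self.outcomes:
            return None
        return sum(self.outcomes) / len(self.outcomes)

    def needs_retraining(self):
        # Only alert on a full window, to avoid noisy early readings.
        full = len(self.outcomes) == self.outcomes.maxlen
        return full and self.accuracy < self.threshold
```

In practice the PSI check would run per feature on a schedule (for example, a day of production inputs versus the training set), while the monitor would be fed by whatever job joins predictions with their delayed labels.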

Handling the Load: Scaling Strategies

As your application gains traction, your ML service will need to handle increasing traffic. A scaling strategy ensures that your service remains responsive and available under heavy load while also being cost-effective during quiet periods.

Horizontal vs. Vertical Scaling

There are two fundamental approaches to scaling:

- Vertical Scaling (Scaling Up): This involves increasing the resources of a single server—adding more CPU cores, RAM, or a more powerful GPU. It’s simple to implement but has a hard physical limit and can become prohibitively expensive. It also represents a single point of failure.

- Horizontal Scaling (Scaling Out): This involves adding more instances (servers or containers) of your application and distributing the load between them using a load balancer. This is the preferred method for modern cloud-native applications. It is highly flexible, resilient to individual instance failures, and can scale far beyond the limits of any single machine.
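Horizontal scaling relies on something spreading requests across replicas and routing around failed ones. As an illustration only, here is a minimal round-robin balancer that skips instances marked unhealthy; the class and instance names are hypothetical, and production systems would use a managed load balancer or a Kubernetes Service instead.

```python
import itertools


class RoundRobinBalancer:
    """Spread requests across replicas in turn, skipping any marked unhealthy."""

    def __init__(self, instances):
        self.instances = list(instances)
        self.healthy = set(self.instances)
        self._cycle = itertools.cycle(self.instances)

    def mark_down(self, instance):
        # e.g. after a failed health check
        self.healthy.discard(instance)

    def mark_up(self, instance):
        if instance in self.instances:
            self.healthy.add(instance)

    def next_instance(self):
        # Try each instance at most once per call so we never loop forever.
        for _ in range(len(self.instances)):
            candidate = next(self._cycle)
            if candidate in self.healthy:
                return candidate
        raise RuntimeError("no healthy instances available")
```

Losing one replica only shrinks the rotation; the remaining instances keep serving traffic, which is the resilience property that vertical scaling lacks.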

Auto-scaling with Kubernetes

Kubernetes, the leading container orchestration platform, excels at horizontal scaling. The Horizontal Pod Autoscaler (HPA) is a core Kubernetes component that automatically scales the number of pods (the smallest deployable units in Kubernetes, each running one or more containers) in a deployment based on observed metrics.

You can configure an HPA with a target average CPU utilization across all pods (e.g., 70%), where utilization is measured relative to each container's CPU request. When observed utilization rises above the target, the HPA increases the replica count roughly in proportion to how far over target it is; when utilization falls below the target, it removes unneeded pods to save resources and costs. This elastic scaling is crucial for applications with variable traffic patterns, ensuring both high performance during peaks and cost efficiency during lulls.
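The Kubernetes documentation describes the HPA's core calculation as `desiredReplicas = ceil(currentReplicas * currentMetricValue / desiredMetricValue)`, with no change made when that ratio is within a tolerance (0.1 by default) of 1.0. The function below is a simplified Python approximation of that rule for a utilization target; it ignores the HPA's stabilization windows, pod readiness handling, and missing-metric logic, and `desired_replicas` is a hypothetical name.

```python
import math


def desired_replicas(current_replicas, current_utilization, target_utilization,
                     min_replicas=1, max_replicas=10, tolerance=0.1):
    """Approximate the core HPA scaling rule for a utilization metric.

    desired = ceil(current_replicas * current_utilization / target_utilization),
    skipped when the ratio is within `tolerance` of 1.0, then clamped to
    [min_replicas, max_replicas] (the HPA spec's minReplicas/maxReplicas).
    """
    ratio = current_utilization / target_utilization
    if abs(ratio - 1.0) <= tolerance:
        # Close enough to the target: keep the current replica count.
        desired = current_replicas
    else:
        desired = math.ceil(current_replicas * ratio)
    return max(min_replicas, min(max_replicas, desired))
```

With the 70% target from the text, four pods averaging 90% CPU would scale to `ceil(4 * 90 / 70) = 6` replicas, while an average of 72% falls inside the tolerance and leaves the count at four.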

Conclusion

Transitioning a machine learning model from a research environment to a live production system is a complex engineering endeavor that extends far beyond the initial training process. We’ve explored the critical components of this journey: using Docker for creating consistent and portable environments, leveraging FastAPI for high-performance model serving, implementing advanced deployment strategies like Blue-Green and Canary releases to minimize risk, and establishing a robust monitoring framework to track operational health and model drift. Finally, we discussed how platforms like Kubernetes enable the automatic scaling required to meet real-world demand.

The key takeaway is that production ML is a continuous lifecycle, not a one-time event. It requires a blend of data science, software engineering, and DevOps principles—a discipline now known as MLOps. By embracing these advanced techniques, you can build machine learning systems that are not only intelligent but also resilient, scalable, and truly valuable to your organization.