I Dropped Pandas for Polars. Here’s What Broke.

It was 11 PM on a Thursday last month. My data pipeline running on a t3.xlarge AWS instance crashed for the fourth time that week. The culprit? Good old MemoryError. Pandas was trying to merge a 14GB parquet file with a 6GB lookup table, and the server just gave up and died.

I spent an hour trying to chunk the data. Then I tried downcasting the datatypes. Nothing worked reliably. That is exactly the kind of thing that makes you want to throw your laptop out the window.

So I finally bit the bullet and rewrote the job using Polars.

I had been ignoring it for a while. Learning a new syntax when you have muscle memory for df.apply() feels like a massive chore. But the rewrite took me maybe two hours. When I ran the new script, it chewed through the exact same 20GB dataset in 47 seconds. No memory spikes. No crashes. It just finished.

Why Your Pandas Code is Choking

If you work with data in Python, you already know the pain points. Pandas is single-threaded. When you tell it to group a dataset by a specific column, it uses exactly one core on your machine while the other seven sit there doing absolutely nothing.

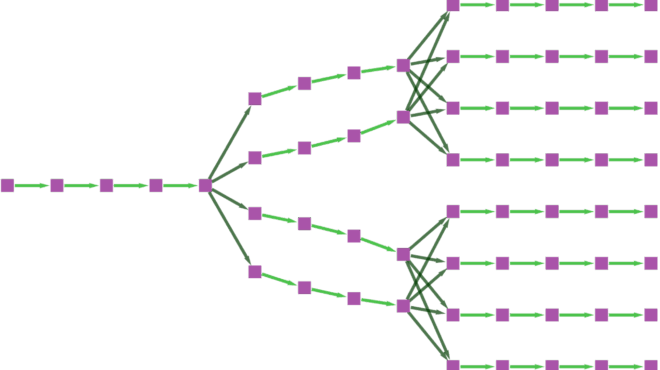

Polars is written in Rust and uses Apache Arrow as its memory model. It doesn’t just process data faster; it processes it smarter. It distributes the workload across all available CPU cores automatically.

But the real reason it didn’t crash my server is lazy evaluation.

When you write a Pandas script, every single line executes immediately. Filter a dataframe? It creates a new copy in memory. Drop a column? Another copy. In lazy mode, Polars doesn’t do that. Instead, it builds a query plan and touches no data until you explicitly ask for the result.

The Syntax Shift (And The Gotchas)

You can’t just import polars as pd and call it a day. The API is entirely different, heavily inspired by SQL and PySpark.

Here is what a typical transformation looks like now. Notice we are using scan_parquet instead of read_parquet. That single-word difference is what triggers lazy evaluation.

```python
import polars as pl

# This doesn't load the data. It just reads the schema.
query = (
    pl.scan_parquet("s3://my-bucket/massive_dataset.parquet")
    .filter(pl.col("status") == "active")
    .with_columns(
        (pl.col("revenue") - pl.col("cost")).alias("profit")
    )
    .group_by("region")
    .agg([
        pl.col("profit").sum().alias("total_profit"),
        pl.col("user_id").n_unique().alias("unique_users")
    ])
)

# NOTHING has happened yet.
# Polars looks at the whole chain, optimizes it, and executes only what's needed.
result_df = query.collect()
```

When you call .collect(), Polars looks at your entire chain of commands. It realizes, “Oh, you only need the status, revenue, cost, region, and user_id columns.” It won’t even load the other 50 columns from the disk. Pandas would have loaded all 55 columns into RAM, filtered the rows, and then dropped the ones you didn’t need. It’s wildly inefficient.

My Real-World Benchmarks

I don’t trust synthetic benchmarks from official documentation. They always pick the perfect datasets. So I tested this on my own messy data using my M3 MacBook Pro (36GB RAM) running Python 3.12.2 and Polars 1.5.2.

The task: Load a 25-million row CSV, filter out invalid dates, extract the month, group by category, and calculate the mean and standard deviation of sales.

- Pandas 2.2.0: 42.4 seconds (and spiked memory to 18GB)

- Polars (Eager mode): 3.8 seconds

- Polars (Lazy mode): 0.9 seconds (memory never exceeded 2.5GB)

Going from 42 seconds to under a second is ridiculous. It completely changes how you interact with your data. You stop writing intermediate files to disk just to save progress. You just run the whole pipeline from scratch every time because it’s fast enough.

Where It Still Hurts

I’m not going to pretend the transition is entirely smooth. I ran into a few incredibly frustrating walls.

First, Polars has no concept of an index. If your entire workflow relies on Pandas’ df.set_index() and merging on indices, you have to unlearn that. You just use standard relational joins now. Honestly, this is probably a good thing—Pandas multi-indexes are a nightmare anyway—but it requires a mental shift.

Second, working with deeply nested JSON is still clunky. Earlier in February, I had to parse an API response where every row contained a list of dictionaries, which themselves contained lists. Pandas’ json_normalize handles this poorly, but it handles it. Unnesting complex structs in Polars requires chaining multiple explode() and unnest() calls that get ugly fast.

Third, third-party library support is still catching up. If you use older machine learning packages that strictly expect Pandas objects, you’ll have to call .to_pandas() at the very end of your pipeline. It works, but it feels dirty.

What Happens Next

The writing is on the wall for default single-threaded data processing. The hardware we use has changed, and our libraries are finally catching up.

I expect that by Q3 2027, major cloud providers will start bundling Polars by default in their runtimes for serverless data functions. The cold start and memory advantages are just too massive for AWS or GCP to ignore. When you pay by the gigabyte-second for Lambda execution, cutting memory usage by 80% translates to immediate, massive cost savings.
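The gigabyte-second arithmetic is easy to sanity-check. The sketch below uses a hypothetical job and an assumed per-GB-second rate, so plug in the current price for your region and architecture before trusting the dollar figures.

```python
# Assumed list price for x86 Lambda compute; check current pricing for your region.
PRICE_PER_GB_SECOND = 0.0000166667


def invocation_cost(memory_gb: float, duration_s: float) -> float:
    """Compute cost of one invocation: configured memory x billed duration x rate."""
    return memory_gb * duration_s * PRICE_PER_GB_SECOND


# Hypothetical job: same 60-second runtime, memory setting cut from 10 GB to 2 GB.
before = invocation_cost(memory_gb=10, duration_s=60)
after = invocation_cost(memory_gb=2, duration_s=60)
savings = 1 - after / before

print(f"before=${before:.6f} after=${after:.6f} savings={savings:.0%}")
```

One caveat: Lambda bills on the memory you configure, not on peak usage, so the saving only shows up once you actually lower the function's memory setting. If the job also finishes faster, the duration term shrinks too.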

If you are still fighting memory limits or waiting minutes for simple aggregations to finish, stop trying to optimize your Pandas code. You are fighting the framework. Just rewrite your heaviest pipeline in Polars.