Building Python Agents That Actually Fix Themselves

Actually, I should clarify — I spent three hours last Tuesday fixing a daily cron job because a vendor changed a single <div class="wrapper"> to <div class="container-fluid"> on their pricing page. Three hours of my life, gone, tracing a generic NoneType object has no attribute 'text' error through a massive BeautifulSoup script.

Traditional Python automation is incredibly brittle. You write a script, it does exactly what you tell it to do, and the second the environment shifts by a single pixel, the whole thing crashes. But I ripped out the static scrapers and replaced them with an agentic loop. Instead of giving Python a rigid set of steps, I gave it a goal, a set of tools, and an LLM to figure out the routing. It cut my weekly triage time from about 3 hours to maybe 12 minutes.

And here is how you actually build one of these from scratch without getting buried in heavy framework abstractions.

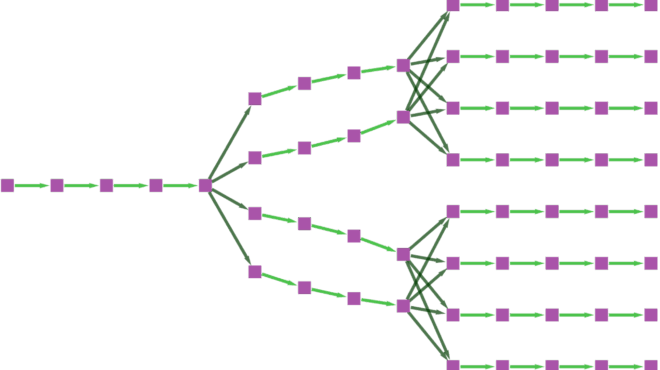

The Core Agent Architecture

Most people overcomplicate agents. At its core, an agent is just a while loop. It observes a state, decides if it needs to use a tool, uses the tool, and checks if it reached the goal. If yes, it stops. If no, it loops.

I run this on a t4g.small AWS instance using Python 3.12.4. You don’t need a massive machine for the orchestration part—all the heavy lifting happens on the API side. We’ll use the standard openai package and pydantic to force structured data outputs.

import json

import os

from typing import List, Optional

from pydantic import BaseModel, Field

from openai import OpenAI

client = OpenAI(api_key=os.environ.get("OPENAI_API_KEY"))

class ExtractedData(BaseModel):

company_name: str

pricing_tiers: List[str]

has_enterprise_plan: bool

confidence_score: float = Field(ge=0.0, le=1.0)

class SimpleAgent:

def __init__(self, system_prompt: str):

self.system_prompt = system_prompt

self.messages = [{"role": "system", "content": system_prompt}]

def add_user_message(self, content: str):

self.messages.append({"role": "user", "content": content})

def run_extraction(self) -> Optional[ExtractedData]:

# The agent loop

max_retries = 3

attempts = 0

while attempts < max_retries:

try:

response = client.beta.chat.completions.parse(

model="gpt-4o",

messages=self.messages,

response_format=ExtractedData,

temperature=0.1

)

parsed_result = response.choices[0].message.parsed

# If confidence is too low, we force it to try again

if parsed_result.confidence_score < 0.7:

self.messages.append({

"role": "user",

"content": f"Confidence too low ({parsed_result.confidence_score}). Re-evaluate the source text and try again."

})

attempts += 1

continue

return parsed_result

except Exception as e:

print(f"Extraction failed: {str(e)}")

attempts += 1

return NoneNotice the confidence score check. That right there is the difference between a basic API call and an agentic workflow. The script evaluates its own output and decides whether to accept it or kick it back for revision.

Processing Messy Inputs

Data in the real world is garbage. You’re rarely handing your agent a clean JSON payload. Usually, it’s raw HTML, a badly formatted CSV, or an email thread. But I use a lightweight processing class to strip the noise before passing it to the LLM.

import re

from bs4 import BeautifulSoup

class DataProcessor:

@staticmethod

def clean_html_payload(raw_html: str) -> str:

"""Strips scripts, styles, and excessive whitespace."""

soup = BeautifulSoup(raw_html, 'html.parser')

# Kill JavaScript and CSS

for script in soup(["script", "style", "meta", "noscript"]):

script.decompose()

text = soup.get_text(separator=' ')

# Collapse multiple spaces and newlines

text = re.sub(r'\s+', ' ', text).strip()

# Chunk it if it's still too massive

# 12000 chars is roughly 3000 tokens depending on the text

return text[:12000]

@staticmethod

def process_batch(html_list: List[str]) -> List[str]:

return [DataProcessor.clean_html_payload(doc) for doc in html_list]Combine these two, and you have an automation pipeline that doesn’t care if a developer changes their CSS classes. The agent reads the text, understands the semantic meaning, and maps it to your Pydantic schema anyway.

The Infinite Loop Trap (And How I Burned $14)

The docs don’t warn you about this, but you need to be extremely careful with autonomous retry logic. I made a typo in an API endpoint URL, and the agent got trapped in an infinite retry loop that racked up a $14 bill in four minutes before I caught it.

Always implement a hard stop. Here is the circuit breaker pattern I use now for any agent that has access to external tools.

import time

class CircuitBreaker:

def __init__(self, max_failures: int = 3, reset_window: int = 60):

self.max_failures = max_failures

self.reset_window = reset_window

self.failures = 0

self.last_failure_time = 0

def record_failure(self):

self.failures += 1

self.last_failure_time = time.time()

def is_open(self) -> bool:

if self.failures >= self.max_failures:

# Check if the window has expired

if time.time() - self.last_failure_time > self.reset_window:

self.failures = 0 # Reset

return False

return True

return False

# Usage inside your agent tool execution:

breaker = CircuitBreaker()

def execute_tool(tool_name, params):

if breaker.is_open():

raise Exception("Circuit breaker open. Halting agent execution to prevent loop.")

try:

# tool execution logic here

pass

except Exception:

breaker.record_failure()

raiseWhere This Is Going

Writing raw regex for data extraction is a dying practice. But the trade-off right now is latency. A BeautifulSoup script parses a page in 40 milliseconds. My agent takes about 2.5 seconds to do the same job. But I’ll gladly trade two seconds of compute time to get my Tuesday mornings back.