Free-Threaded Python in Practice: Benchmarks and Broken Extensions

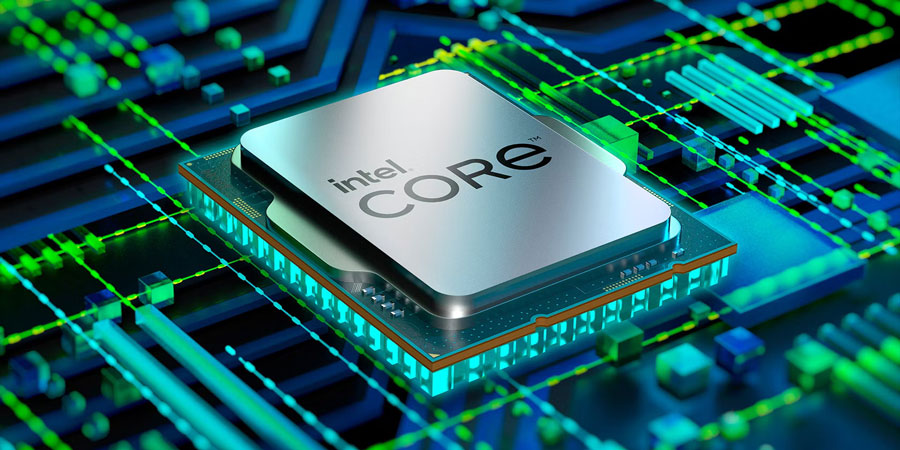

We’ve been talking about killing the Global Interpreter Lock for over a decade. I remember watching Larry Hastings present the Gilectomy project back in 2017. The crowd was excited. Then we saw the single-threaded performance hit. It was brutal. Most of us just accepted that Python would always be a single-core language unless we leaned heavily on multiprocessing or wrote our hot loops in Rust.

And things are different now. The free-threaded build, introduced as experimental in Python 3.13 and officially supported (though still optional) in 3.14, actually works. It doesn’t destroy your single-threaded performance, and it finally lets Python threads run in parallel on multiple cores.

But getting it running, and keeping it from crashing, requires a bit of effort, probably because the C API has changed quite a bit.

Building Without the GIL

You won’t get the free-threaded version by default when you download Python. The core team kept it strictly opt-in because of the massive compatibility risks. I spent last weekend compiling Python 3.14.0 from source on my M3 Max MacBook Pro to see how it handled a real workload.

The build process is straightforward. You just need to pass a specific flag to the configure script.

./configure --disable-gil

make -j8

sudo make install

Once compiled, you can verify you’re actually running the free-threaded binary using the sys module. They added a handy function specifically for this.

import sys

# Prints "GIL enabled: False" on a free-threaded build, unless the GIL has been re-enabled at runtime
print(f"GIL enabled: {sys._is_gil_enabled()}")

The Benchmark: Real Scaling

I didn’t want to run a synthetic microbenchmark. I wrote a script that processes a 12GB chunk of server log data, doing heavy regex parsing and string manipulation. This is exactly the kind of CPU-bound stuff that usually chokes standard Python threading.

On the standard CPython build with the GIL intact, spinning up 8 threads actually made the script slower than running it on a single thread, thanks to lock contention and context-switching overhead. It took 41.2 seconds to finish. The threads were just fighting each other for the lock.

But running the exact same script on the free-threaded build? The execution time dropped to 5.8 seconds.

That is roughly a 7x speedup (41.2 / 5.8 ≈ 7.1) on 8 threads, which is about as close to linear scaling as I could hope for. I watched all the CPU cores light up in htop. No multiprocessing overhead. No pickling data back and forth between isolated processes. Just pure shared-memory concurrency.
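My actual script isn't reproduced here, but the shape of the workload can be sketched as below. Everything in it is an assumption made for illustration: the log format, the regex, the synthetic sample data, and the function names (`count_server_errors`, `split_chunks`, `parallel_count`, `make_sample_lines`) are hypothetical. The idea is simply to split the lines into chunks, hand each chunk to a thread, and compare wall-clock time for 1 versus 8 workers on both builds.

```python
import re
import time
from concurrent.futures import ThreadPoolExecutor

# Hypothetical access-log format, used only for illustration.
LINE_RE = re.compile(r'^(\S+) \S+ \S+ \[[^\]]+\] "(\w+) (\S+)[^"]*" (\d{3})')


def count_server_errors(lines):
    """Count lines whose HTTP status is 5xx (CPU-bound regex work)."""
    total = 0
    for line in lines:
        match = LINE_RE.match(line)
        if match and match.group(4).startswith("5"):
            total += 1
    return total


def split_chunks(items, n):
    """Split items into at most n contiguous chunks of similar size."""
    size = max(1, -(-len(items) // n))  # ceiling division
    return [items[i:i + size] for i in range(0, len(items), size)]


def parallel_count(lines, workers):
    """Run count_server_errors over chunks of lines in a thread pool."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return sum(pool.map(count_server_errors, split_chunks(lines, workers)))


def make_sample_lines(n):
    """Generate synthetic log lines; half of them carry a 5xx status."""
    statuses = ("200", "404", "500", "503")
    return [
        f'10.0.0.{i % 255} - - [01/Jan/2025:00:00:00 +0000] '
        f'"GET /item/{i} HTTP/1.1" {statuses[i % 4]}'
        for i in range(n)
    ]


if __name__ == "__main__":
    lines = make_sample_lines(200_000)
    for workers in (1, 8):
        start = time.perf_counter()
        result = parallel_count(lines, workers)
        elapsed = time.perf_counter() - start
        print(f"{workers} thread(s): {result} errors in {elapsed:.2f}s")
```

On a GIL build the two timings should come out roughly equal, or worse for 8 threads. On a free-threaded build the 8-thread run is where any speedup would show up, though the exact numbers depend heavily on the machine and the workload.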

The Catch: C Extensions and Segmentation Faults

So why isn’t this the default everywhere? The short answer is the C API.

CPython’s GIL existed for a very practical reason. It made memory management incredibly simple. C extensions could modify Python objects without worrying about locks or race conditions. But when you turn off the GIL, that safety net vanishes instantly.
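The same principle is easy to see at the Python level. This is only an analogy, not how any C extension is implemented, and the names `SafeCounter` and `hammer` are hypothetical: once several threads really do run at the same time, shared mutable state needs an explicit lock around every read-modify-write.

```python
import threading


class SafeCounter:
    """A counter whose increments are guarded by an explicit lock."""

    def __init__(self):
        self._value = 0
        self._lock = threading.Lock()

    def increment(self):
        # "+= 1" is a read-modify-write, so it is serialized explicitly.
        with self._lock:
            self._value += 1

    @property
    def value(self):
        with self._lock:
            return self._value


def hammer(counter, threads=8, per_thread=10_000):
    """Increment the counter from several threads and return the final value."""

    def work():
        for _ in range(per_thread):
            counter.increment()

    workers = [threading.Thread(target=work) for _ in range(threads)]
    for worker in workers:
        worker.start()
    for worker in workers:
        worker.join()
    return counter.value


if __name__ == "__main__":
    print(hammer(SafeCounter()))
```

With the lock in place the final value always equals `threads * per_thread`. An extension that was written assuming the GIL would do this serializing for it has no equivalent protection once the GIL is gone.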

And I found this out the hard way an hour into my testing. I tried importing an older data science pipeline using NumPy 1.26.4. Instant segmentation fault. Error: EXC_BAD_ACCESS.

The ecosystem is still catching up. If a library has C extensions, it needs to be specifically updated and compiled for the free-threaded ABI (you’ll see wheels tagged with cp314t instead of the standard cp314). Pure Python packages generally work fine out of the box. But the moment you touch a compiled dependency that hasn’t been updated for thread safety, your interpreter can crash hard.
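To see which kind of wheel your interpreter will look for, you can ask `sysconfig` whether the build was configured without the GIL. A minimal sketch, assuming the `Py_GIL_DISABLED` config variable is reported on your platform; the helper names `is_free_threaded_build` and `expected_abi_tag` are hypothetical.

```python
import sys
import sysconfig


def is_free_threaded_build() -> bool:
    """True if this interpreter was built with free threading support."""
    # Py_GIL_DISABLED is 1 on free-threaded builds; 0 or None otherwise.
    return bool(sysconfig.get_config_var("Py_GIL_DISABLED"))


def expected_abi_tag() -> str:
    """Return the CPython ABI tag to look for in wheel filenames."""
    major, minor = sys.version_info[:2]
    suffix = "t" if is_free_threaded_build() else ""
    return f"cp{major}{minor}{suffix}"


if __name__ == "__main__":
    print(f"Free-threaded build: {is_free_threaded_build()}")
    print(f"Look for wheels tagged: {expected_abi_tag()}")
```

Note that this describes how the interpreter was built, not whether the GIL is active right now. For the second question, `sys._is_gil_enabled()` is the thing to call.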

What Happens Next

We are stuck in a weird transitional phase. You can use free-threaded Python today, but you have to carefully audit your dependencies. If you build microservices that rely purely on the standard library and network I/O, you can probably switch today, though the biggest gains will come from the CPU-bound parts of your code, since threads blocked on I/O were already releasing the GIL.
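One cheap way to start that audit is to import each dependency and check whether the GIL is still off afterwards. On a free-threaded build, importing a C extension that isn't marked as supporting free threading re-enables the GIL for the rest of the process and prints a warning. The sketch below relies on that behavior; `gil_enabled` and `audit_imports` are hypothetical helper names, and the module list is a placeholder for your own dependencies.

```python
import importlib
import sys


def gil_enabled() -> bool:
    """Report whether the GIL is currently active in this process."""
    # sys._is_gil_enabled() exists on Python 3.13+; older versions always have a GIL.
    check = getattr(sys, "_is_gil_enabled", None)
    return True if check is None else check()


def audit_imports(module_names):
    """Import each module in order and record the GIL state afterwards."""
    report = []
    for name in module_names:
        try:
            importlib.import_module(name)
            imported = True
        except ImportError:
            imported = False
        report.append(
            {"module": name, "imported": imported, "gil_enabled": gil_enabled()}
        )
    return report


if __name__ == "__main__":
    # Placeholder list; substitute the packages your service actually imports.
    for row in audit_imports(["json", "sqlite3"]):
        print(row)
```

The first row where `gil_enabled` flips to `True` points at the import that turned the GIL back on. This can't catch a hard crash at import time, since a segfault takes the whole process down, so a suspicious module is better tried in a separate subprocess.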

But I don’t expect the core team to flip the switch and make no-GIL the default anytime soon. The risk of breaking millions of existing deployments is too high. My guess is we won’t see this become the standard out-of-the-box experience until at least Python 3.16, or possibly a hypothetical Python 4.0 release by late 2027 or early 2028.

Until then, it remains a powerful, slightly dangerous tool. If you have a CPU-bound application and you’re tired of fighting with the multiprocessing module, compile the free-threaded build and give it a shot. Just check your dependencies first.