Python News: LangChain and LangGraph 1.0 Usher in a New Era for AI Agent Development

AI is moving at a breakneck pace, and for Python developers the landscape of building intelligent agents has just shifted. Autonomous systems that can reason, use tools, and interact with their environment are no longer a futuristic concept but a present-day reality. However, developers have often struggled with orchestrating these agents, managing their state, and moving them from clever prototypes to production-ready applications. The latest Python news marks a significant milestone here: the stable 1.0 releases of the LangChain and LangGraph libraries, which aim to address exactly these challenges.

These updates are not merely incremental; they represent a fundamental rethinking of how developers can construct, control, and deploy AI agents. By offering a more modular, flexible, and powerful set of tools, these libraries are empowering a new generation of agent builders. This article dives deep into what these 1.0 releases mean for the Python community, exploring the new capabilities, providing practical code examples, and outlining best practices for building the next breakthrough in AI with these production-ready frameworks.

The Evolution of Agent Frameworks: What’s New in 1.0?

The journey to version 1.0 has been shaped by extensive community feedback, leading to a more robust and developer-centric ecosystem. The core philosophy has shifted from providing high-level, opinionated abstractions to offering powerful, low-level primitives that grant developers maximum control and flexibility. This maturation is evident across the entire stack, from the core library to the new orchestration engine.

LangChain Core: A More Flexible and Stable Foundation

One of the most significant changes is the stabilization of langchain-core. This package provides a foundation of standardized interfaces and data structures, with the aim of a more predictable, backward-compatible development experience. A key addition here is standard content blocks.

Previously, integrating different model providers could be cumbersome because each provider returned message content in its own format. The familiar message types (HumanMessage, AIMessage, ToolMessage) now expose that content through a standard, provider-agnostic content_blocks property, so the same application code can work across supported models. This decouples your application logic from the specific LLM provider, reduces vendor lock-in, and makes your code more portable.

Furthermore, creating simple, tool-using agents has been streamlined with a new create_agent function. It provides a clear and flexible starting point without hiding the underlying logic, which makes agents easier to customize and debug.

LangGraph: Production-Ready Cyclical Computation

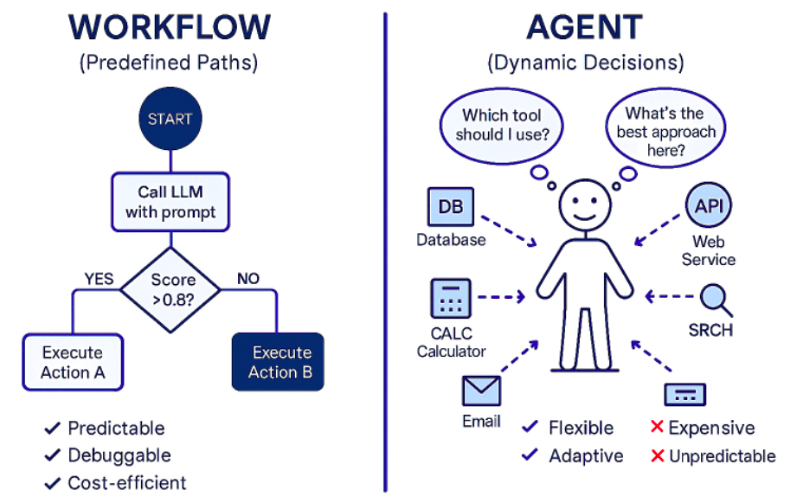

While LangChain excels at creating chains (directed acyclic graphs), many advanced agent behaviors require cycles. An agent might need to use a tool, reflect on the result, use another tool, and repeat this loop until it reaches a satisfactory answer. This is where LangGraph comes in, and its 1.0 release marks it as ready for production use.

LangGraph is a library for building stateful, multi-actor applications with LLMs by modeling them as graphs. Its key features are game-changers for production systems:

– Durable Execution: LangGraph can automatically persist the state of your agent at every step. If a long-running task fails or needs to be paused, it can be resumed from the exact point it left off, preventing loss of work and context.

– Human-in-the-Loop: You can explicitly define points in the graph where execution should pause and await human input. This is critical for applications requiring oversight, verification, or collaboration between humans and AI.

– Streaming: Intermediate results and steps can be streamed back to the user in real time, creating a more responsive and transparent user experience.

– Low-Level Control: Unlike older, more rigid agent executors, LangGraph allows you to define custom agent runtimes with explicit nodes and edges, giving you complete control over the agent’s decision-making loop.

Unified Ecosystem and Documentation

To support this new era of development, all documentation for Python, TypeScript, LangChain, LangGraph, and the observability platform LangSmith has been consolidated into a single, unified hub. This makes it significantly easier for developers to find the information they need and understand how the different components of the ecosystem fit together.

Deep Dive: Building a Multi-Step Research Agent with LangGraph

To appreciate the power of LangGraph, let’s build a practical example: a research assistant agent. This agent will take a user’s question, search the web for information, decide whether the information is sufficient, and if not, search again before answering. This cyclical reasoning is a natural use case for LangGraph.

Step 1: Setting Up the Environment and Tools

First, ensure you have the necessary libraries installed. We’ll use Tavily for web search, which requires an API key.

```python
# pip install langchain langgraph langchain-openai langchain-community tavily-python

import operator
import os
from typing import Annotated, Sequence, TypedDict

from langchain_community.tools.tavily_search import TavilySearchResults
from langchain_core.messages import BaseMessage
from langchain_openai import ChatOpenAI

# Set API keys (in real projects, load these from the environment instead)
os.environ["OPENAI_API_KEY"] = "YOUR_OPENAI_API_KEY"
os.environ["TAVILY_API_KEY"] = "YOUR_TAVILY_API_KEY"

# Define our tool
tool = TavilySearchResults(max_results=2)
tools = [tool]

# Initialize the model and let it know which tools it may call
model = ChatOpenAI(temperature=0, streaming=True)
model_with_tools = model.bind_tools(tools)
```

Step 2: Defining the Graph State

The state is the central data object that is passed between the nodes of our graph. It holds all the information the agent needs to track its progress. We’ll use a TypedDict to define its structure.

```python
class AgentState(TypedDict):
    # operator.add tells the graph to append new messages to the existing ones
    messages: Annotated[Sequence[BaseMessage], operator.add]
```

Our state is simple: it’s just a sequence of messages that we will append to over time. This message history will serve as the agent’s memory.
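To make the role of operator.add more concrete, here is a minimal plain-Python sketch of how a reducer attached through Annotated can be used to merge a node's partial update into the existing state. It deliberately does not use LangGraph; DemoState and apply_update are hypothetical names for illustration, and LangGraph's real merging logic is more involved than this.

```python
import operator
from typing import Annotated, Sequence, TypedDict, get_type_hints


class DemoState(TypedDict):
    # The second Annotated argument is the reducer used to merge updates.
    messages: Annotated[Sequence[str], operator.add]


def apply_update(state: dict, update: dict) -> dict:
    """Merge a partial update into state, using a key's reducer if it has one."""
    hints = get_type_hints(DemoState, include_extras=True)
    merged = dict(state)
    for key, value in update.items():
        metadata = getattr(hints.get(key), "__metadata__", ())
        if metadata and key in state:
            merged[key] = metadata[0](state[key], value)  # e.g. list + list
        else:
            merged[key] = value  # no reducer: overwrite the old value
    return merged


state = {"messages": ["human: hello"]}
state = apply_update(state, {"messages": ["ai: hi there"]})
print(state["messages"])
```

The point of the sketch is that a node only returns the new messages; the reducer decides how they are combined with the history.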

Step 3: Creating the Graph Nodes

Nodes are the fundamental units of work in the graph. They are Python functions that take the current state as input and return an update to the state.

```python
import json

from langchain_core.messages import ToolMessage


# Node 1: the agent model that decides what to do
def call_model(state):
    messages = state["messages"]
    response = model_with_tools.invoke(messages)
    # Return a list, because it is appended to the existing message list
    return {"messages": [response]}


# Node 2: the function that executes our tools
def call_tool(state):
    last_message = state["messages"][-1]  # the last AI message
    tools_by_name = {t.name: t for t in tools}
    tool_messages = []
    for tool_call in last_message.tool_calls:
        selected = tools_by_name.get(tool_call["name"])
        if selected is None:
            result = f"Unknown tool: {tool_call['name']}"
        else:
            try:
                result = selected.invoke(tool_call["args"])
            except Exception as exc:  # report the failure back to the model
                result = f"Tool error: {exc}"
        # Every tool call gets exactly one ToolMessage with a matching id
        tool_messages.append(
            ToolMessage(
                content=json.dumps(result, default=str),
                tool_call_id=tool_call["id"],
            )
        )
    return {"messages": tool_messages}
```

Step 4: Defining Edges and Conditional Logic

This is where the magic of LangGraph shines. We need a conditional edge that decides the next step after the model makes a prediction. Should it call a tool, or is it ready to respond to the user?

```python
# The conditional edge function
def should_continue(state):
    last_message = state["messages"][-1]
    # If there are no tool calls, we are done
    if not last_message.tool_calls:
        return "end"
    # Otherwise, we call the tool
    return "continue"
```

Step 5: Compiling and Running the Graph

Finally, we assemble our nodes and edges into a runnable graph.

```python
from langchain_core.messages import HumanMessage
from langgraph.graph import END, StateGraph

# Define the graph
workflow = StateGraph(AgentState)

# Add the nodes
workflow.add_node("agent", call_model)
workflow.add_node("action", call_tool)

# Set the entry point
workflow.set_entry_point("agent")

# Add the conditional edge
workflow.add_conditional_edges(
    "agent",
    should_continue,
    {
        "continue": "action",
        "end": END,
    },
)

# Add a normal edge to loop back from action to agent
workflow.add_edge("action", "agent")

# Compile the graph into a runnable app
app = workflow.compile()

# Let's run it!
inputs = {"messages": [HumanMessage(content="What is the latest Python news regarding AI agent frameworks?")]}

for output in app.stream(inputs):
    # stream() yields dictionaries with output from the last step
    for key, value in output.items():
        print(f"Output from node '{key}':")
        print("---")
        print(value)
    print("\n---\n")
```

When you run this code, you’ll see the agent first call the model, which decides to use the Tavily search tool. The graph then transitions to the `action` node, executes the search, and loops back to the `agent` node with the search results. The model then synthesizes the results into a final answer, and since there are no more tool calls, the graph transitions to the `END` state.
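The control flow described above can also be traced without any API keys. The following is a plain-Python sketch, not LangGraph's implementation: a scripted stand-in replaces the model, and fake_agent, fake_action, run, and the max_steps guard are all hypothetical names introduced only to illustrate the agent-action loop.

```python
from types import SimpleNamespace

# Scripted stand-in for the model: the first reply requests a tool,
# the second reply answers without tool calls.
scripted_replies = iter([
    SimpleNamespace(
        content="",
        tool_calls=[{"name": "search", "args": {"query": "python news"}, "id": "call_1"}],
    ),
    SimpleNamespace(content="Here is a summary.", tool_calls=[]),
])


def fake_agent(state):
    return {"messages": [next(scripted_replies)]}


def fake_action(state):
    last = state["messages"][-1]
    results = [
        SimpleNamespace(
            content=f"results for {call['args']['query']}",
            tool_calls=[],
            tool_call_id=call["id"],
        )
        for call in last.tool_calls
    ]
    return {"messages": results}


def should_continue(state):
    return "continue" if state["messages"][-1].tool_calls else "end"


def run(state, max_steps=10):
    """Walk agent -> (action -> agent)* until the router returns 'end'."""
    visited = []
    for _ in range(max_steps):
        state = {"messages": state["messages"] + fake_agent(state)["messages"]}
        visited.append("agent")
        if should_continue(state) == "end":
            break
        state = {"messages": state["messages"] + fake_action(state)["messages"]}
        visited.append("action")
    return state, visited


question = SimpleNamespace(content="What is new?", tool_calls=[])
final_state, visited = run({"messages": [question]})
print(visited)
print(final_state["messages"][-1].content)
```

Swapping the scripted replies for a different sequence is a cheap way to check how the routing function behaves before wiring in a real model.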

Practical Implications and Best Practices for Agent Developers

The stability and power of these new tools have profound implications for developers. The barrier between a clever Jupyter Notebook prototype and a scalable, reliable production application has been significantly lowered.

From Prototypes to Production

LangGraph’s built-in durability is a critical feature for production. When you compile the graph with a checkpointer (for example, one backed by SQLite or Redis), your agent’s state is saved at every step. This means long-running, complex tasks can survive crashes and be resumed from the last saved step. Combined with LangSmith for tracing and debugging, this gives you a full-stack solution for building, monitoring, and maintaining production-grade AI agents.
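To illustrate the idea behind step-level persistence, here is a conceptual sketch using only the standard library's sqlite3 module. It is not LangGraph's checkpointer API; the table layout and the save_checkpoint, load_latest, and run_steps helpers are assumptions made purely for illustration.

```python
import json
import sqlite3

conn = sqlite3.connect(":memory:")  # use a file path for real durability
conn.execute(
    "CREATE TABLE checkpoints (thread_id TEXT, step INTEGER, state TEXT, "
    "PRIMARY KEY (thread_id, step))"
)


def save_checkpoint(thread_id, step, state):
    conn.execute(
        "INSERT OR REPLACE INTO checkpoints VALUES (?, ?, ?)",
        (thread_id, step, json.dumps(state)),
    )
    conn.commit()


def load_latest(thread_id):
    """Return (completed_steps, state) for a thread, or a fresh state."""
    row = conn.execute(
        "SELECT step, state FROM checkpoints WHERE thread_id = ? "
        "ORDER BY step DESC LIMIT 1",
        (thread_id,),
    ).fetchone()
    return (row[0], json.loads(row[1])) if row else (0, {"messages": []})


def run_steps(thread_id, steps, fail_at=None):
    """Run the remaining steps for a thread, checkpointing after each one."""
    done, state = load_latest(thread_id)
    for index in range(done, len(steps)):
        if fail_at == index:
            raise RuntimeError("simulated crash")
        state = {"messages": state["messages"] + [steps[index]]}
        save_checkpoint(thread_id, index + 1, state)
    return state


steps = ["search", "summarize", "answer"]
try:
    run_steps("thread-1", steps, fail_at=2)  # crashes before the third step
except RuntimeError:
    pass
resumed = run_steps("thread-1", steps)  # resumes at the third step
print(resumed["messages"])
```

Because the state is stored as JSON per thread and step, the second call repeats none of the completed work. The same reasoning is why the state should stay serializable.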

Best Practices for Building with LangGraph

– Design a Clear State: The state dictionary is the lifeblood of your agent. Design it carefully. Keep it serializable (e.g., using basic Python types, Pydantic models) so it can be easily persisted.

– Embrace Modularity: Think of each node as a distinct, testable unit of logic. This makes your graph easier to understand, debug, and extend. A node could be an LLM call, a tool execution, or even a simple data transformation function.

– Use Human-in-the-Loop Strategically: Don’t just build fully autonomous agents. Identify critical decision points where human oversight adds value. LangGraph makes it straightforward to add an interruption point before executing a potentially costly or irreversible action, like sending an email or writing to a database.

– Prioritize Observability: From day one, use tools like LangSmith to trace your agent’s execution. Understanding why your agent made a particular decision or which tool it used is very hard without proper tracing, especially in complex, cyclical graphs.
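The pause-before-an-irreversible-action pattern from the list above can be sketched in plain Python, with a generator standing in for the pause-and-resume mechanism. This is only an illustration of the idea, not LangGraph's interruption API; email_workflow, run_with_approval, and the ask_human callback are hypothetical names.

```python
def email_workflow(draft):
    """Pause before the irreversible step and wait for a human decision."""
    decision = yield {"pending_action": "send_email", "draft": draft}
    if decision == "approve":
        yield {"status": "sent", "draft": draft}
    else:
        yield {"status": "cancelled", "draft": draft}


def run_with_approval(draft, ask_human):
    workflow = email_workflow(draft)
    request = next(workflow)        # runs until the pause point
    decision = ask_human(request)   # a human reviews the pending action
    return workflow.send(decision)  # resume with the human's answer


approved = run_with_approval("Quarterly report attached.", lambda request: "approve")
rejected = run_with_approval("Oops, wrong recipient.", lambda request: "reject")
print(approved["status"], rejected["status"])
```

In a real deployment the pause may last hours, which is why this pattern is usually paired with persisted state rather than an in-memory generator.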

Common Pitfalls to Avoid

– Overly Complex “Spaghetti” Graphs: While LangGraph allows for cycles, it’s easy to create a graph that’s impossible to follow. Keep your logic clean and use conditional edges judiciously. If a graph becomes too complex, consider breaking it down into sub-graphs.

– Ignoring State Management: Forgetting to configure a checkpointer for any non-trivial agent is a recipe for disaster. You will lose progress and frustrate users if your application can’t recover from interruptions.

The Broader Python AI Ecosystem: A Comparative Look

These updates position LangChain and LangGraph as a powerful, general-purpose framework for agent development, distinct from older, more constrained approaches.

LangGraph vs. Traditional Agent Executors

Earlier agent frameworks often relied on a prebuilt executor loop (such as LangChain’s AgentExecutor running ReAct- or Self-Ask-style agents) that implemented a fixed sequence of reasoning and tool use. While effective for simple tasks, these executors were difficult to customize. If you wanted to add a reflection step or a human approval checkpoint, you often had to dig deep into the library’s internal code.

LangGraph is fundamentally different. It doesn’t prescribe a specific agent logic. Instead, it provides the building blocks—nodes and edges—for you to define your own custom logic. This represents a shift from using pre-built agents to building bespoke agent runtimes tailored to your exact needs.

Recommendations for Developers

– For Simple Tool-Using Agents: If your goal is to have an LLM answer questions using a small set of tools in a straightforward manner, the new create_agent function in LangChain is a good starting point. It’s easy to use and provides a solid foundation.

– For Complex, Multi-Step Workflows: When you need cycles, sophisticated state management, human intervention, or a highly customized decision-making process, LangGraph is the clear choice. It is designed for building robust, stateful agents that can handle ambiguity and complex tasks.

– For All Production Systems: Any agent destined for production should be built with observability in mind. The combination of LangGraph for orchestration and LangSmith for monitoring provides a comprehensive solution for deploying reliable AI applications.

Conclusion: A New Chapter for Python AI Developers

The 1.0 releases of LangChain and LangGraph are more than just a version number bump; they signify the maturation of the Python AI agent ecosystem. By providing a stable, flexible, and powerful core, these libraries are moving the community beyond simple demos and into the realm of robust, production-ready applications. The focus on developer control with LangGraph’s low-level primitives, combined with the safety nets of durability and human-in-the-loop functionality, provides the ideal toolkit for tackling real-world problems.

This latest Python news is a call to action for developers. The tools are now more powerful and accessible than ever. Whether you are building a sophisticated research assistant, a collaborative coding partner, or a complex automation system, the foundation has been laid. The next breakthrough in AI agents is waiting to be built, and with these new tools, Python developers are well equipped to build it.