Python in Excel Gets an AI Upgrade: The Future of Spreadsheet Data Analysis

For decades, a friendly rivalry has existed in the world of data analysis. On one side stands Microsoft Excel, the undisputed champion of business users, revered for its accessibility, intuitive grid interface, and powerful formula engine. On the other side is Python, the darling of data scientists and developers, celebrated for its vast ecosystem of libraries for statistical modeling, machine learning, and complex data manipulation. Professionals often had to choose their tool or build cumbersome bridges between the two. The latest python news, however, signals a monumental shift in this landscape. The deep, native integration of Python into Excel is here, and it’s being supercharged by AI assistants.

This convergence is more than just a new feature; it’s a paradigm shift that promises to democratize data science and redefine productivity. By allowing AI to generate and execute Python code directly within a spreadsheet, complex analytical tasks that once required specialized skills and hours of work can now be accomplished with simple, natural language prompts. This article delves into the technical underpinnings of this powerful fusion, explores its practical applications with real-world code examples, and discusses the profound implications for the future of data analysis for everyone from business analysts to seasoned data scientists.

The Convergence of Titans: How Python Works Inside Excel

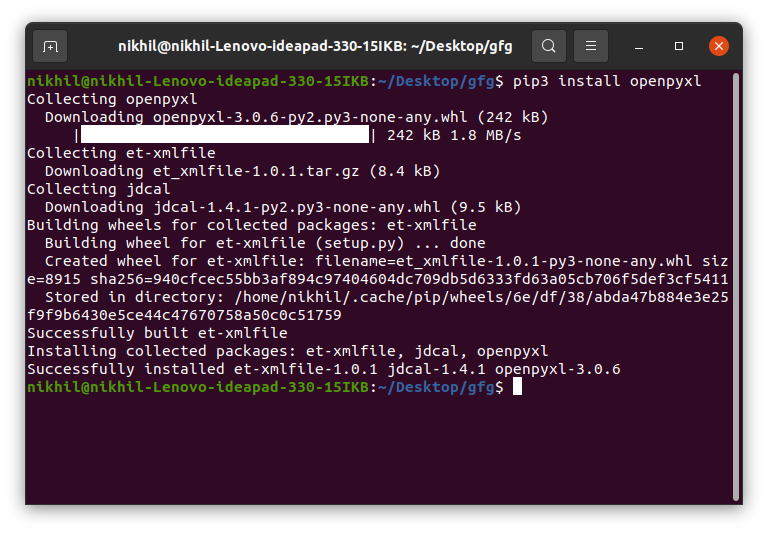

The integration of Python into Excel represents a fundamental architectural change, moving far beyond the capabilities of previous third-party add-ins. In the past, connecting these two worlds required tools like xlwings or openpyxl, which necessitated local Python installations, environment management, and often complex scripting to shuttle data back and forth. This new approach eliminates that friction entirely.

From Add-ins to Native Cloud Integration

The modern implementation of Python in Excel is built on a secure, cloud-based architecture. When you type a Python formula into a cell, the code and relevant workbook data are sent to a secure container running in the Microsoft Cloud. This container comes pre-configured with a standard Anaconda distribution of Python, providing out-of-the-box access to a curated list of essential data science libraries. This means no local installation of Python or its packages is required, ensuring consistency and security across all users. The code executes in this isolated environment, and the results—be it a data table, a statistical summary, or a data visualization—are streamed back directly into your spreadsheet.

The Core Components: Pandas DataFrames and the PY Function

The magic of this integration lies in its seamless handling of data structures. The gateway to this entire ecosystem is the new Excel function: =PY(). Anything inside these parentheses is treated as Python code. The most crucial concept to grasp is how Excel data is translated for Python’s use. When you reference an Excel range or a Table, it is automatically converted into a Pandas DataFrame—the de facto standard for data manipulation in Python.

Let’s consider a simple, practical example. Imagine you have sales data organized in an Excel Table named ‘SalesData’ with columns for ‘Region’, ‘Product’, and ‘Sales’. To calculate the total sales for each region, you would enter the following into a cell:

=PY(

import pandas as pd

# The xl() function references Excel data.

# Here, it grabs the entire 'SalesData' table.

# Excel automatically converts this into a Pandas DataFrame.

df = xl("SalesData[#All]", headers=True)

# Use standard Pandas functions for data manipulation.

# Here, we group by 'Region' and sum the 'Sales'.

regional_sales = df.groupby('Region')['Sales'].sum().reset_index()

# The last line of the script is the return value.

# This DataFrame is sent back to Excel and spills into adjacent cells.

regional_sales

)

The result of this formula is not a single value but a new table in Excel showing each region and its corresponding total sales. This elegant conversion between Excel ranges and Pandas DataFrames is the cornerstone that makes the entire system intuitive and powerful.

Supercharging Analysis with AI and Python

While writing Python code directly in Excel is a massive leap forward, the latest evolution is the integration of AI assistants that act as a natural language interface to this powerful engine. This layer of abstraction allows users to describe the analysis they want to perform, and the AI generates the necessary Python code on their behalf. This dramatically lowers the barrier to entry, empowering users who understand the business questions but may not be proficient Python programmers.

The Role of AI Assistants in the Analytical Workflow

The workflow is designed for speed and transparency. A user starts with a dataset in Excel and a question in mind. They activate the AI assistant and type a prompt in plain English. The AI then performs several steps behind the scenes:

- Intent Recognition: It parses the natural language prompt to understand the user’s analytical goal (e.g., summarize, visualize, forecast, classify).

- Data Contextualization: It identifies the relevant data range or Table the user is referring to.

- Code Generation: It writes the Python code required to perform the analysis using libraries like Pandas for manipulation, Matplotlib or Seaborn for visualization, and Statsmodels or Scikit-learn for modeling.

- Execution and Output: The generated code is placed into a

=PY()cell and executed in the cloud. The result is returned to the Excel grid. - Transparency: Crucially, the user can always inspect, modify, and re-run the generated Python code, providing a perfect learning tool and ensuring the analysis is not a “black box.”

A Practical Example: From Sales Data to Actionable Forecast

Let’s walk through a more advanced, real-world scenario. A business analyst has a table named ‘TransactionHistory’ containing columns for `Date`, `Category`, and `Revenue`. They need to understand monthly revenue trends and generate a forecast for the upcoming quarter.

The User’s Prompt: “Analyze the monthly revenue from the TransactionHistory table, visualize the historical trend, and create a 3-month forecast.”

The AI assistant would process this request and generate a Python script similar to the following:

# This code is generated by the AI and placed in a =PY() cell.

import pandas as pd

import matplotlib.pyplot as plt

import seaborn as sns

from statsmodels.tsa.holtwinters import ExponentialSmoothing

# 1. Reference the Excel table and load it as a DataFrame

df = xl("TransactionHistory[#All]", headers=True)

# 2. Perform data preprocessing

df['Date'] = pd.to_datetime(df['Date'])

df.set_index('Date', inplace=True)

# 3. Aggregate data to the required level (monthly revenue)

monthly_revenue = df['Revenue'].resample('M').sum()

# 4. Build and fit a time-series forecasting model

# Using Holt-Winters Exponential Smoothing for trend and seasonality

model = ExponentialSmoothing(

monthly_revenue,

trend='add',

seasonal='add',

seasonal_periods=12

).fit()

# 5. Generate the forecast for the next 3 periods (months)

forecast_values = model.forecast(3)

# 6. Create a clear visualization

sns.set_theme(style="whitegrid")

fig, ax = plt.subplots(figsize=(12, 7))

monthly_revenue.plot(ax=ax, label='Historical Monthly Revenue', marker='o', linestyle='-')

forecast_values.plot(ax=ax, label='Forecasted Revenue', marker='x', linestyle='--')

ax.set_title('Monthly Revenue: Historical Trend and 3-Month Forecast', fontsize=16)

ax.set_xlabel('Date')

ax.set_ylabel('Revenue ($)')

ax.legend()

ax.grid(True)

# 7. Return the visualization object to Excel

# Excel renders this Matplotlib figure as an image in the cell.

fig

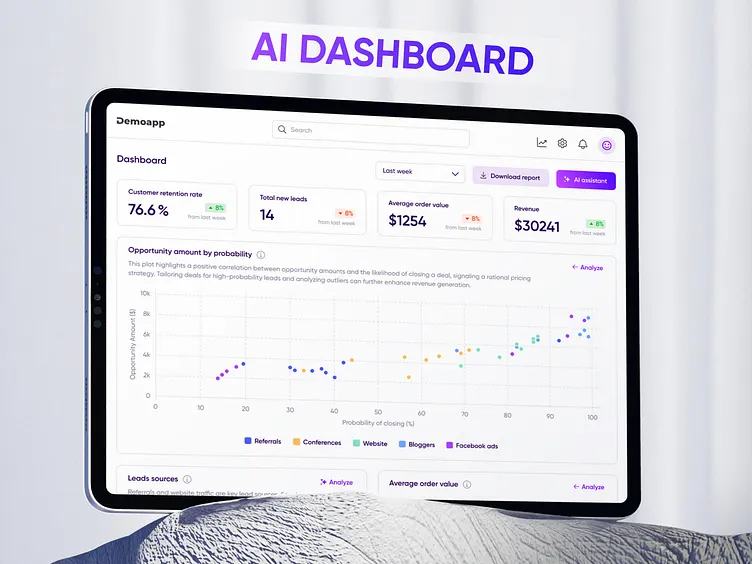

The output of this single prompt is a professional-grade chart embedded directly in the worksheet, clearly showing historical data and the projected forecast. The analyst can then ask follow-up questions, like “Now, return the forecasted values in a table,” and the AI would generate a new script that outputs the `forecast_values` DataFrame to the grid. This interactive, conversational approach to data analysis is a game-changer for productivity.

Democratizing Data Science: A Paradigm Shift

The implications of this integrated, AI-driven analytical capability are far-reaching, impacting professionals across the data spectrum and changing how businesses approach data-driven decision-making.

For the Excel Power User

For millions of analysts who live and breathe Excel, this technology opens doors to analytical techniques that were previously out of reach without extensive retraining. They can now perform sophisticated tasks like sentiment analysis on text data, run regression models to identify key business drivers, or create complex visualizations like heatmaps and violin plots—all without leaving their familiar spreadsheet environment. The AI acts as a co-pilot and a tutor, generating code that not only solves the problem but also teaches Python concepts along the way.

For the Python Data Scientist

This integration is not just for beginners. For seasoned data scientists, it streamlines collaboration and reporting. Instead of exporting data to a CSV, analyzing it in a Jupyter Notebook, and then pasting static charts into a PowerPoint deck, they can now build their models directly within the same Excel file their business stakeholders use. This creates a single source of truth where the analysis is live, reproducible, and transparent. It dramatically accelerates the prototyping of dashboards and reports, as the powerful Python backend can feed results directly into Excel’s native charting, pivot tables, and conditional formatting features.

For the Business

From a business perspective, this fusion translates into significant competitive advantages. It fosters a more data-literate culture by making advanced analytics more accessible. Decision-making cycles are shortened because insights can be generated in minutes, not hours or days. Furthermore, it enhances governance and reproducibility. An analysis contained within a Python script in an Excel file is far more transparent and auditable than one built from a complex, undocumented chain of manual formula manipulations, reducing the risk of human error.

Navigating the New Landscape: Tips and Considerations

While this new paradigm is incredibly powerful, users should adopt best practices and be aware of its current limitations to leverage it effectively.

Best Practices for Success

- Structure Your Data: Always format your raw data as official Excel Tables. This provides a clean, named reference (e.g., `xl(“SalesData”)`) for your Python code, making it more readable and robust against changes in worksheet structure.

- Don’t Trust Blindly: Treat the AI as a highly skilled assistant, not an infallible oracle. Always take a moment to review the Python code it generates. Understanding the logic ensures the analysis is correct and helps you learn in the process.

- Comment and Document: For complex analyses, add comments within your Python code. This makes the workbook easier for colleagues (and your future self) to understand and maintain.

- Separate Logic and Presentation: Use one set of cells for your Python calculations and a separate area for presentation (charts, summaries, etc.). This keeps your workbook organized and easy to navigate.

Current Limitations and Future Outlook

As a cloud-based service, this functionality requires a stable internet connection. The Python environment is managed by Microsoft, meaning you are limited to the pre-installed libraries and cannot `pip install` custom or niche packages. While performance is excellent for most business datasets, extremely large-scale computations (involving gigabytes of data) are still better suited for dedicated, high-performance computing environments.

Looking ahead, the python news in this space is likely to accelerate. We can anticipate the inclusion of more libraries, deeper integration with other tools in the Power Platform ecosystem, and even more sophisticated AI capabilities for generating complex, multi-step analytical workflows. The line between spreadsheet and data science platform will continue to blur, creating a hybrid tool that offers the best of both worlds.

Conclusion: A New Era of Accessible Analytics

The deep integration of an AI-powered Python engine within Excel is not merely an incremental feature update; it is a transformative leap that redefines the boundaries of spreadsheet software. By combining the accessibility and familiarity of Excel with the analytical depth of the Python ecosystem, it creates an environment where advanced data science is no longer the exclusive domain of specialist programmers. This powerful synergy empowers business users to answer more complex questions, enables data scientists to collaborate more effectively, and allows organizations to cultivate a more robust data-driven culture. This fusion of technologies marks the beginning of a new era in business intelligence—one that is more powerful, more accessible, and more collaborative than ever before.