Modern Python Package Management

Python’s popularity is undeniable, but for years its package management story was a source of frustration for developers. The classic combination of pip and a requirements.txt file, while simple to start with, often led to the dreaded “dependency hell”: a state where conflicting package versions create environments that are difficult to reproduce and maintain. The result was the familiar “it works on my machine” problem, and deploying applications became a game of chance. Fortunately, the Python ecosystem has evolved dramatically. Modern tools have emerged to bring order, predictability, and a streamlined developer experience to package management.

This article provides a comprehensive guide to modern Python package management. We will explore the limitations of the traditional approach, dive deep into the powerful features of tools like Poetry, Pipenv, and pip-tools, and demystify core concepts like dependency resolution and lock files. By understanding these tools and principles, you can create robust, reproducible, and easily maintainable Python projects, whether you’re building a simple script, a complex web application, or a distributable library. This shift towards more structured tooling is one of the most significant developments for Python developers in recent years, fundamentally improving project quality and collaboration.

The Old Guard: Understanding the Limitations of pip and requirements.txt

To appreciate the innovation of modern packaging tools, we must first understand the problems they were designed to solve. For over a decade, the de facto standard for managing Python dependencies involved pip, virtualenv (or the built-in venv), and a requirements.txt file. While this toolchain is functional, it has several inherent weaknesses that become more pronounced as projects grow in complexity.

The Simplicity and Pitfalls of requirements.txt

At its core, a requirements.txt file is a simple list of packages to be installed. A common workflow is to create a virtual environment, install packages with pip install, and then capture the environment’s state with:

```bash
pip freeze > requirements.txt
```

This command generates a file with the exact versions of every package currently installed. While this seems to guarantee reproducibility, requirements.txt-based workflows have two major problems:

- Lack of Dependency Distinction: The frozen file makes no distinction between the packages you installed directly (e.g., django or pandas) and the transitive dependencies they brought in (the other packages they rely on). This makes the project’s true top-level dependencies unclear. If you want to upgrade or remove a package, it’s difficult to know which of its sub-dependencies can also be safely removed.
- Non-Deterministic Installs: A more subtle issue arises if you write your requirements.txt by hand, listing only your direct dependencies (e.g., requests). When a teammate runs pip install -r requirements.txt, pip installs the latest version of requests that satisfies the requirement. If a new version of requests was released between you writing the file and your teammate installing it, the two of you end up with different environments, potentially leading to bugs. Even pinning a top-level dependency (requests==2.28.1) doesn’t solve this, because its sub-dependencies might not be pinned and can vary between installations.
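To make the first problem concrete, here is a minimal Python sketch that models a small dependency graph as a dictionary. The package names and edges are hypothetical, and the helpers `reachable` and `removable_after_dropping` are illustrative rather than part of any tool. Given the set of direct dependencies (exactly the information a frozen requirements.txt does not record), it computes which packages become orphaned when you drop one direct dependency.

```python
# Hypothetical, simplified dependency graph: package -> packages it requires.
GRAPH = {
    "webframework": ["asgi-helper", "sql-parser"],
    "dataframes": ["numeric", "date-utils"],
    "date-utils": ["compat"],
    "sql-parser": ["compat"],
    "asgi-helper": [],
    "numeric": [],
    "compat": [],
}

# The direct dependencies: the information `pip freeze` throws away.
DIRECT = {"webframework", "dataframes"}


def reachable(roots: set[str], graph: dict[str, list[str]]) -> set[str]:
    """Return every package needed by `roots`, including transitive ones."""
    seen: set[str] = set()
    stack = list(roots)
    while stack:
        pkg = stack.pop()
        if pkg not in seen:
            seen.add(pkg)
            stack.extend(graph.get(pkg, []))
    return seen


def removable_after_dropping(pkg: str, direct: set[str], graph: dict[str, list[str]]) -> set[str]:
    """Packages no longer needed once `pkg` is removed from the direct set."""
    return reachable(direct, graph) - reachable(direct - {pkg}, graph)


# A frozen file would list all seven packages with no hint of this structure.
print(sorted(reachable(DIRECT, GRAPH)))
# "compat" survives because sql-parser still needs it.
print(sorted(removable_after_dropping("dataframes", DIRECT, GRAPH)))
# → ['dataframes', 'date-utils', 'numeric']
```

Without the `DIRECT` set, there is no way to tell that compat must stay while date-utils can go; that is the bookkeeping modern tools keep for you.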

The Virtual Environment Disconnect

Virtual environments are a cornerstone of good Python development, isolating project dependencies from the global Python installation. Tools like venv are essential. However, they are fundamentally separate from pip. The developer is responsible for a multi-step, manual process: create the environment, activate it, install packages, and remember to deactivate it when finished. It’s easy to forget to activate the environment and accidentally install packages globally, polluting your system’s Python and breaking the project’s isolation. This disconnect between the environment manager and the package installer adds cognitive overhead and is a frequent source of error for newcomers and experienced developers alike.
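The "forgot to activate" mistake can at least be detected. A minimal standard-library sketch, assuming environments created by venv: inside such an environment `sys.prefix` points at the environment directory while `sys.base_prefix` still points at the base interpreter, and `venv.create()` builds a new environment. The helper names `in_virtualenv` and `ensure_venv` are hypothetical, the kind of guard you might add to a project script.

```python
import sys
import venv
from pathlib import Path


def in_virtualenv() -> bool:
    """True when running inside an environment created by venv.

    In a venv, sys.prefix points at the environment directory while
    sys.base_prefix still points at the base Python installation.
    """
    return sys.prefix != sys.base_prefix


def ensure_venv(path: Path, with_pip: bool = True) -> Path:
    """Create a virtual environment at `path` unless one already exists."""
    # Every venv has a pyvenv.cfg file at its root.
    if not (path / "pyvenv.cfg").exists():
        venv.create(path, with_pip=with_pip)
    return path


if not in_virtualenv():
    print("Warning: not inside a virtual environment; installs would go to the base interpreter.")
```

pip itself also offers a --require-virtualenv option (settable through the PIP_REQUIRE_VIRTUALENV environment variable) that makes it refuse to install outside an environment, but you still have to remember to turn it on.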

A Deep Dive into Modern Python Packaging Tools

Modern tools address the shortcomings of the traditional workflow by integrating virtual environment management, dependency resolution, and project specification into a single, cohesive experience. They introduce concepts like lock files for deterministic builds and rely on declarative project files such as Pipfile and pyproject.toml.

Pipenv: The “Official” Recommendation

Originally created by Kenneth Reitz and now maintained under the Python Packaging Authority (PyPA), Pipenv was one of the first tools to gain widespread adoption as a modern alternative. It aims to bring the best of package managers from other languages (like npm or bundler) to Python.

- Core Concepts: Pipenv uses two key files:
  - Pipfile: This file replaces requirements.txt. It’s where you declare your direct dependencies, separating them into default packages ([packages]) and development-only packages ([dev-packages]).
  - Pipfile.lock: This is the deterministic lock file. It contains the exact versions and hashes of every single package (direct and transitive) needed to build the environment. You should commit this file to version control.
- Workflow: Pipenv automatically creates and manages a virtual environment for your project.

```bash
# Add a new package
pipenv install requests

# Activate the virtual environment shell
pipenv shell

# Install all dependencies from Pipfile.lock
pipenv sync

# Install development dependencies as well
pipenv sync --dev
```

By combining package and environment management, Pipenv simplifies the developer workflow significantly. The clear separation of dependencies in the Pipfile makes projects easier to reason about.
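Because Pipfile.lock is a JSON document, its structure is easy to inspect. The sketch below assumes the layout Pipenv writes: top-level "default" and "develop" sections that map each package name to an entry with a "version" pin (such as "==2.28.1") and a list of "hashes". The sample data is abbreviated and illustrative (the hashes are placeholders, and requests' dependency list is trimmed), and `pinned_requirements` is a hypothetical helper. It shows that the lock file records transitive dependencies like certifi and urllib3 right alongside the direct one.

```python
import json

# Abbreviated, illustrative Pipfile.lock content. Real files contain real
# sha256 digests and more "_meta" information (such as a hash of the Pipfile).
SAMPLE_LOCK = """
{
  "_meta": {"requires": {"python_version": "3.11"}},
  "default": {
    "certifi": {"hashes": ["sha256:<placeholder>"], "version": "==2022.9.24"},
    "requests": {"hashes": ["sha256:<placeholder>"], "version": "==2.28.1"},
    "urllib3": {"hashes": ["sha256:<placeholder>"], "version": "==1.26.12"}
  },
  "develop": {
    "pytest": {"hashes": ["sha256:<placeholder>"], "version": "==7.1.3"}
  }
}
"""


def pinned_requirements(lock: dict, include_dev: bool = False) -> list[str]:
    """Flatten a Pipfile.lock-style mapping into `name==version` lines."""
    sections = ["default"] + (["develop"] if include_dev else [])
    lines = []
    for section in sections:
        for name, entry in sorted(lock.get(section, {}).items()):
            # Entries for VCS or local-path installs may lack a "version" key.
            if "version" in entry:
                lines.append(f"{name}{entry['version']}")
    return lines


lock = json.loads(SAMPLE_LOCK)
print(pinned_requirements(lock))
# → ['certifi==2022.9.24', 'requests==2.28.1', 'urllib3==1.26.12']
```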

Poetry: The All-in-One Powerhouse

Poetry takes the integrated approach a step further, providing a single tool for dependency management, packaging, and publishing. It is widely praised for its powerful dependency resolver and its adherence to modern Python standards.

- Core Concept: Poetry uses the standardized pyproject.toml file (introduced by PEP 518) to manage project metadata, dependencies, and tool configuration. For many projects, this single file replaces setup.py, requirements.txt, and other configuration files.

```toml
# Example pyproject.toml section for Poetry
[tool.poetry]
name = "my-awesome-project"
version = "0.1.0"
description = "A project managed by Poetry"
authors = ["Your Name <you@example.com>"]

[tool.poetry.dependencies]
python = "^3.9"
fastapi = "^0.85.0"

[tool.poetry.group.dev.dependencies]
pytest = "^7.1.3"
black = "^22.10.0"
```

- Workflow: Like Pipenv, Poetry manages virtual environments automatically. Its command-line interface is comprehensive and intuitive.

```bash
# Add a new package
poetry add pandas

# Activate the virtual environment shell
# (Poetry 2.x moved this command into the poetry-plugin-shell plugin)
poetry shell

# Install all dependencies from poetry.lock
poetry install

# Build your project for distribution
poetry build

# Publish your project to PyPI
poetry publish
```
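The ^ (caret) constraints in the example pyproject.toml follow Poetry's documented rule: an update is allowed as long as it does not change the left-most non-zero component of the version. The following is a small, self-contained sketch of that rule. The function name `caret_bounds` is hypothetical, and it ignores pre-release and other version suffixes.

```python
def caret_bounds(spec: str) -> tuple[str, str]:
    """Expand a Poetry-style caret constraint into (lower, upper) bounds.

    Sketch of the documented rule: an update may not change the left-most
    non-zero version component. Pre-release and other suffixes are ignored.
    """
    parts = [int(p) for p in spec.lstrip("^").split(".")]
    # Index of the left-most non-zero component; if all are zero, use the last one.
    idx = next((i for i, p in enumerate(parts) if p != 0), len(parts) - 1)
    upper = parts[:idx] + [parts[idx] + 1]

    def fmt(components: list[int]) -> str:
        # Pad to major.minor.patch for display.
        padded = components + [0] * (3 - len(components))
        return ".".join(str(c) for c in padded)

    return fmt(parts), fmt(upper)


# The constraints from the example pyproject.toml.
for constraint in ["^3.9", "^0.85.0", "^7.1.3", "^22.10.0"]:
    lower, upper = caret_bounds(constraint)
    print(f"{constraint}: >={lower},<{upper}")
```

Note the asymmetry: ^3.9 admits anything below 4.0, while ^0.85.0 admits only 0.85.x releases, because for 0.x versions the minor number is treated as the breaking-change indicator.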

Poetry’s dependency resolver is one of its most frequently cited strengths, especially for projects with complex dependency graphs. Its all-in-one nature makes it an excellent choice for both application developers and library authors.

pip-tools: The Unbundled, Unix-like Approach

For those who prefer a more minimalist approach that enhances the existing pip workflow rather than replacing it, pip-tools is an excellent choice. It adheres to the Unix philosophy of doing one thing well.

- Core Concepts: pip-tools provides two main commands:
  - pip-compile: This command reads a requirements.in file (where you list your direct dependencies) and generates a fully pinned requirements.txt file, complete with comments explaining which package(s) required each transitive dependency.
  - pip-sync: This command synchronizes your virtual environment to match the exact contents of the compiled requirements.txt, adding missing packages and removing extraneous ones.
- Workflow: You manage your own virtual environment with venv, but use pip-tools to manage the requirements files.

```bash
# Create and activate a virtual environment, then install pip-tools into it
python -m venv .venv
source .venv/bin/activate
pip install pip-tools

# In requirements.in:
# flask
# requests

# Compile the lock file; this generates requirements.txt with pinned versions
pip-compile requirements.in

# Now, sync your environment
pip-sync
```
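The two pip-tools steps can be modeled in a few lines. This is a simplified illustration of what the commands do, not pip-tools' actual implementation: the hypothetical `compile_lines` renders an already-resolved set of pins as requirements.txt-style lines with simplified "# via" comments (the real output format differs in detail), and the hypothetical `sync_plan` computes the install and uninstall sets a pip-sync-like step would act on. The package names are real projects, but the versions are illustrative and the dependency edges are trimmed.

```python
# Hypothetical resolved result: package -> (pinned version, packages it requires).
RESOLVED = {
    "flask": ("2.2.2", ["werkzeug", "jinja2"]),
    "requests": ("2.28.1", ["urllib3", "certifi"]),
    "jinja2": ("3.1.2", ["markupsafe"]),
    "werkzeug": ("2.2.2", ["markupsafe"]),
    "markupsafe": ("2.1.1", []),
    "urllib3": ("1.26.12", []),
    "certifi": ("2022.9.24", []),
}
DIRECT = {"flask", "requests"}  # what requirements.in lists


def compile_lines(resolved, direct):
    """Render pinned lines with simplified '# via' annotations."""
    # Invert the graph so each package knows which packages require it.
    parents = {name: [] for name in resolved}
    for name, (_, deps) in resolved.items():
        for dep in deps:
            parents[dep].append(name)
    lines = []
    for name in sorted(resolved):
        via = (["-r requirements.in"] if name in direct else []) + sorted(parents[name])
        lines.append(f"{name}=={resolved[name][0]}")
        lines.append(f"    # via {', '.join(via)}")
    return lines


def sync_plan(installed, locked):
    """What a pip-sync-like step must do so `installed` matches `locked`."""
    to_install = {n: v for n, v in locked.items() if installed.get(n) != v}
    to_uninstall = sorted(set(installed) - set(locked))
    return to_install, to_uninstall


print("\n".join(compile_lines(RESOLVED, DIRECT)))

locked = {name: version for name, (version, _) in RESOLVED.items()}
installed = {"flask": "2.2.2", "requests": "2.27.0", "six": "1.16.0"}
to_install, to_uninstall = sync_plan(installed, locked)
print("install/upgrade:", to_install)
print("uninstall:", to_uninstall)
```

The sync step is what distinguishes pip-sync from plain pip install -r: the stray six package is removed, not just left behind.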

pip-tools is perfect for developers who want deterministic builds without adopting a fully integrated tool that manages virtual environments for them. It provides the crucial locking mechanism while keeping the rest of the workflow familiar.

Under the Hood: Why Deterministic Builds Matter

The common thread among all these modern tools is their ability to produce deterministic builds through dependency resolution and lock files. These concepts are critical for professional software development.

What is Dependency Resolution?

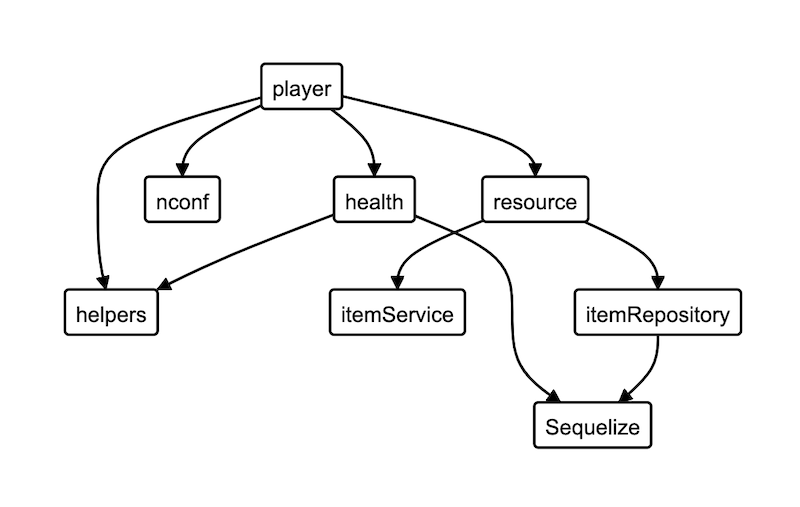

Dependency resolution is the process of finding a set of package versions that satisfies all of a project’s constraints. Imagine your project requires LibraryA>=2.0 and LibraryB. However, LibraryB itself requires LibraryA<3.0. A dependency resolver must navigate this graph of requirements to find a compatible version of LibraryA (e.g., 2.5). A naive resolver, like pip’s legacy resolver (used before pip 20.3), might pick the latest version of LibraryA first (say, 3.1) and never revisit that choice when it later encounters LibraryB’s constraint, leaving you with an inconsistent environment and at most a warning. Modern resolvers, such as pip’s backtracking resolver (the default since pip 20.3) and Poetry’s, consider all constraints together and backtrack when a choice leads to a conflict, so they either find a valid installation set or report the conflict.
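To make the LibraryA/LibraryB example concrete, here is a toy backtracking resolver. It is a sketch under simplifying assumptions: a hard-coded, hypothetical index of available versions, versions written as tuples such as (3, 1) for 3.1, and constraints expressed as Python predicates. It is far simpler than the algorithms pip and Poetry actually use, but it shows the core idea: try the newest candidate first, and back up when a later constraint rules it out.

```python
from typing import Callable, Optional

Version = tuple[int, ...]
Requirement = tuple[str, Callable[[Version], bool]]

# Hypothetical package index: every version available for each library.
INDEX: dict[str, list[Version]] = {
    "LibraryA": [(1, 9), (2, 0), (2, 5), (3, 1)],
    "LibraryB": [(1, 0)],
}

# Dependencies declared by specific releases: LibraryB 1.0 needs LibraryA<3.0.
DEPS: dict[tuple[str, Version], list[Requirement]] = {
    ("LibraryB", (1, 0)): [("LibraryA", lambda v: v < (3, 0))],
}


def resolve(pending: list[Requirement],
            pinned: Optional[dict[str, Version]] = None) -> Optional[dict[str, Version]]:
    """Return a version per package that satisfies every requirement, or None."""
    pinned = pinned or {}
    if not pending:
        return pinned
    (name, allowed), rest = pending[0], pending[1:]
    if name in pinned:
        # Already chosen: the existing choice must satisfy this constraint too,
        # otherwise fail and let the caller backtrack to an earlier decision.
        return resolve(rest, pinned) if allowed(pinned[name]) else None
    for version in sorted(INDEX[name], reverse=True):  # try newest first
        if not allowed(version):
            continue
        # Tentatively pin, then queue this release's own dependencies.
        result = resolve(rest + DEPS.get((name, version), []), {**pinned, name: version})
        if result is not None:
            return result
    return None  # no candidate works: backtrack


ROOT: list[Requirement] = [
    ("LibraryA", lambda v: v >= (2, 0)),
    ("LibraryB", lambda v: True),
]
print(resolve(ROOT))
# → {'LibraryA': (2, 5), 'LibraryB': (1, 0)}
```

The resolver first pins LibraryA 3.1, discovers LibraryB's LibraryA<3.0 constraint, abandons that choice, and settles on 2.5. A naive resolver stops after the first pin.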

The Power of the Lock File

A lock file (poetry.lock, Pipfile.lock, or a requirements.txt compiled by pip-compile) is the output of a successful dependency resolution. It is a snapshot of the *exact* versions of every single package, both direct and transitive, that makes up your environment. Poetry and Pipenv lock files also record cryptographic hashes of each package file; pip-compile adds them when run with --generate-hashes.

This provides two immense benefits:

- Reproducibility: When a new developer or a CI/CD pipeline installs dependencies from the lock file (e.g., with poetry install or pipenv sync), they get exactly the same package versions as everyone else on the team. This eliminates the “it works on my machine” bugs caused by differing package versions.
- Security: The stored hashes ensure that the package files you install are the same ones that were locked. If the hash of a downloaded package doesn’t match the hash in the lock file, the installation fails, which protects you against tampered or substituted files in a supply-chain attack.
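The hash check described above amounts to comparing a digest of the downloaded file with the recorded value. Here is a minimal sketch using hashlib, assuming the "sha256:<hex>" form used by Pipfile.lock and by pip's --hash option. The file name, its contents, and the helpers `file_digest` and `verify` are hypothetical, and the "download" is simulated with a temporary file.

```python
import hashlib
import tempfile
from pathlib import Path


def file_digest(path: Path, algorithm: str = "sha256") -> str:
    """Hash a file in chunks and return it in 'algorithm:hexdigest' form."""
    h = hashlib.new(algorithm)
    with path.open("rb") as f:
        for chunk in iter(lambda: f.read(65536), b""):
            h.update(chunk)
    return f"{algorithm}:{h.hexdigest()}"


def verify(path: Path, locked_hashes: list[str]) -> None:
    """Raise ValueError unless the file matches one of the locked hashes."""
    # Lock files typically record several hashes per package (one per wheel or sdist).
    actual = file_digest(path)
    if actual not in locked_hashes:
        raise ValueError(f"hash mismatch for {path.name}: got {actual}")


mismatch_detected = False
with tempfile.TemporaryDirectory() as tmp:
    artifact = Path(tmp) / "example_pkg-1.0-py3-none-any.whl"  # hypothetical file
    artifact.write_bytes(b"pretend wheel contents")
    locked = [file_digest(artifact)]  # what the lock file recorded at lock time

    verify(artifact, locked)  # passes: the file is unchanged

    artifact.write_bytes(b"tampered contents")  # simulate a substituted download
    try:
        verify(artifact, locked)
    except ValueError as err:
        mismatch_detected = True
        print(err)
```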

Practical Guidance: Which Tool Should You Use?

With several excellent options available, choosing the right tool depends on your project’s needs and your team’s preferences. There is no single “best” tool, only the best tool for a given context.

When to Choose Poetry

Poetry is arguably the most powerful and complete solution. It is an excellent choice if:

- You are starting a new project from scratch.

- You are developing a library that will be published to PyPI.

- You want a single, unified command-line interface for all packaging and environment tasks.

- Your project has a complex set of dependencies that could benefit from its advanced resolver.

- You want to align with the modern pyproject.toml standard, which is becoming the central point for Python project configuration. The standardization around this file is important for the long-term health of the ecosystem.

When to Choose Pipenv

Pipenv is a solid and mature tool, particularly well-suited for application development. Consider Pipenv if:

- You are primarily developing applications that will not be published as libraries.

- Your team values that Pipenv is maintained under the PyPA umbrella.

- You want an integrated tool but find Poetry’s feature set to be more than you need.

When to Choose pip-tools

pip-tools offers a great middle ground, adding determinism without a complete workflow overhaul. It’s the right choice if:

- You want to add lock file capabilities to an existing project that already uses requirements.txt.
- You prefer to manage your virtual environments manually with venv.
- You value simplicity and a minimal toolset that complements, rather than replaces, standard tools like pip.

The Future of Python Packaging

The transition from a loose requirements.txt to a modern, lock-file-based workflow represents a significant leap forward in Python development best practices. By embracing tools like Poetry, Pipenv, or pip-tools, you are not just adopting a new command; you are adopting a philosophy of reproducibility, security, and clarity. These tools eliminate a whole class of common errors, allowing developers to focus on writing code instead of debugging environments.

The choice between them often comes down to a preference between an all-in-one, opinionated framework (Poetry) and a more unbundled, composable approach (pip-tools). Regardless of your choice, the key takeaway is to use a dependency resolver and a lock file. Doing so will make your projects more robust, your team more efficient, and your deployments far more predictable. The continued innovation in this space ensures that managing Python projects will only become easier and more powerful over time.