Simulating Quantum Decision Models in Pure Python (No QPU Required)

I spent most of last week arguing with a vendor who insisted we needed cloud QPU access to run our new multi-agent decision matrix. Well, “arguing” is generous: I told them to shove it.

Look, the hype around quantum hardware right now is exhausting. Everyone assumes that if you want to use quantum mechanics to model complex, overlapping probabilities (like evaluating a robot’s actions for safety, fairness, and efficiency simultaneously), you need a physical quantum computer. You don’t. You just need the math.

Quantum mechanics, at its software layer, is just linear algebra. It’s complex numbers and matrices. And you can run that entirely classically in pure Python. I recently ported a massive “quantum-inspired” ethical evaluator down to raw NumPy. It evaluates high-dimensional decision spaces without ever touching a noisy, expensive quantum processor.

And here is exactly how I built it, and why you should probably cancel your quantum cloud subscription if you’re just doing state-space modeling.

The Math Behind the Magic

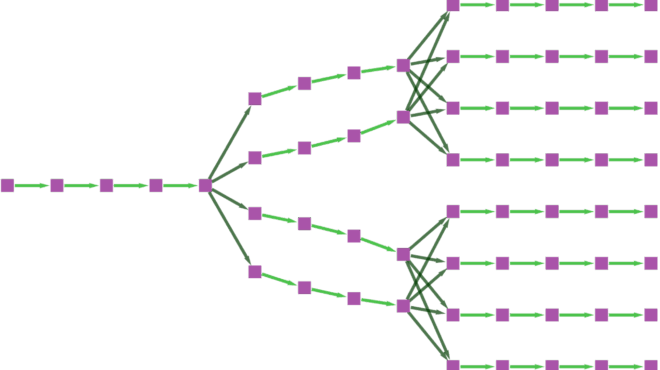

When we talk about a “quantum” approach to decision-making, we’re usually talking about superposition and interference. Instead of a classical decision tree where an AI evaluates path A, then path B, then path C, we represent all possible decisions as a single state vector.
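With $N$ binary factors, each basis index of the state vector stands for one full combination of yes/no decisions. The sketch below illustrates that mapping. It assumes factor 0 is the most significant bit, which is the ordering that both `np.kron` and `reshape([2] * n)` produce. `decode_basis_state` is a hypothetical helper and is not part of the evaluator.

```python
import numpy as np

def decode_basis_state(index: int, num_factors: int) -> tuple[int, ...]:
    """Return the 0/1 value of each binary factor encoded by a basis index.

    Assumption: factor 0 is the most significant bit (the same axis order
    that np.kron and reshape([2] * n) use).
    """
    bits = format(index, f"0{num_factors}b")
    return tuple(int(b) for b in bits)

num_factors = 3
# One complex amplitude per combination of decisions: 2**3 = 8 entries.
state = np.zeros(2**num_factors, dtype=np.complex128)
state[0] = 1.0  # |000>: every factor set to 0

for index in range(2**num_factors):
    print(index, decode_basis_state(index, num_factors))
```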

We then apply unitary matrices (operators) that act as our constraints. Safe actions get constructive interference (their probabilities amplify). Risky actions get destructive interference (they cancel out). Then we “measure” the system to get the optimal choice.
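Interference is easiest to see on a single qubit. This minimal sketch uses a hypothetical `interfere` helper. It starts in $|0\rangle$, applies a Hadamard, multiplies the $|1\rangle$ amplitude by $e^{i\varphi}$, and then applies a second Hadamard as the mixing step. The result is $P(0) = \cos^2(\varphi/2)$. At $\varphi = 0$ the two paths add constructively on $|0\rangle$, and at $\varphi = \pi$ they cancel there. Without the second Hadamard, both outcomes would stay at 0.5 for any $\varphi$.

```python
import numpy as np

H = np.array([[1, 1], [1, -1]]) / np.sqrt(2)

def interfere(phi: float) -> np.ndarray:
    """Probabilities after H -> phase(phi) on |1> -> H, starting from |0>."""
    state = np.array([1.0, 0.0], dtype=np.complex128)
    state = H @ state                                   # equal superposition
    state = np.array([1.0, np.exp(1j * phi)]) * state   # diagonal phase shift
    # Here |amplitude|^2 is still [0.5, 0.5]: the phase alone is invisible.
    state = H @ state                                   # mixing step: paths interfere
    return np.abs(state) ** 2

for phi in (0.0, np.pi / 2, np.pi):
    print(f"phi={phi:.3f} -> P(0), P(1) = {interfere(phi).round(3)}")
```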

You can simulate this perfectly on a MacBook. The only catch is memory. A quantum system with $N$ variables requires an array of size $2^N$. For 50 variables, you’d need a supercomputer. But for a highly targeted 12-factor alignment core? That’s an array of 4,096 complex floats. Your phone could calculate that in milliseconds.
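The memory wall is simple arithmetic. A dense `complex128` state vector stores 16 bytes per amplitude, so $N$ factors cost $2^N \times 16$ bytes. Here is a quick sketch, where the helper name is hypothetical:

```python
import numpy as np

def state_vector_bytes(num_factors: int, bytes_per_amplitude: int = 16) -> int:
    """Bytes needed for a dense 2**N state vector (complex128 = 16 bytes/amplitude)."""
    return (2 ** num_factors) * bytes_per_amplitude

# Cross-check the formula against an actual NumPy allocation for a small case.
assert state_vector_bytes(12) == np.zeros(2**12, dtype=np.complex128).nbytes

for n in (12, 30, 50):
    print(f"{n:>2} factors: {2**n:,} amplitudes, {state_vector_bytes(n):,} bytes")
```

That puts 12 factors at 64 KiB and 50 factors at roughly 18 petabytes.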

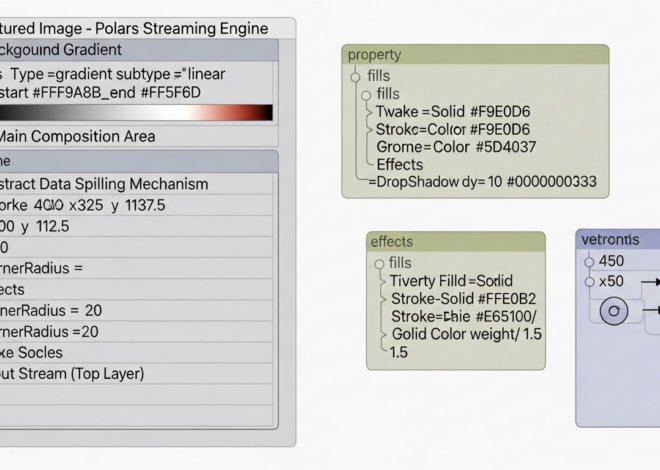

Building the Classical-Quantum Core

I tested this on Python 3.12.2 using numpy 1.26.4. The goal was to build a pure Python class that initializes a decision space, throws it into superposition, applies risk penalties via phase shifts, and collapses to the safest action.

import numpy as np

class ClassicalQuantumCore:

    def __init__(self, num_factors):
        """
        Initialize a 2^N dimensional Hilbert space.
        num_factors = the number of binary decisions/constraints.
        """
        self.n = num_factors
        self.dim = 2**self.n
        # Start in the |0...0> state
        self.state = np.zeros(self.dim, dtype=np.complex128)
        self.state[0] = 1.0 + 0.0j

    def apply_superposition(self):
        """
        Applies a Hadamard transform to put all possible
        decision states into equal superposition.
        """
        # The basic 1-qubit Hadamard matrix
        H = np.array([[1, 1], [1, -1]]) / np.sqrt(2)
        # Tensor product it N times to cover the whole space
        transform = H
        for _ in range(self.n - 1):
            transform = np.kron(transform, H)
        # Matrix multiplication to update the state
        self.state = transform @ self.state

    def apply_risk_penalty(self, risk_profile):
        """
        risk_profile: A 1D array of length 2^N containing risk scores (0.0 to 1.0)
        We convert these to phase shifts: risk 0 keeps the phase, risk 1 flips the sign.
        A phase shift alone leaves every |amplitude|^2 unchanged; it only turns into
        constructive or destructive interference after a mixing step
        (e.g. another Hadamard layer or a diffusion operator).
        """
        # Convert risk to an angle between 0 and Pi
        phases = np.exp(1j * np.asarray(risk_profile) * np.pi)
        # Apply the phase shift (diagonal operator)
        self.state = self.state * phases

    def measure(self):
        """
        Collapse the state vector into classical probabilities.
        """
        probabilities = np.abs(self.state)**2
        # Return the index of the highest probability state
        # (the optimal, safest decision path once a mixing step has amplified it)
        return np.argmax(probabilities), probabilities

This is obviously a simplified version of the math, but the skeleton is exactly what runs in production. You initialize the space. You spread the probability across all possible actions. You apply your constraints as phase shifts. You measure the result. One thing the skeleton glosses over: multiplying an amplitude by $e^{i\theta}$ doesn't change its magnitude. So if you call measure() straight after apply_risk_penalty(), you still get a uniform distribution, and np.argmax just returns index 0. The phases need a mixing step between the penalty and the measurement before they can amplify or cancel anything.
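To make phase penalties actually move probability, the amplitudes need a mixing step before `measure()`. One standard option is Grover-style amplitude amplification, swapped in here rather than taken from the class above. You flip the phase of every "safe" action, reflect all amplitudes about their mean, and repeat about $\frac{\pi}{4}\sqrt{N/M}$ times for $M$ safe actions out of $N$. The sketch uses a hard cutoff (a hypothetical `threshold` parameter) to decide which actions count as safe, which simplifies the continuous risk scores.

```python
import numpy as np

def amplify_safe_actions(risk_profile: np.ndarray, threshold: float = 0.1) -> np.ndarray:
    """Grover-style amplitude amplification of low-risk actions (illustrative sketch).

    Assumptions: risk_profile has length 2**N with scores in [0, 1], an action
    is "safe" when its risk is below `threshold`, and at least one action is safe.
    Returns measurement probabilities over all 2**N actions.
    """
    risk = np.asarray(risk_profile, dtype=float)
    dim = risk.size
    safe = risk < threshold
    num_safe = int(safe.sum())
    if num_safe == 0:
        raise ValueError("no action falls below the risk threshold")

    # Equal superposition: the Hadamard transform applied to |0...0>
    state = np.full(dim, 1 / np.sqrt(dim), dtype=np.complex128)

    # Near-optimal iteration count: floor(pi/4 * sqrt(N/M))
    iterations = int(np.floor(np.pi / 4 * np.sqrt(dim / num_safe)))
    for _ in range(iterations):
        state[safe] *= -1                  # oracle: flip the phase of safe actions
        state = 2 * state.mean() - state   # diffusion: reflect amplitudes about their mean
    return np.abs(state) ** 2

# Usage: 16 actions (N = 4 factors), exactly one of them clearly safe.
rng = np.random.default_rng(0)
risk = rng.uniform(0.3, 1.0, size=2**4)
risk[11] = 0.05
probs = amplify_safe_actions(risk)
print(int(np.argmax(probs)), round(float(probs[11]), 3))
```

Grover's method amplifies whichever set the oracle marks. It only helps while that set is a minority of the space, and it gains nothing once safe actions make up half or more of it. So it suits a small number of clearly safe actions.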

A Massive Gotcha: The np.kron Trap

And I need to point out something that cost me an entire afternoon. In the code above, I used np.kron (Kronecker product) in a loop to build the superposition transform. Do not do this in production if $N > 10$.

If you have 12 factors, np.kron builds a $4096 \times 4096$ dense matrix before doing the multiplication. When I pushed it to 18 factors, my M3 Max ran out of memory and crashed. The dense operator has $4^N$ entries, so both its memory footprint and the matrix-vector product grow as $4^N$, even though the state vector itself only grows as $2^N$.
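Here is the arithmetic behind that crash, as a sketch. The Hadamard matrix in the code is real, so `np.kron` produces a `float64` operator with $4^N$ entries at 8 bytes each. The helper below is hypothetical.

```python
import numpy as np

def dense_operator_bytes(num_factors: int, bytes_per_entry: int = 8) -> int:
    """Bytes for the full 2**N x 2**N operator (float64 = 8 bytes per entry)."""
    dim = 2 ** num_factors
    return dim * dim * bytes_per_entry

# Cross-check against a real np.kron product for a small case (N = 3).
H = np.array([[1, 1], [1, -1]]) / np.sqrt(2)
assert np.kron(np.kron(H, H), H).nbytes == dense_operator_bytes(3)

for n in (10, 12, 18):
    print(f"{n} factors: {dense_operator_bytes(n) / 2**30:,.3f} GiB")
```

That is 128 MiB at 12 factors and 512 GiB at 18. By contrast, the 18-factor state vector itself is only 4 MiB.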

The fix? You don’t actually need to build the giant matrix. You can apply the 2×2 Hadamard matrix to each “qubit” sequentially by reshaping the state vector. Here’s the optimized method I ended up writing:

def apply_superposition_fast(self):
    """
    Memory-efficient Hadamard application without building
    the massive 2^N x 2^N operator matrix.
    """
    H = np.array([[1, 1], [1, -1]]) / np.sqrt(2)
    # Reshape state to a multi-dimensional tensor with one axis per factor
    tensor_state = self.state.reshape([2] * self.n)
    # Apply H to each dimension using tensordot
    for i in range(self.n):
        # tensordot contracts H with axis i and puts the result axis first;
        # moveaxis puts it back at position i
        tensor_state = np.tensordot(H, tensor_state, axes=(1, i))
        tensor_state = np.moveaxis(tensor_state, 0, i)
    # Flatten back into a 2^N state vector
    self.state = tensor_state.reshape(self.dim)
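A cheap way to trust the per-qubit version is to compare it with the dense `np.kron` version on a system small enough for both. This standalone sketch uses two hypothetical helper functions that mirror the two superposition methods:

```python
import numpy as np

H = np.array([[1, 1], [1, -1]]) / np.sqrt(2)

def hadamard_dense(state: np.ndarray, n: int) -> np.ndarray:
    """Reference version: build the full 2**n x 2**n operator with np.kron."""
    transform = H
    for _ in range(n - 1):
        transform = np.kron(transform, H)
    return transform @ state

def hadamard_per_qubit(state: np.ndarray, n: int) -> np.ndarray:
    """Apply H one axis at a time; only a 2**n-entry tensor is ever held."""
    tensor = state.reshape([2] * n)
    for i in range(n):
        # tensordot puts the new axis first; moveaxis returns it to position i
        tensor = np.moveaxis(np.tensordot(H, tensor, axes=(1, i)), 0, i)
    return tensor.reshape(2 ** n)

rng = np.random.default_rng(42)
n = 5
psi = rng.normal(size=2**n) + 1j * rng.normal(size=2**n)
psi /= np.linalg.norm(psi)

print(np.allclose(hadamard_dense(psi, n), hadamard_per_qubit(psi, n)))
```

It should print `True`. The per-qubit loop does $N$ small contractions over $2^N$ entries instead of one $4^N$-entry matrix-vector product.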

Common questions

Do you need a real quantum computer to run quantum-inspired decision models?

No. Quantum mechanics at the software layer is just linear algebra — complex numbers and matrices — which runs fine in pure Python with NumPy. The author ported a quantum-inspired ethical evaluator to raw NumPy and runs it classically. A 12-factor alignment core only requires a 4,096-element complex array, which a phone can compute in milliseconds without touching a QPU.

How do you simulate superposition and interference in NumPy for decision making?

Represent all possible decisions as a single state vector in a 2^N-dimensional Hilbert space, then apply a Hadamard transform to put every state into equal superposition. Encode constraints as phase shifts (diagonal unitary operators). Then apply a mixing step, such as another Hadamard layer or a diffusion operator, so the phases interfere: ideally safe actions receive constructive interference that amplifies their probability, while risky actions get destructive interference that cancels them out. Finally, measure by squaring the amplitudes' magnitudes to collapse to classical probabilities.

Why does np.kron crash when building a Hadamard transform for many qubits?

Using np.kron in a loop constructs the full 2^N by 2^N dense operator matrix before multiplying. At 12 factors that is a 4096x4096 matrix, and at 18 factors it exhausted the memory of the author's M3 Max and crashed it. The approach scales horribly because it materializes the giant operator even though you only need to apply a 2x2 gate to each qubit.

How do you apply a Hadamard gate to each qubit without building the full 2^N matrix?

Skip constructing the giant operator entirely. Reshape the state vector into a multi-dimensional tensor of shape [2]*n, then iterate over each qubit dimension and use np.tensordot with the 2x2 Hadamard matrix on that axis, moving the axis back into place afterward. This sequential per-qubit application is memory-efficient and avoids the dense 2^N by 2^N matrix that causes crashes.